There’s a productivity orthodoxy that goes unquestioned in most engineering and knowledge-work cultures: break everything into smaller tasks. Sprint cards, Jira tickets, daily todos — the advice is always to decompose, subdivide, granularize. And it’s not wrong, exactly. The problem is that nobody talks about where this approach starts costing you more than it saves.

The cost is real, it compounds, and most task management systems are built in ways that hide it from you.

The Overhead You Don’t Count as Work

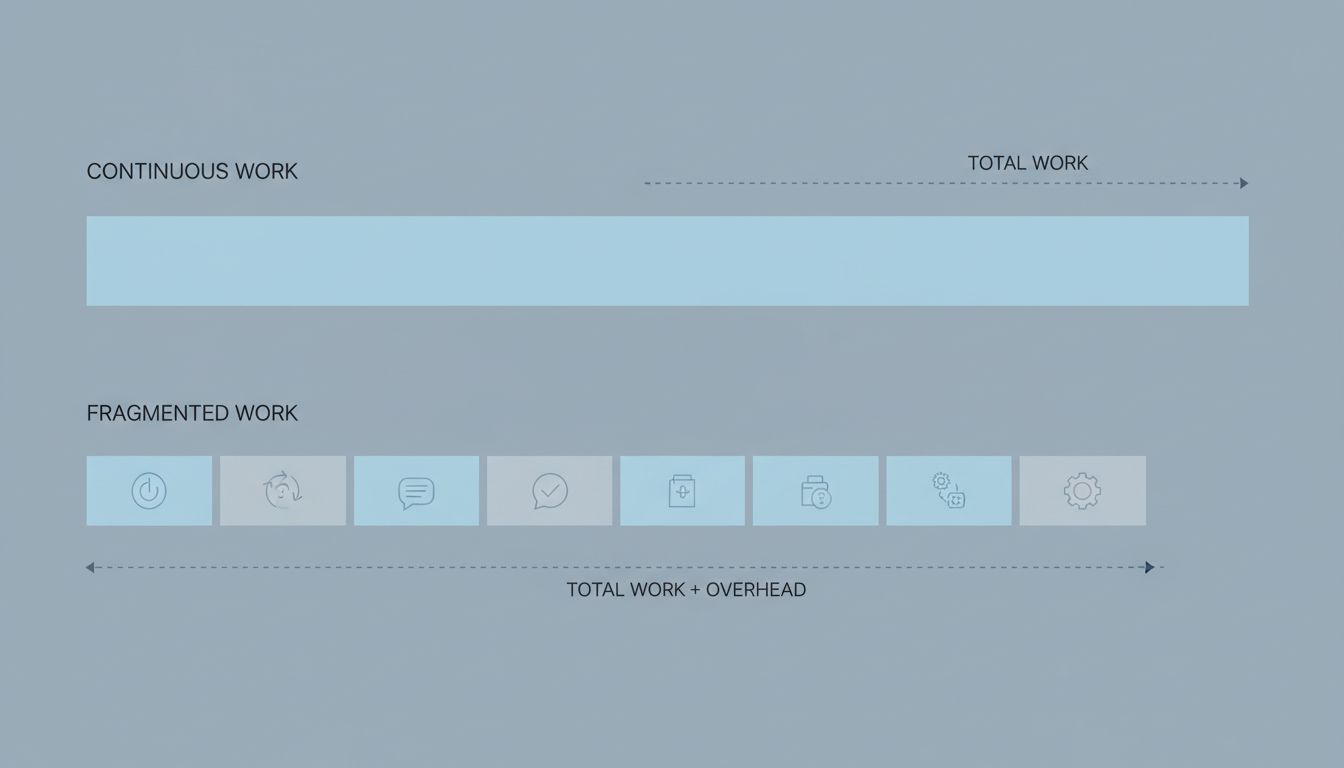

Every task boundary is a transaction. You write a description, assign it, link it to a parent, estimate it, move it through statuses, review it in standup, close it out. If your actual work takes twenty minutes and your task management ritual takes ten, you’re paying a fifty percent overhead tax on that piece of work. That’s not a hypothetical — it’s a rough description of how many teams I’ve seen operate.

The deeper issue is that these costs are invisible in your productivity accounting. You finish the day having closed eight tickets and feel productive. What you don’t see is that two hours of your day went to administering those tickets rather than doing the underlying work. The system shows you green checkmarks. It doesn’t show you the ratio.

This is Goodhart’s Law, borrowed from economics and applied to task management: when a measure becomes a target, it ceases to be a good measure. Ticket count is a legible proxy for progress, so people optimize for it. But a closed ticket is not the same thing as completed work. It’s a symbol that completed work may have happened.
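To make the hidden ratio concrete, here is a minimal Python sketch that tallies a day’s tickets. The `Entry` type and all sample numbers are hypothetical. The first case reuses the ticket with twenty minutes of work and ten minutes of ritual from the overhead example; the second is an invented day of eight tickets, each costing fifteen minutes of administration.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    """One ticket's time split (hypothetical structure, for illustration only)."""
    name: str
    work_minutes: float   # time spent on the underlying work
    admin_minutes: float  # describing, estimating, moving, and reporting the ticket


def overhead_ratio(entries: list[Entry]) -> float:
    """Admin time as a fraction of work time: the 'tax' on the work itself."""
    work = sum(e.work_minutes for e in entries)
    admin = sum(e.admin_minutes for e in entries)
    # With no recorded work, any overhead is unbounded relative to it.
    return admin / work if work else float("inf")


def admin_share(entries: list[Entry]) -> float:
    """Admin time as a fraction of all logged time."""
    work = sum(e.work_minutes for e in entries)
    admin = sum(e.admin_minutes for e in entries)
    total = work + admin
    return admin / total if total else 0.0


single = [Entry("small-fix", work_minutes=20, admin_minutes=10)]
print(f"{overhead_ratio(single):.0%}")  # 50%

# Hypothetical day: eight tickets, each 30 minutes of work and 15 of admin.
day = [Entry(f"ticket-{i}", work_minutes=30, admin_minutes=15) for i in range(8)]
print(sum(e.admin_minutes for e in day))  # 120 (two hours of ticket administration)
print(f"{admin_share(day):.0%}")          # 33%
```

The same ten minutes reads as a 50% tax relative to the work but only a third of the total time spent. Both framings are useful, and a board of green checkmarks shows neither.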

Context Switching Compounds Across Small Tasks

Breaking work into very small units also multiplies context switching. Research by Gloria Mark at UC Irvine has consistently shown that interruptions and task switches carry a substantial recovery cost; her work suggests it can take over twenty minutes to get back to an interrupted task. Multiply that, even loosely, by the number of times a day you deliberately switch between atomic sub-tasks and the math gets uncomfortable quickly.
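Here is a back-of-the-envelope version of that multiplication as a Python sketch. The model is deliberately crude and is an assumption, not a finding from the research: it charges the full recovery time for every switch, uses a default of twenty minutes taken loosely from the figure above, and assumes an eight-hour (480-minute) workday.

```python
def refocus_cost(switches_per_day: int,
                 minutes_per_switch: float = 20.0,
                 workday_minutes: float = 480.0) -> tuple[float, float]:
    """Return (minutes lost to refocusing, fraction of the workday lost).

    Crude linear model (an assumption): every switch pays the full
    recovery time, and losses are capped at the length of the workday.
    """
    lost = min(switches_per_day * minutes_per_switch, workday_minutes)
    return lost, lost / workday_minutes


for switches in (4, 8, 12):
    minutes, fraction = refocus_cost(switches)
    print(f"{switches:>2} switches -> {minutes:.0f} min ({fraction:.0%} of the day)")
```

Under these assumptions, twelve self-inflicted switches cost half the workday. Real recovery times vary widely, so treat `minutes_per_switch` as a knob to set from your own experience.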

The argument for small tasks is that they’re easier to pick up after interruption. That’s true when the interruption is external and unavoidable. But when you’re voluntarily switching between your own micro-tasks because that’s how you’ve structured your work, you’ve designed interruption into your workflow. You’re not managing distraction; you’re manufacturing it.

Deep work, the kind that produces your most valuable output, requires holding a large mental model in working memory. Implementing a non-trivial feature means keeping data flow, edge cases, API contracts, and performance constraints all live in your head at once. Every time you task-switch, you flush part of that context, and rebuilding it costs time. Very small tasks encourage very frequent context switches.

When Decomposition Actively Damages the Work

There’s a class of problem where fragmenting the work doesn’t just create overhead — it actively degrades the output. This happens when the sub-tasks need to be coherent with each other in ways that only become visible when you’re holding the whole thing in your head.

Refactoring is a good example. You can break a refactoring job into ten small tickets, assign them across two developers, and execute them all. You can also end up with code that’s locally consistent but globally incoherent, because the design decisions that make the whole thing hang together were never captured in any individual ticket. The tickets described the work. They didn’t capture the thinking that should have unified the work.

This is a real failure mode in distributed teams, and it’s underappreciated. The task granularity that makes work parallelizable can destroy the conceptual integrity of the result. Fred Brooks made this case in The Mythical Man-Month (1975). He called conceptual integrity the most important consideration in system design, and he argued that adding more workers (or, by extension, more tickets) to a project doesn’t speed it up proportionally, because the coordination cost grows faster than the parallelism helps. The same principle applies within a single person’s workflow when the coordination overhead is administrative rather than interpersonal.
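Brooks’s arithmetic is simple: if every part of a task must be coordinated with every other part, the number of pairwise paths among n parts is n(n−1)/2. Reading n as tickets rather than people is the analogy this section draws, not something Brooks claimed. This Python sketch just counts those pairs and treats every pair as needing coordination, which is the worst case.

```python
def pairwise_paths(n: int) -> int:
    """Distinct pairs among n parts that might need coordinating: n(n-1)/2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


for n in (2, 5, 10):
    print(n, pairwise_paths(n))
# 2 1
# 5 10
# 10 45
```

Splitting the refactoring job into ten tickets creates up to 45 pairs whose design decisions could drift apart, while the available parallelism (two developers) stays fixed.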

The Right Granularity Is Not “Smaller”

None of this means you should work in huge undifferentiated blobs. Large tasks have their own failure modes: it’s hard to know where to start, hard to track progress, hard to hand off. The goal isn’t maximizing task size. The goal is matching task size to the natural shape of the work.

Some work is genuinely atomic — a specific bug fix, a config change, a copy edit. Break that into sub-tasks and you’ve invented busywork. Other work has a natural intermediate grain: a feature that has distinct phases (design, implementation, testing) where those phases are actually separable and can stand alone. Break that at the phase boundaries, not below them.

The heuristic I find most useful is asking: does this sub-task have an independent definition of done? If the answer is “yes, and I can verify it without running the whole system,” it’s probably the right unit. If the answer is “well, it’s done when the parent task is done,” you’ve created a sub-task that’s really just a checklist item with extra bureaucracy around it. A task system that matches how you actually work will generally serve you better than one optimized for appearances.
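The definition-of-done test can be written down as a tiny triage function. This is a hypothetical Python sketch: the `SubTask` fields are invented for illustration. The heuristic doesn’t settle the middle case (a sub-task with its own done criteria that can’t be verified in isolation), so the sketch flags that case as a judgment call rather than guessing.

```python
from dataclasses import dataclass


@dataclass
class SubTask:
    """Hypothetical record of the two questions the heuristic asks."""
    title: str
    has_own_done: bool      # is "done" defined independently of the parent task?
    verifiable_alone: bool  # can that be checked without running the whole system?


def triage(task: SubTask) -> str:
    if not task.has_own_done:
        # "Done when the parent is done" means it is really a checklist item.
        return "fold into parent as a checklist item"
    if task.verifiable_alone:
        return "keep as its own task"
    # The heuristic does not decide this case; leave it to judgment.
    return "judgment call"


tasks = [
    SubTask("Add retry to payment client", has_own_done=True, verifiable_alone=True),
    SubTask("Wire up the remaining pieces", has_own_done=False, verifiable_alone=False),
]
for t in tasks:
    print(f"{t.title}: {triage(t)}")
```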

How to Tell When You’re Over-Decomposing

There are a few reliable signals. The first is when you spend more time updating task status than doing the underlying work. The second is when a task’s description is longer and more complex than the work it describes. The third, and most telling, is when you feel productive all day and can’t point to anything meaningfully finished.

That last one is the real diagnostic. Productivity systems can create an illusion of motion. Tickets move. Boards update. Standups report progress. But if the thing you’re actually trying to build isn’t measurably closer to done, the granularity of your task system is serving the system, not the work.
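The three signals can be turned into a rough end-of-day check. The following Python sketch is hypothetical throughout: the `DayLog` fields are invented. Comparing description length with the size of the actual change (for example, lines in a diff) is only one possible proxy for a description that is “longer and more complex than the work it describes,” and it is most meaningful for code.

```python
from dataclasses import dataclass


@dataclass
class DayLog:
    """Hypothetical end-of-day tallies; how you collect them is up to you."""
    status_minutes: float    # updating boards, tickets, and standup notes
    work_minutes: float      # time on the underlying work
    description_lines: int   # lines of ticket description written today
    change_lines: int        # size of the actual change (proxy, e.g. diff lines)
    tickets_closed: int
    outcomes_finished: int   # things you could demo, ship, or hand off


def over_decomposition_signals(day: DayLog) -> list[str]:
    """Return which of the three warning signs fired for this day."""
    signals = []
    if day.status_minutes > day.work_minutes:
        signals.append("more time on status than on the work")
    if day.description_lines > day.change_lines:
        signals.append("descriptions larger than the changes they describe")
    if day.tickets_closed > 0 and day.outcomes_finished == 0:
        signals.append("tickets closed, nothing meaningfully finished")
    return signals


busy_day = DayLog(status_minutes=130, work_minutes=110, description_lines=60,
                  change_lines=25, tickets_closed=8, outcomes_finished=0)
for s in over_decomposition_signals(busy_day):
    print("-", s)
```

The third check carries the most weight, for the reason given above: the first two measure friction, while the third measures whether the work itself moved.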

The overhead isn’t just time. It’s attention. Every task boundary is a decision point: is this the right next thing? Should I re-estimate? Is this still relevant? These micro-decisions drain cognitive resources that could go toward the actual problem. The best task system is the one that gets out of your way fastest — and sometimes that means merging five tickets back into one honest piece of work.