The standard narrative around machine learning performance focuses on model architecture, hyperparameter tuning, and compute. These are tractable, visible problems. What’s harder to see is that your model’s ceiling is often set much earlier, in the preprocessing step, by decisions that feel like hygiene but function like bets.

Preprocessing is where you decide what counts as signal and what counts as noise. The trouble is, you’re making that call before you fully understand the problem.

The Outlier Removal Problem

Consider the most common preprocessing operation: removing outliers. The standard approach is to drop observations that fall outside some threshold, often 1.5 times the interquartile range, or values beyond two or three standard deviations from the mean. This is statistically defensible and usually described in tutorials as “cleaning” the data, which implies the outliers were dirt.

But outliers in your training data are frequently the most informative examples your model will ever see. A fraud detection system trained on transaction data after aggressive outlier removal will learn the center of the distribution very well. It will be mediocre at catching fraud, because fraudulent transactions are, by definition, unusual. You have just trained your model to ignore the thing it needs to detect.

This isn’t a hypothetical. It’s a structural problem in how outlier removal is taught and applied. The decision to remove an outlier should follow from domain understanding, not from statistical distance. A blood pressure reading of 200/120 is an outlier. It is also a medical emergency, and if you’re building a clinical risk model, it is one of the most important training examples you have.

Imputation Is Encoding an Assumption

Missing data is the other major preprocessing fork where teams tend to apply defaults without interrogating them. The options are roughly: drop rows with missing values, impute with the mean or median, impute with a model, or carry forward the last observation in time-series contexts.

All of these are fine choices under certain conditions. The problem is that missingness is rarely random, and the choice you make about how to handle it encodes a claim about why the data is missing.

Mean imputation, the most common default, assumes the data is missing completely at random (MCAR). In practice, this is almost never true. In medical datasets, values are often missing because a clinician didn’t order a test, which itself is a clinical decision correlated with patient presentation. Replacing those missing lab values with the population mean doesn’t neutralize the missing data, it actively obscures the information the missingness carries.

A model trained on mean-imputed data learns to treat patients with missing labs as if they had average lab values. A model trained on data where missingness is preserved as a feature can learn that “no test was ordered” is itself a signal. These are different models with different behaviors in production, and the difference is entirely downstream of a checkbox in your preprocessing notebook.

What Gets Dropped Before You Even Notice

Beyond outliers and missing values, there’s a third category of preprocessing loss that’s harder to audit: implicit filtering from upstream decisions about what data to collect or retain.

Many teams work with data that was shaped by a previous system, a business rule, a prior model, or a logging choice made years ago. If your training data only includes users who completed onboarding, your model has never seen a person who churned during onboarding. If your text classifier was trained on tickets that a human agent resolved, it has no examples of tickets that were misrouted and abandoned. The missing population isn’t in your data at all, so no preprocessing step will surface it.

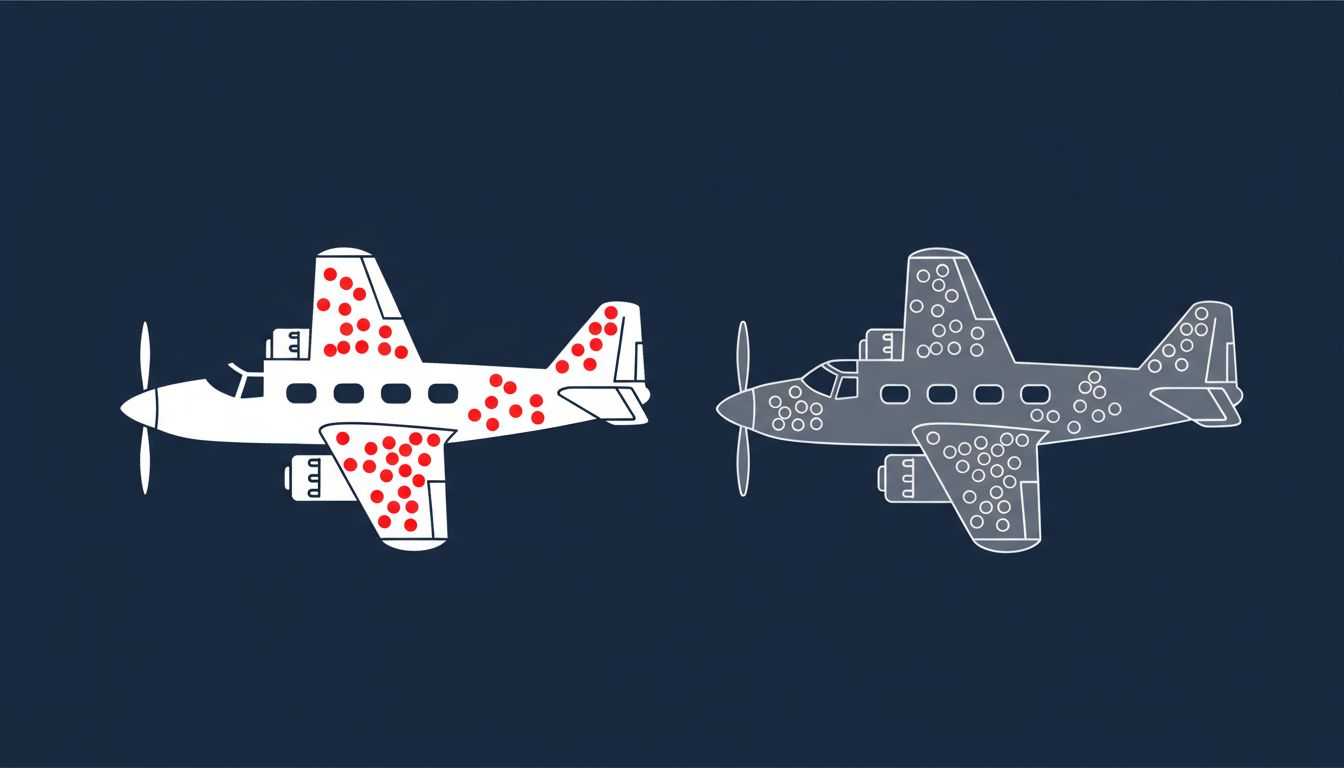

This is survivorship bias, and it’s pervasive. The canonical example from World War II is Abraham Wald’s analysis of aircraft damage. The military wanted to reinforce the parts of returning planes that showed the most bullet holes. Wald pointed out that the sample only included planes that returned. The bullet holes on those planes showed where a plane could be hit and still fly. You needed to reinforce the places with no holes, because planes hit there didn’t come back.

The same logic applies to your training set. The examples that aren’t there are often more informative about failure modes than the examples that are.

How to Actually Audit Your Preprocessing

The practical fix isn’t to stop preprocessing. Raw data is genuinely unusable in most cases, and some normalization, deduplication, and encoding are necessary. The fix is to treat every preprocessing decision as a modeling decision and document it accordingly.

A few concrete practices help. First, log what you remove, not just what you keep. If you drop 4% of your training rows as outliers, have a record of what those rows looked like. Periodically compare the distribution of your dropped data to the distribution of errors your model makes in production. The overlap will often be uncomfortable.

Second, when you impute missing values, add a binary indicator column that records where the imputation happened. This preserves the missingness signal and lets the model learn from it if it’s informative. The overhead is minimal. The potential gain is significant.

Third, ask whether your data collection process itself filters the population you care about. This is the hardest question because it requires stepping outside the dataset and thinking about what it can’t contain. It often means talking to people who understand the business or clinical context, which is unglamorous work but frequently the highest-leverage thing you can do.

Model performance on your held-out test set is a measure of how well you generalize to data that looks like your training data. If your preprocessing systematically removed a category of inputs, your test set has the same gap, and the metric looks clean right up until the model hits that category in production. This is a close cousin of the problem where production bugs hide from your local tests: the evaluation environment doesn’t surface the failure because it was built from the same filtered slice of reality.

The Real Cost

Preprocessing decisions compound. A choice made at the start of a pipeline propagates through every model trained on that data, every evaluation run against a test set derived from it, and every inference made by a system deployed on top of it. By the time you notice a systematic failure in production, the cause is often a decision that felt like bookkeeping months earlier.

This is worth taking seriously not because preprocessing is uniquely treacherous, but because it sits at the boundary between data engineering and modeling, and ownership at boundaries is always weak. Data engineers clean data. ML engineers train models. Nobody owns the question of what meaning the cleaning step destroyed.

The teams that build reliable ML systems tend to have someone who obsesses over this gap. Not a preprocessing critic who blocks every data cleaning step, but someone who insists that each decision be legible, reversible where possible, and connected to an explicit assumption about the domain. That person is doing some of the most important work on the team, and they’re rarely the one giving demos.