Deleting a Database Column Is Surprisingly Hard

The command is four words: ALTER TABLE orders DROP COLUMN notes;. It runs in milliseconds on your laptop. On a production table with 400 million rows, it can lock the entire table, halt writes, and trigger an outage that wakes up your on-call engineer at 2 a.m. The gap between those two realities explains a lot about why database engineering is harder than it looks.

Column deletion is a useful lens for understanding something broader: in software, operations that appear atomic to the developer are rarely atomic to the system. The database has to coordinate storage, indexes, cached query plans, application code that’s already deployed, and other services that may be reading the same table. Dropping a column touches all of them.

What the Database Actually Has to Do

When you issue a DROP COLUMN statement in a standard relational database, several things happen beneath the surface. The database must acquire a lock on the table, rewrite the physical storage to remove the column’s data from every row (in most storage engines), update its internal catalog to reflect the new schema, and invalidate any query plan that referenced the column.

In PostgreSQL, prior to version 11, dropping a column didn’t immediately reclaim space. PostgreSQL marks the column as “dropped” in its system catalog but leaves the data physically in place. The column becomes invisible to queries, but the storage remains until you run VACUUM FULL or CLUSTER, both of which rewrite the entire table and require either an exclusive lock or significant I/O overhead. PostgreSQL 11 introduced the ability to make some ALTER TABLE operations non-blocking, but a full rewrite operation on a large table still requires exclusive access.

MySQL’s behavior depends on which storage engine you’re using and which version you’re running. With InnoDB on MySQL 5.6 and later, some schema changes can be done “online” without blocking reads and writes. But the specifics matter enormously: adding a column to the end of a table is usually online; changing column definitions or dropping columns with certain constraints may still require a full table copy.

The key insight is that “locking” isn’t a simple binary state. Databases distinguish between shared locks (multiple readers allowed) and exclusive locks (only one writer, no readers). A table rewrite requires an exclusive lock. On a high-traffic table, acquiring that lock means waiting for every active transaction to finish, then blocking all new transactions. That wait can be indefinitely long.

The Application Layer Doesn’t Know Yet

Assume you’ve handled the database side carefully. You’ve scheduled a maintenance window, the DROP COLUMN completes, and the schema is updated. The problem isn’t over.

Your application code was deployed before the column was dropped. If it contains any query that references the deleted column, that query will now throw an error. This sounds obvious, but the failure modes are subtle. The column might be referenced in a SELECT * query (which expands at query time, not deploy time, so the error surfaces at runtime). It might appear in an ORM model that auto-maps columns. It might be used in a view, a stored procedure, or a trigger you forgot about.

The correct sequence for a safe column removal is roughly: first deploy application code that no longer reads or writes the column, verify that nothing is actively using it, then drop it from the database. Many teams invert this order or skip the verification step, which is how you get a seemingly routine schema change cascading into a broken API at 11 p.m.

Organizations running multiple services against the same database face an additional problem: you can update your service’s code, but you may not control when other teams’ services get updated. If three microservices query the same table and one of them still references the old column, you can’t safely drop it until all three are updated. This requires coordination that many engineering organizations aren’t set up to handle smoothly.

Zero-Downtime Migration Is a Multi-Step Protocol

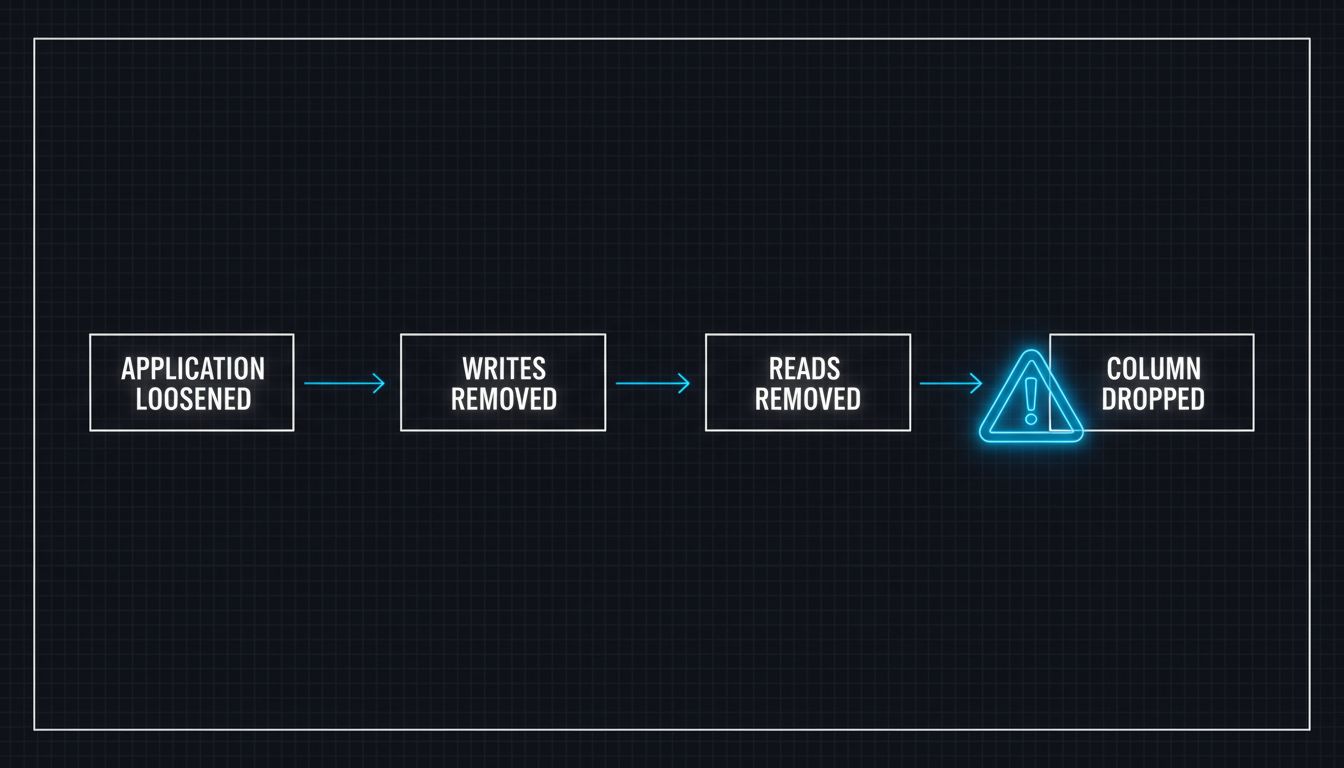

The engineering teams that handle this well treat column removal as a protocol with distinct phases, not a single operation.

The first phase is making the column optional at the application level. If the column is required in your model validation, you loosen that requirement so the app works whether the column exists or not. The second phase is removing writes to the column across all deployed code. The third phase is removing reads. Only after all of this, and after confirming through monitoring that no queries are touching the column, does the fourth phase occur: the actual DROP COLUMN statement.

For very large tables, teams often use a tool like pt-online-schema-change (from Percona) or gh-ost (from GitHub) rather than running ALTER TABLE directly. These tools work by creating a shadow copy of the table without the column, keeping it in sync with triggers as new writes come in, then swapping the tables atomically. GitHub open-sourced gh-ost specifically because they needed schema changes on tables too large to lock, and they’ve documented using it to make changes on tables with hundreds of millions of rows without downtime.

Cloud-managed databases like Amazon Aurora and Google Cloud Spanner have made some of this easier, with online DDL support that reduces lock contention. But they don’t eliminate the application-layer coordination problem. The database can drop the column without a lock; your deployed code still has to be updated before that happens.

Indexes and Foreign Keys Multiply the Problem

A column rarely exists in isolation. If the column you want to drop has an index on it, most databases require you to drop the index first. If it’s part of a composite index, you may need to evaluate whether to drop the entire index or rebuild it without the column. Each of these is its own operation with its own locking behavior.

Foreign key constraints are worse. If another table has a foreign key referencing your column, you cannot drop the column until that constraint is removed. This means you may need schema changes across multiple tables, in the right order, which multiplies your coordination surface. In some database configurations, even checking foreign key constraints requires a full table scan.

The operational lesson here mirrors a broader principle in system design: the cost of removing something is often higher than the cost of adding it, because additions are additive and removals have to account for everything that already depends on what you’re removing. This is why many engineering teams end up with tables full of columns that nobody uses anymore but nobody wants to touch.

The Organizational Cost of “Later”

The most common outcome is that the column doesn’t get dropped. The team intends to clean it up after the feature migration is complete, but there’s always something more urgent. Six months later, a new engineer joins, sees the column in the schema, assumes it’s used somewhere, and starts querying it. The technical debt calcifies.

This isn’t an engineering failure exactly. It’s an honest response to incentives. The work of dropping a column correctly requires coordination, careful sequencing, production monitoring, and scheduled downtime risk, while the benefit is purely cosmetic: a cleaner schema. Against a backlog of features that directly affect users, the schema cleanup loses every time.

Some teams address this by making schema migrations a regular practice, treating them the way they’d treat dependency updates: not glamorous, but scheduled and expected. Others use tooling that tracks column usage at the query level, making it possible to confirm with data that a column is genuinely unused before removing it. The teams that handle this best tend to treat database migrations as production bugs are telling you where your tests lie applies equally here: the schema is a source of latent failure, and the only way to know it’s safe to change is to have instrumented the system well enough to see what it’s doing.

The Command Is Easy. The System Is Not.

The reason column deletion is hard isn’t that database engineers didn’t think to make it easy. It’s that a relational database table is simultaneously a storage structure, a coordination point for concurrent transactions, a contract between the database and its application layer, and (often) a contract between multiple services. Dropping a column requires renegotiating all of those contracts at once.

This is also why the apparent simplicity of SQL is sometimes misleading. The language presents schema changes as commands, implying they’re as controlled and reversible as function calls. In practice, they’re closer to infrastructure changes: consequential, difficult to reverse quickly, and dependent on the state of everything connected to them.

Engineers who understand this stop thinking about column removal as a cleanup task and start thinking about it as a coordination problem. The four-word command is the easy part.

What This Means

For engineers: Never run DROP COLUMN directly on a production table without understanding the locking behavior of your specific database engine and version. Use online schema change tools for large tables. Always remove application-layer references before touching the database schema, not after.

For engineering managers: If your team’s database schema has a growing graveyard of unused columns, that’s a signal that your migration process is too costly relative to the benefit. Investing in tooling and process to make schema cleanup routine is cheaper than the eventual confusion caused by a schema nobody fully understands.

For system architects: The multi-step protocol required for safe column removal is a microcosm of a broader truth: in distributed systems, removals are harder than additions. Design your migration strategy with that asymmetry in mind from the beginning, not as an afterthought when cleanup is due.