The most dangerous software in the world is not the code that crashes. It is the code that keeps running.

Across power grids, hospital networks, water treatment facilities, and banking systems, there is software nobody owns, nobody fully understands, and nobody is paid to maintain. It works, in the narrow sense that it has not failed today. But it sits in a state of suspended obsolescence, carrying enormous risk while generating no line item on anyone’s budget that says “maintenance.”

This is not a hypothetical. It is the defining infrastructure debt of the computing era, and we are getting close to the point where the bill comes due.

The Economics Favor Neglect

Organizations make a rational choice when they stop maintaining legacy software. The system works. Replacing it costs millions and risks catastrophic failure during migration. The engineers who built it have retired or moved on. So the system stays, and the maintenance budget quietly disappears into other priorities.

This calculus makes sense at the individual level and is disastrous at the systemic level. COBOL is the clearest example. A language most computer science programs stopped teaching decades ago still runs an enormous share of global financial transactions. When U.S. states tried to process pandemic unemployment claims in 2020, many discovered their systems ran on COBOL code written in the 1970s, and there were not enough people who could modify it to handle the load. New Jersey’s governor publicly asked for COBOL volunteers.

That is not a legacy problem. That is a maintenance economics problem. The software was not maintained because maintaining it never appeared urgent, until suddenly it was the only thing that mattered.

The Open Source Amplifier

Closed legacy systems are dangerous because nobody touches them. Open source legacy systems carry a different risk: everyone depends on them, but almost nobody funds them.

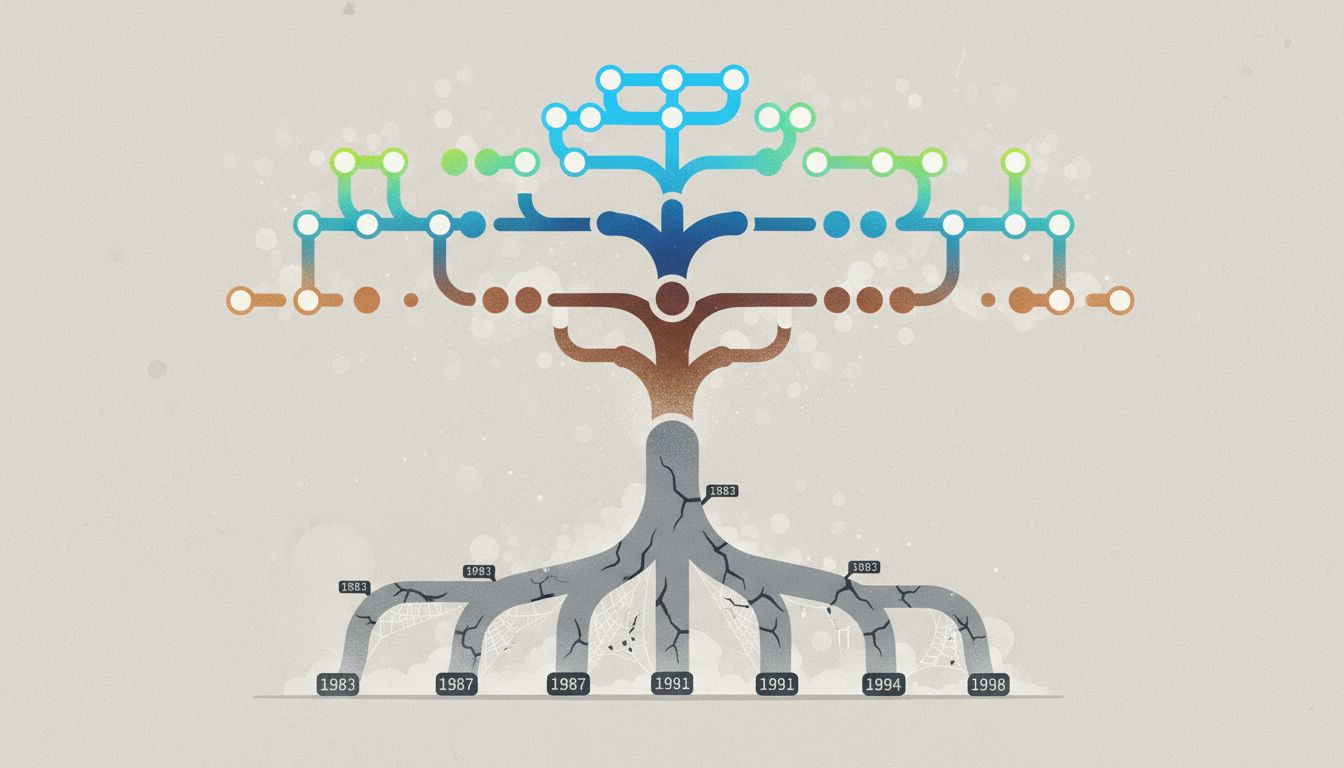

The 2021 Log4Shell vulnerability made this concrete. Log4j, a Java logging library embedded in software across governments, hospitals, and Fortune 500 companies, contained a critical remote code execution flaw (CVE-2021-44228). The library was maintained largely by a small number of volunteers. When the vulnerability was disclosed, organizations scrambled to find every system that used the library, a task complicated by the fact that many did not know they were running it at all.

Log4j is not an isolated case. It is the structure. The open source supply chain that modern software sits on top of is, in many places, maintained by a handful of unpaid contributors who could stop tomorrow. The software does not advertise this fragility. It just runs.

Visibility Is the Core Failure

The reason this problem persists is that unmaintained software does not announce itself. A server that is quietly vulnerable looks identical to one that is secure. A COBOL system processing payroll sends no signal that the last person who understood its edge cases retired in 2008.

Modern organizations have invested heavily in monitoring application performance. They have invested almost nothing in monitoring maintenance status. There is no standard metric for “how long since a qualified engineer reviewed this code” or “what percentage of this system’s dependencies have active maintainers.” Budget cycles reward shipping new features. They do not reward auditing whether the foundation those features sit on is still sound.
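Neither metric is hard to prototype once the underlying data exists. As a minimal sketch, assume an organization keeps a simple record for each dependency: its name, the date of its last upstream release, and a count of currently active maintainers. The `Dependency` record, the sample entries, and the two-year staleness threshold are all hypothetical choices for illustration, not an established standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Dependency:
    name: str
    last_release: date       # date of the most recent upstream release
    active_maintainers: int  # maintainers active in the review window


def maintained_share(deps, today, stale_after_days=730):
    """Return the fraction of dependencies that look actively maintained.

    A dependency counts as maintained if it has at least one active
    maintainer and a release within `stale_after_days`. Both thresholds
    are illustrative assumptions.
    """
    if not deps:
        return 0.0
    maintained = [
        d for d in deps
        if d.active_maintainers > 0
        and (today - d.last_release).days <= stale_after_days
    ]
    return len(maintained) / len(deps)


# Hypothetical inventory for a single system
deps = [
    Dependency("payroll-core", date(2009, 3, 1), 0),
    Dependency("logging-lib", date(2024, 1, 15), 2),
    Dependency("date-utils", date(2023, 11, 2), 1),
    Dependency("report-gen", date(2016, 6, 30), 1),
]
share = maintained_share(deps, today=date(2024, 6, 1))
print(f"{share:.0%} of dependencies look actively maintained")
```

The arithmetic is trivial. The hard part is the input: somebody has to be paid to collect and update those records, which is exactly the work that budgets tend to skip.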

Outsourcing patterns from the 1990s and 2000s compound the problem: critical systems were built by contractors who held the institutional knowledge and then left. The organization kept the software and lost the understanding.

The Counterargument

The reasonable pushback here is that old software is often more stable than new software, not less. Systems that have run for twenty years without failure have had their bugs discovered and fixed. They are not subject to the constant churn of new vulnerabilities introduced by rapid iteration. There is something to this. The instinct to leave working systems alone is not purely laziness.

But stability and security are different properties. A system can be stable, meaning it behaves predictably under normal conditions, while being deeply insecure, meaning it behaves unpredictably or harmfully when deliberately probed. Legacy systems are frequently both at once. The 2021 attack on a water treatment facility in Oldsmar, Florida, reportedly involved outdated software with known weaknesses that had simply never been addressed. The system was “stable” until someone tried to raise the sodium hydroxide levels to dangerous concentrations through a remote interface.

The argument for leaving working systems alone assumes that “working” means “safe.” It does not.

The Position

We treat physical infrastructure as a capital asset that depreciates on a predictable schedule. We do not treat the software running inside that infrastructure the same way. That inconsistency is going to produce failures, and some of those failures will be serious.

The fix is not purely technical. It is organizational and economic. Regulators in critical sectors need to require a software bill of materials (SBOM) for deployed systems, so that organizations at minimum know what they are running. Maintenance funding needs to become a line item with the same visibility as new development. The open source projects embedded in critical systems need sustainable funding models, not volunteer labor and donation buttons.
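To make the first of those concrete: once a software bill of materials exists, the question "are we running this library?" becomes a lookup rather than a scramble. The sketch below assumes an inventory in the CycloneDX JSON format, which lists software under a top-level `components` array. The inline document, the `billing-service` entry, and the `find_components` helper are hypothetical and exist only for illustration.

```python
import json

# A tiny inline inventory in the CycloneDX JSON shape (illustrative only;
# real ones are generated by build tooling and are far larger).
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "log4j-core", "version": "2.14.1"},
    {"type": "library", "name": "jackson-databind", "version": "2.13.0"},
    {"type": "application", "name": "billing-service", "version": "4.2.0"}
  ]
}
"""


def find_components(sbom, name_fragment):
    """Return (name, version) pairs whose name contains name_fragment.

    Only the top-level component list is searched; nested components
    are ignored in this sketch.
    """
    fragment = name_fragment.lower()
    return [
        (c.get("name", ""), c.get("version", "unknown"))
        for c in sbom.get("components", [])
        if fragment in c.get("name", "").lower()
    ]


sbom = json.loads(SBOM_JSON)
hits = find_components(sbom, "log4j")
print(hits)  # [('log4j-core', '2.14.1')]
```

A lookup like this only answers the question for systems that have an inventory in the first place, which is why the requirement matters more than the tooling.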

None of this is dramatic. None of it ships a product or wins a quarter. But the alternative is continuing to run civilization on code that nobody is home to answer for, and hoping the day it matters never comes.