The Disagreement Is the Signal

Ask GPT-4 when a particular regulation went into effect. Ask Claude the same question. If they give you different dates, your instinct might be to check which one is right. That instinct is correct, but it’s also incomplete. The disagreement itself carries information, and understanding what’s actually happening when two models diverge is more useful than just treating it as a coin flip you need to adjudicate.

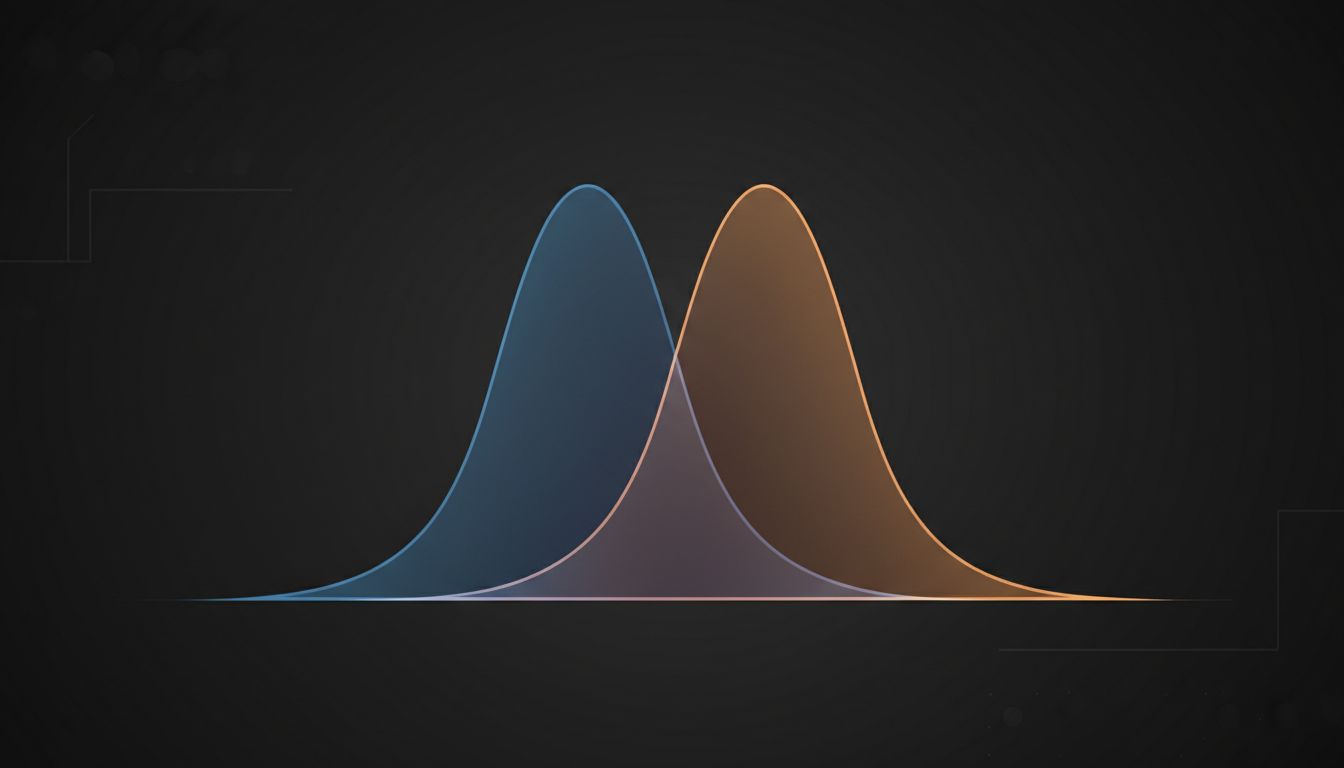

These models are not databases. They are not looking up facts from a shared ledger and returning them to you. They are probability distributions over text, and the fact that they happen to output something that looks like a specific date or number is a side effect of that, not the primary mechanism. When two models disagree, you are seeing two different distributions produce different high-probability outputs for the same query. That’s a fundamentally different situation from two witnesses disagreeing about what they saw.

Why Models Disagree Even When They Train on Overlapping Data

The short answer is that model outputs are shaped by far more than raw training data. Architecture matters. Fine-tuning matters. RLHF (reinforcement learning from human feedback, where human raters score model outputs to push behavior in certain directions) matters enormously. Two models can be trained on datasets with substantial overlap and still diverge on factual recall because they learned different internal representations of the same underlying information.

Consider a concrete case: a model trained primarily on web text from before a major scientific revision might have seen thousands of documents citing the old figure. A model with a later or differently curated dataset might have seen the corrected value more often. Neither model “knows” which is right in any meaningful sense. Each model’s output reflects which version of that fact appeared most frequently in contexts that its training procedure reinforced.

There is also the compression problem. A large language model stores information implicitly in billions of numerical weights, not as discrete retrievable records. When a model recalls a fact, it is reconstructing it from these compressed representations, which introduces noise. The noisier the reconstruction, the more a specific output depends on small perturbations in the input, like how you phrase the question. This is why asking the same model the same question with slightly different wording can produce different answers, and why two models, even very capable ones, can produce confidently different answers to the same precise question.
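One way to make this phrasing sensitivity visible is to ask the same question several ways and measure how often the answers match. The sketch below assumes you can wrap your model call in a function that takes a prompt and returns a string; `fake_model` is a hypothetical stand-in, not a real API, and the year values are made up for illustration.

```python
from collections import Counter
from typing import Callable, Iterable


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not counted as disagreement."""
    return " ".join(answer.lower().split())


def answer_consistency(ask: Callable[[str], str], phrasings: Iterable[str]) -> tuple[str, float]:
    """Return the most common answer and the fraction of phrasings that produced it."""
    answers = [normalize(ask(p)) for p in phrasings]
    if not answers:
        raise ValueError("need at least one phrasing")
    top, count = Counter(answers).most_common(1)[0]
    return top, count / len(answers)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; its answer depends on the wording."""
    return "2018" if "effect" in prompt else "2016"


phrasings = [
    "When did the rule take effect?",
    "What year did the rule go into effect?",
    "When was the rule adopted?",
]
top_answer, share = answer_consistency(fake_model, phrasings)
```

A low share for the top answer suggests the fact is being reconstructed unstably, which is a reason to verify it before comparing it against another model's output.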

Confidence Calibration Is a Separate Problem

Here is where it gets genuinely tricky. Both models might express high confidence. Modern LLMs are notoriously poorly calibrated on factual claims, meaning their expressed certainty does not reliably track their actual accuracy. A model that says “the answer is X” is not necessarily more likely to be right than one that says “I believe the answer is X, though I’m not entirely certain.” In fact, RLHF processes often reward confident-sounding outputs because human raters tend to prefer them, which can make models systematically overconfident.

This is not a fringe problem. Research on LLM calibration consistently shows that verbally expressed confidence from these models does not reliably correlate with accuracy on factual questions, particularly for obscure or highly specific facts. For common, well-represented facts, accuracy tends to be higher. For specific dates, names, technical specifications, or anything with low document frequency in the training corpus, models can be confidently wrong at rates that would be unacceptable if you treated them as authoritative sources.
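If you want to check calibration on your own questions, one common summary is expected calibration error: bucket answers by stated confidence and compare each bucket's average confidence to its actual accuracy. The sketch below assumes you have already turned each answer's confidence into a number between 0 and 1 (for example by asking the model for a probability) and graded it against a ground truth source; the sample records are invented purely for illustration.

```python
def expected_calibration_error(records, n_bins=5):
    """records: list of (confidence in [0, 1], was_correct) pairs.

    Returns the accuracy-vs-confidence gap, weighted by how many answers fall in each bin.
    """
    if not records:
        raise ValueError("need at least one record")
    bins = [[] for _ in range(n_bins)]
    for conf, correct in records:
        # Clamp so that a confidence of exactly 1.0 lands in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, correct))

    total = len(records)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


# Illustrative data only: four answers stated at 90% confidence, one of them correct.
overconfident = [(0.9, True), (0.9, False), (0.9, False), (0.9, False)]
gap = expected_calibration_error(overconfident)
```

A gap near zero means stated confidence tracks accuracy; a large gap on obscure facts is the pattern described above.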

The practical implication is that when two models disagree, you cannot resolve it by asking each model how confident it is. You need a ground truth source. The disagreement is best understood as a flag that this particular claim is underrepresented in at least one model’s training data, or reconstructed inconsistently, or genuinely disputed in the source material itself.

What the Disagreement Tells You About the Fact Itself

This is the more interesting angle. Consistent disagreement between models on a specific class of questions often reflects something real about the underlying data landscape. If models trained on different corpora consistently disagree about the timeline of some historical event, there is a decent chance that the source documents themselves are inconsistent, or that the event is genuinely contested among historians, or that a revision happened close to one model’s training cutoff.

In this sense, model disagreement can function as a rough signal of epistemic uncertainty in the world, not just in the models. It is a noisy signal, but it is a signal. If you are building a system that uses multiple models and you notice they systematically disagree on a domain, that is worth investigating, because the domain itself might contain genuinely contested information.

This maps to something developers know well from working with databases: inconsistency between replicas is not just an operational nuisance, it is a symptom. The cause might be a replication lag, a conflicting write, or a schema mismatch, but the disagreement tells you to go look. Model disagreement deserves the same diagnostic instinct.
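A minimal sketch of that diagnostic, assuming you already log both models' answers along with a domain label for each question (the row format and the domain names here are hypothetical):

```python
from collections import defaultdict


def disagreement_by_domain(rows):
    """rows: iterable of (domain, answer_a, answer_b). Returns domain -> disagreement rate."""
    counts = defaultdict(lambda: [0, 0])  # domain -> [disagreements, total]
    for domain, a, b in rows:
        counts[domain][1] += 1
        # Crude comparison; real answers usually need smarter normalization.
        if a.strip().lower() != b.strip().lower():
            counts[domain][0] += 1
    return {d: dis / total for d, (dis, total) in counts.items()}


# Illustrative log entries, not real model outputs.
log = [
    ("regulation_dates", "2018", "2016"),
    ("regulation_dates", "2020", "2021"),
    ("regulation_dates", "2015", "2015"),
    ("unit_conversions", "2.54 cm", "2.54 cm"),
    ("unit_conversions", "1.609 km", "1.609 km"),
]
rates = disagreement_by_domain(log)
```

Domains whose rate sits well above the rest are the ones worth checking against source material first.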

Building Systems That Handle This Gracefully

If you are shipping anything where factual accuracy matters, “ask one model and trust it” is not an architecture. Neither is “ask two models and trust whatever they agree on.” Two models with similar training pipelines will have correlated failure modes, meaning they will be wrong about the same things in similar ways. Agreement between them is not strong evidence of correctness.
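To see why correlation weakens agreement as evidence, here is a toy calculation. It is a deliberately simplified model, not a measurement of any real system: each model is right with probability `p`; with probability `rho` the second model simply shares the first model's outcome, and otherwise it answers independently; two independent wrong answers coincide with probability `w`. All three parameters are assumptions chosen for illustration.

```python
def p_correct_given_agreement(p: float, rho: float, w: float) -> float:
    """Probability that an agreed-upon answer is correct under the toy model above."""
    # Both correct: either the outcome is shared and right, or both are independently right.
    both_correct = rho * p + (1 - rho) * p * p
    # Both wrong with the same answer: shared mistake, or two independent mistakes that coincide.
    both_wrong_same = rho * (1 - p) + (1 - rho) * (1 - p) ** 2 * w
    return both_correct / (both_correct + both_wrong_same)


independent = p_correct_given_agreement(p=0.8, rho=0.0, w=0.25)
partly_correlated = p_correct_given_agreement(p=0.8, rho=0.5, w=0.25)
fully_correlated = p_correct_given_agreement(p=0.8, rho=1.0, w=0.25)
```

With these numbers, agreement between independent models implies roughly a 0.98 chance of being right, about 0.87 at `rho = 0.5`, and exactly 0.8 when the models are fully correlated, which is no better than asking one model.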

Better patterns include using models for generation while routing factual claims through a retrieval system that pulls from a source of record. Retrieval-augmented generation (RAG, where the model is given actual retrieved documents to ground its response rather than relying purely on training) substantially reduces this class of error by anchoring outputs to verifiable text. Disagreement between a model’s prior and a retrieved document is, again, a useful signal rather than a tie to break arbitrarily.
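As a rough sketch of that last point, the function below prefers the retrieved value but records whether the model's own answer disagreed with it, so the conflict can be logged or surfaced instead of silently discarded. The `GroundedAnswer` type and its field names are hypothetical, and the string comparison stands in for whatever matching your retrieval pipeline actually needs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GroundedAnswer:
    value: str      # what the system will report
    source: str     # "retrieved" or "model_prior"
    conflict: bool  # True when the model's prior and the retrieved value differ


def ground(prior: str, retrieved: Optional[str]) -> GroundedAnswer:
    """Prefer the retrieved value and keep track of whether the prior disagreed with it."""
    if retrieved is None:
        # Nothing was retrieved: fall back to the prior, labeled as ungrounded.
        return GroundedAnswer(value=prior, source="model_prior", conflict=False)
    conflict = prior.strip().lower() != retrieved.strip().lower()
    return GroundedAnswer(value=retrieved, source="retrieved", conflict=conflict)


# Illustrative values only.
result = ground(prior="2016", retrieved="2018")
```

Keeping the `conflict` flag around lets you count how often the prior is overruled, which tells you where the model is least reliable without retrieval.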

For higher-stakes applications, you want the system to surface uncertainty rather than paper over it. A response that says “sources disagree on this point” is more honest and ultimately more useful than one that picks an answer with false confidence. This requires explicit design work, because models default toward producing confident-sounding outputs. Prompt design can push against this tendency, though you are working against strong prior behaviors baked in during training.

The cleanest mental model is this: treat model outputs as hypotheses, not facts. Disagreement between models is evidence that a hypothesis is underspecified. Your job as the engineer or product designer is to decide what happens next when a hypothesis fails to reach consensus, and to build that decision into the system explicitly rather than hoping the problem doesn’t surface in production.
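Here is one minimal way to make that decision explicit in code. It is a sketch under simple assumptions: answers are short strings keyed by a model name, exact match after normalization counts as agreement, and the model names and output wording are placeholders you would replace with your own policy.

```python
from enum import Enum


class Outcome(Enum):
    CONSENSUS = "consensus"
    DISPUTED = "disputed"


def evaluate_hypotheses(answers: dict[str, str]) -> tuple[Outcome, dict[str, list[str]]]:
    """Group model names by the answer they gave; consensus only if every model agrees."""
    if not answers:
        raise ValueError("need at least one model answer")
    groups: dict[str, list[str]] = {}
    for model, answer in answers.items():
        groups.setdefault(answer.strip().lower(), []).append(model)
    outcome = Outcome.CONSENSUS if len(groups) == 1 else Outcome.DISPUTED
    return outcome, groups


def render(answers: dict[str, str]) -> str:
    """Turn the hypotheses into user-facing text that never hides a disagreement."""
    outcome, groups = evaluate_hypotheses(answers)
    if outcome is Outcome.CONSENSUS:
        value = next(iter(groups))
        # Agreement is weak evidence, so the answer is still labeled as unverified.
        return f"{value} (unverified: models agree, no source of record checked)"
    parts = "; ".join(f"{value} ({', '.join(models)})" for value, models in groups.items())
    return f"Sources disagree on this point: {parts}"


# Hypothetical model names and answers.
message = render({"model_a": "2018", "model_b": "2016"})
```

The point of the design is that the disputed branch exists at all: the system has a defined behavior for failed consensus rather than quietly returning whichever answer came back first.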