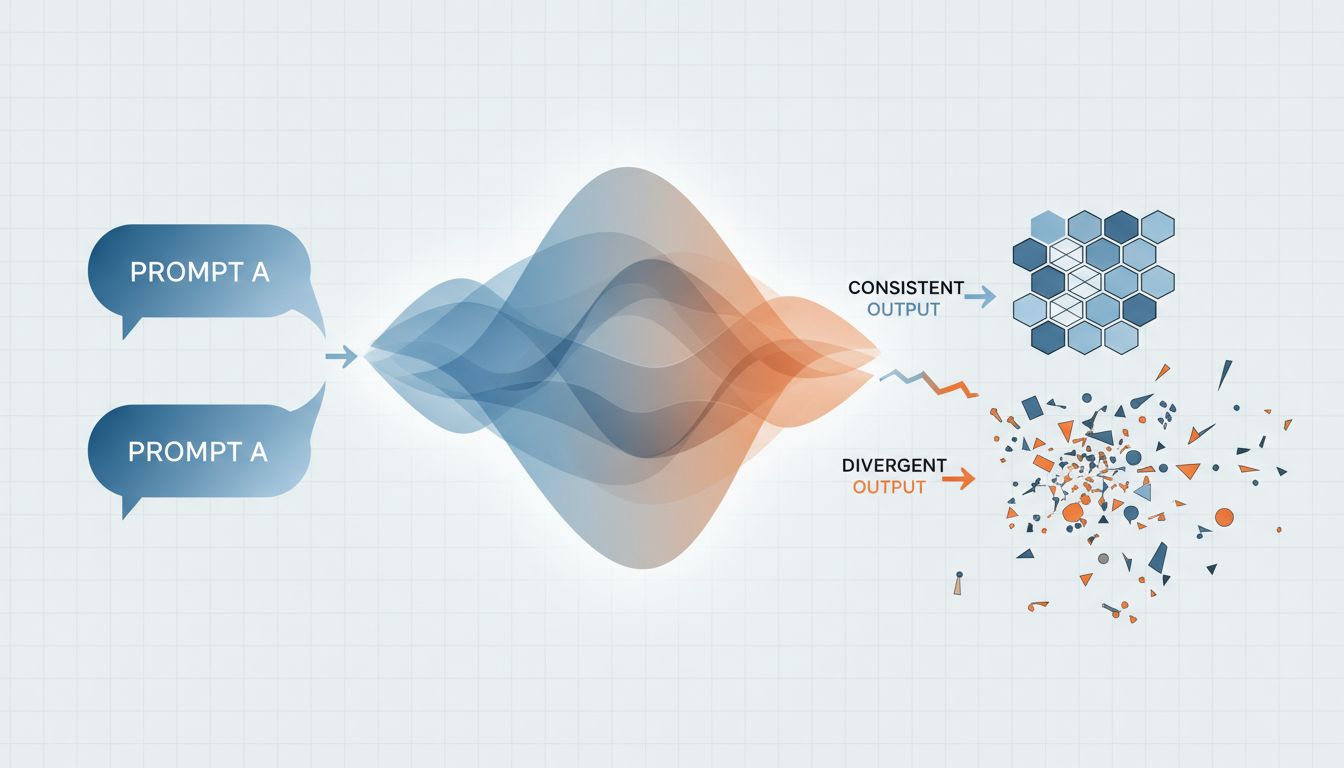

The simple version: Large language models generate text by sampling from a probability distribution, not by retrieving a stored answer. Every run is a fresh draw from that distribution, which means identical inputs can produce different outputs.

If you’ve used an LLM seriously for more than a few days, you’ve hit this wall. You craft a prompt, get a genuinely useful response, copy the prompt somewhere safe, run it again an hour later, and get something subtly wrong or completely off. Nothing changed on your end. So what happened?

The Model Is Not a Calculator

A calculator given 4 + 4 will return 8 every single time. That’s deterministic behavior: same input, same output, guaranteed. LLMs don’t work that way.

When a model generates text, it doesn’t look up the right answer. It builds a response token by token, and at each step it’s choosing from a weighted probability distribution over its entire vocabulary. The word “Paris” might have a 73% probability, “London” a 12% probability, “Rome” an 8% probability, and so on. The model samples from that distribution rather than always picking the top option.
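To make the difference between sampling and always taking the top option concrete, here is a minimal sketch using the illustrative probabilities from the paragraph above. The `<other>` bucket and the function names are assumptions added for the example; a real model works over tens of thousands of tokens, not four.

```python
import random

# Illustrative next-token probabilities from the example above.
# "<other>" is an assumed bucket for the remaining 7% of the vocabulary.
next_token_probs = {"Paris": 0.73, "London": 0.12, "Rome": 0.08, "<other>": 0.07}

def sample_token(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]

def greedy_token(probs):
    """Always pick the single most likely token."""
    return max(probs, key=probs.get)

rng = random.Random()  # unseeded, so each run of the script draws differently
draws = [sample_token(next_token_probs, rng) for _ in range(10)]
print(draws)                           # mostly "Paris", but not always
print(greedy_token(next_token_probs))  # the same token on every run
```

Run it a few times: the greedy line never changes, while the list of sampled draws does. That is the same effect you see when an identical prompt returns a different answer.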

This sampling behavior is controlled by a parameter called temperature. At temperature 0, the model always picks the highest-probability token, which makes the output as repeatable as it can be (hosted APIs can still show small run-to-run differences caused by floating-point and batching effects). At higher temperatures, it samples more broadly, introducing more variety and occasionally more creativity, but also more unpredictability. Most production APIs default to something above zero.
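A common way to implement temperature is to divide the model’s raw scores (logits) by the temperature before converting them to probabilities. The sketch below assumes that formulation; the logit values are made up for illustration, and temperature 0 is treated as the limiting case where all probability goes to the top token.

```python
import math

def apply_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature == 0:
        # Limiting case: all probability mass goes to the top-scoring token.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
for t in (0, 0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in apply_temperature(logits, t)])
```

Lower temperatures concentrate probability on the top token; higher temperatures spread it across the alternatives, which is where the extra variety comes from.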

There’s also a related parameter called top-p (also known as nucleus sampling), which restricts sampling to the smallest set of most likely tokens whose combined probability reaches a chosen threshold. Both parameters shape how adventurous the model is at each step. The article “What an LLM actually does between prompt and reply” covers the mechanics in more detail if you want to trace the full path from input to output.
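The top-p cutoff can be sketched in a few lines. This is a simplified illustration that reuses the example probabilities from earlier, not any provider’s actual implementation; `top_p_filter` is a name invented for the example.

```python
def top_p_filter(probs, top_p):
    """Keep the smallest set of most likely tokens whose total probability reaches top_p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept = {}
    cumulative = 0.0
    for token, p in ranked:
        kept[token] = p
        cumulative += p
        if cumulative >= top_p:
            break
    # Renormalize so the surviving probabilities sum to 1 again.
    return {token: p / cumulative for token, p in kept.items()}

probs = {"Paris": 0.73, "London": 0.12, "Rome": 0.08, "<other>": 0.07}
print(top_p_filter(probs, 0.8))  # keeps Paris and London only
print(top_p_filter(probs, 0.5))  # keeps Paris only
```

With a threshold of 0.8, “Rome” and everything below it can no longer be sampled at all, which is why lowering top-p reduces variety.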

Small Wording Changes Have Outsized Effects

Even if you pin temperature to zero, you’re not in the clear. The model’s probability distribution is exquisitely sensitive to the exact tokens it receives. A period versus a comma. “List” versus “enumerate.” “You are a helpful assistant” versus “You are an expert assistant.” These aren’t cosmetic differences to the model; each one changes the probabilities it assigns to every token that follows.

Researchers at Stanford and elsewhere have published work showing that trivial prompt variations, things a human would consider synonymous, can flip model outputs from correct to incorrect on reasoning benchmarks. A prompt that scores well on one phrasing scores significantly worse on a near-identical rephrasing. The model isn’t being perverse; it’s responding to genuine statistical patterns it learned from training data, patterns that happen to be invisible to humans reading the text.

This is part of why prompt engineering as a discipline exists at all. You’re not discovering the “right” prompt in some absolute sense. You’re finding a prompt that consistently steers the model into high-probability regions that produce what you want. “Prompt engineering is just begging an LLM to behave” is a fair characterization of what that process often feels like in practice.

Context Windows and Order Effects

Another underappreciated source of inconsistency is what else is in the context window. The model doesn’t process your prompt in isolation. It processes your prompt plus everything else in the conversation: the system prompt, previous messages, and any injected documents. All of that shifts the probability distribution for the next token.

This creates order effects that can be surprisingly strong. Studies on in-context learning have found that the order in which examples are presented in a prompt meaningfully affects accuracy, sometimes by double-digit percentage points. Put your best example first or last and you get different results. Add a sentence of small talk before your actual request and the distribution shifts slightly. Append a trailing newline and it shifts again.
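One way to find out whether a prompt is sensitive to example order is to generate every ordering and evaluate each one. The sketch below only builds the prompt variants; the sentiment examples, the `Review:`/`Sentiment:` layout, and the function name are all hypothetical, and sending the variants to a model is left to whatever client you use.

```python
from itertools import permutations

# Hypothetical few-shot examples for a sentiment task.
examples = [
    ("The food was wonderful.", "positive"),
    ("I waited an hour and left.", "negative"),
    ("It was fine, nothing special.", "neutral"),
]

def build_prompt(ordered_examples, query):
    """Format the examples in the given order, followed by the real question."""
    blocks = [f"Review: {text}\nSentiment: {label}" for text, label in ordered_examples]
    blocks.append(f"Review: {query}\nSentiment:")
    return "\n\n".join(blocks)

query = "Great service, terrible parking."
variants = [build_prompt(order, query) for order in permutations(examples)]
print(len(variants))  # one prompt per ordering of the three examples
print(variants[0])
```

If accuracy on your test set swings noticeably between variants, the prompt is leaning on example order rather than on the examples themselves.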

For use cases where you’re building something on top of an API, this is a practical engineering problem. A customer support chatbot that accumulates 20 turns of conversation history is operating in a very different context than the same chatbot at turn 2. The prompt that worked in your testing environment (short context, controlled inputs) may degrade in production simply because real users generate messier, longer histories.

Why Model Updates Are Silent Killers

There’s a final category of failure that catches even careful engineers off guard: the model itself changed.

Major AI providers update their models continuously. OpenAI, Anthropic, and Google all push improvements to their production endpoints, and the versioning story is often murky. If you’re calling gpt-4 rather than a pinned version like gpt-4-0613, you may be talking to a different model than you were last month. The interface is identical. The behavior isn’t.

Researchers at Stanford and UC Berkeley documented a case where GPT-4’s performance on certain tasks measurably shifted between its March 2023 and June 2023 versions, a finding that prompted significant debate about what “improving” a model actually means when different capabilities can improve and regress simultaneously. If your prompt was calibrated to one version’s quirks, a silent update can break it without any error message or changelog notification.

The practical fix here is straightforward: pin to specific model versions in production, treat model updates as dependency upgrades that need testing, and keep a small regression suite of prompts with known-good outputs that you can run after any infrastructure change.
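A regression suite for prompts can be very small. The sketch below assumes a `call_model(model, prompt)` function that wraps your provider’s client; that function, the test cases, and the canned responses are all hypothetical stand-ins so the example runs offline.

```python
# Pinned snapshot named in the text, rather than the floating "gpt-4" alias.
PINNED_MODEL = "gpt-4-0613"

# Hypothetical regression cases: a prompt plus a check its output must pass.
REGRESSION_CASES = [
    {
        "prompt": "What is the capital of France? Answer in one word.",
        "check": lambda out: out.strip().rstrip(".").lower() == "paris",
    },
    {
        "prompt": "Reply with the single word OK.",
        "check": lambda out: out.strip().upper() == "OK",
    },
]

def fake_call_model(model, prompt):
    """Stand-in for a real API client so the sketch runs without network access."""
    canned = {
        "What is the capital of France? Answer in one word.": "Paris.",
        "Reply with the single word OK.": "OK",
    }
    return canned.get(prompt, "")

def run_regression(call_model, model, cases):
    """Return the prompts whose outputs no longer pass their checks."""
    failures = []
    for case in cases:
        output = call_model(model, case["prompt"])
        if not case["check"](output):
            failures.append(case["prompt"])
    return failures

failures = run_regression(fake_call_model, PINNED_MODEL, REGRESSION_CASES)
print("failures:", failures)
```

Checks that test a property of the output (format, key fact, length) tend to survive harmless wording changes better than exact string comparisons. Swap `fake_call_model` for your real client and run the suite whenever the model version or surrounding infrastructure changes.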

What To Do About It

None of this means LLMs are unreliable tools. It means they’re probabilistic tools, and building on them requires the same discipline you’d apply to any non-deterministic system.

A few things that actually help: Set temperature to 0 for tasks where consistency matters more than creativity. Write prompts that constrain the output format explicitly, because structure reduces the degrees of freedom the model is sampling over. Test prompts across multiple runs before trusting them, not just once. Version your prompts the same way you version code. And if you’re building a product, pin your model version.
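Testing across multiple runs can be as simple as calling the model repeatedly and counting how often the answers agree. In this sketch, `generate` is any function that takes a prompt and returns text; `noisy_model` and `stable_model` are made-up stand-ins for a real API call.

```python
import random
from collections import Counter

def consistency_report(generate, prompt, runs=20):
    """Call generate(prompt) repeatedly and summarize how often outputs agree."""
    counts = Counter(generate(prompt) for _ in range(runs))
    top_output, top_count = counts.most_common(1)[0]
    return {
        "distinct_outputs": len(counts),
        "agreement": top_count / runs,  # share of runs that gave the most common answer
        "most_common": top_output,
    }

_rng = random.Random()

def noisy_model(prompt):
    """Hypothetical model that answers correctly about 90% of the time."""
    return _rng.choices(["Paris", "London"], weights=[0.9, 0.1], k=1)[0]

def stable_model(prompt):
    """Hypothetical model that always gives the same answer."""
    return "Paris"

print(consistency_report(stable_model, "Capital of France?"))
print(consistency_report(noisy_model, "Capital of France?"))
```

An agreement rate well below 1.0 on a task that should have one answer is a sign the prompt needs tighter constraints before you rely on it.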

The underlying issue won’t go away. Sampling from a probability distribution is fundamental to how these models work, not a bug waiting to be fixed. Understanding that is the difference between being frustrated by inconsistency and being able to engineer around it.