Most teams using LLMs in production are solving a logistics problem while thinking they’re solving an intelligence problem. The context window looks like memory. It isn’t. It’s a billing unit with cognitive quirks attached, and not understanding that distinction is costing teams real money and producing worse results than they’d get with a more deliberate approach.

Here are the things worth getting right.

1. The Context Window Is Not Working Memory

When developers first encounter context windows, the mental model that snaps into place is RAM. You load stuff in, the model has access to it, reasoning happens. That framing is wrong in a way that matters.

LLMs don’t retrieve information from context the way a program retrieves a variable. They attend to it, which means their sensitivity to what’s in context is uneven, positional, and influenced by how similar the content is to what they were trained on. Research on “lost in the middle” effects, including the 2023 paper “Lost in the Middle: How Language Models Use Long Contexts” from researchers at Stanford and collaborating institutions, found that models perform significantly worse at using information placed in the middle of long contexts than information placed at the beginning or end. Stuffing a 100,000-token window and expecting uniform access to everything sounds reasonable but is an empirically bad strategy.

This matters practically. If you’re building a RAG pipeline and you’re pulling the top 20 retrieved chunks because the window can technically hold them, you may be actively hurting response quality while paying for more tokens.
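One way to act on the positional effect is to keep fewer chunks and put the strongest ones at the edges of the context rather than in the middle. The sketch below assumes your retriever already returns chunks sorted from most to least relevant; the function name `order_for_edges` and the `keep` parameter are illustrative, not part of any library.

```python
def order_for_edges(chunks_by_relevance, keep=6):
    """Keep the top `keep` chunks and push the weakest toward the middle.

    Assumes `chunks_by_relevance` is sorted best-first.
    """
    top = chunks_by_relevance[:keep]
    front, back = [], []
    for rank, chunk in enumerate(top):
        # Alternate placement: rank 0 to the front, rank 1 to the back, etc.
        (front if rank % 2 == 0 else back).append(chunk)
    # Reverse the back half so the second-best chunk ends up last.
    return front + back[::-1]


ranked = ["c1", "c2", "c3", "c4", "c5", "c6", "c7", "c8"]
print(order_for_edges(ranked, keep=5))
```

Whether this helps depends on the model and the task, so treat it as something to measure on your own inputs rather than a guaranteed win.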

2. Longer Prompts Often Produce Worse Outputs

There’s a real temptation to give the model more context because more context feels like more information and more information feels like it should produce better answers. For some tasks this is true. For many it isn’t.

Models can get confused by contradictory information spread across a long prompt. They can latch onto earlier framing in ways that make them less responsive to instructions buried further in. They can produce verbose, hedging outputs because the context itself is verbose and hedging. If you’re seeing outputs that feel unfocused or generic, prompt length is a legitimate suspect alongside prompt wording.

A practical test: take your current prompt and cut it by 30%. Run both versions on the same set of inputs and compare outputs. Many teams find the shorter version performs comparably or better, and costs meaningfully less.
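A comparison like this can be a very small harness. The sketch below is one possible shape, not a standard tool: `call_model` and `score` are placeholders you would supply (your API client and whatever quality metric you trust), and `estimate_tokens` uses a crude four-characters-per-token assumption that you should replace with your provider’s tokenizer.

```python
def estimate_tokens(text):
    # Rough assumption: about 4 characters per token for English text.
    return max(1, len(text) // 4)


def compare_prompts(long_prompt, short_prompt, inputs, call_model, score):
    """Run both prompts over the same inputs and report mean score and size."""
    if not inputs:
        raise ValueError("need at least one input to compare prompts")
    report = {}
    for name, prompt in (("long", long_prompt), ("short", short_prompt)):
        scores = [score(x, call_model(prompt, x)) for x in inputs]
        report[name] = {
            "mean_score": sum(scores) / len(scores),
            "prompt_tokens": estimate_tokens(prompt),
        }
    return report


# Stand-ins so the sketch runs without an API key (both are hypothetical).
expected = {"  YES ": "yes", "No": "no"}

def fake_model(prompt, user_input):
    return user_input.strip().lower()

def exact_match(user_input, output):
    return 1.0 if output == expected[user_input] else 0.0

long_prompt = "You are a careful assistant. " * 20
short_prompt = "Answer yes or no."
print(compare_prompts(long_prompt, short_prompt, list(expected), fake_model, exact_match))
```

The point of the harness is that both prompts see identical inputs, so any difference in score or token count comes from the prompt itself.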

3. You’re Paying Per Token on Both Sides

Input tokens and output tokens are both billed, but teams tend to focus on output length as the cost driver. Input is often where the real money goes. A system prompt that’s 3,000 tokens, sent with every single API call across thousands of daily requests, adds up fast. The per-token cost looks trivial until you do the multiplication.
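Here is the multiplication, as a sketch. The 3,000-token system prompt comes from the example above; the request volume (5,000 per day) and the price ($3 per million input tokens) are assumptions for illustration only, so substitute your own traffic and your provider’s current rate.

```python
def monthly_input_cost(prompt_tokens, requests_per_day,
                       usd_per_million_input_tokens, days=30):
    """Cost of resending the same prompt tokens on every request."""
    tokens_per_month = prompt_tokens * requests_per_day * days
    return tokens_per_month * usd_per_million_input_tokens / 1_000_000


# Assumed numbers: 5,000 requests/day at $3 per million input tokens.
current = monthly_input_cost(3_000, 5_000, 3.0)
trimmed = monthly_input_cost(1_800, 5_000, 3.0)  # same prompt cut by 40%
print(f"current: ${current:,.2f}/month")
print(f"trimmed: ${trimmed:,.2f}/month")
print(f"saved:   ${current - trimmed:,.2f}/month")
```

Under these assumed figures the system prompt alone costs $1,350 a month before a single user message or output token is counted.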

Compressing your system prompt is one of the highest-leverage optimizations available to you. Write it like you’re paying for every word, because you are. Remove examples that aren’t pulling weight. Remove instructions the model follows reliably without being told. Audit it the way you’d audit a cloud bill.

This also applies to conversation history. Many implementations append the full conversation history to every call. That means a ten-turn conversation might include 8,000 tokens of history even when only the last two turns are relevant to the current question. Selective context pruning, where you summarize older turns or drop low-signal exchanges, can cut costs substantially without degrading quality.
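A minimal sketch of that pruning step follows. It assumes messages are dicts with `role` and `content` keys, a common chat format but not a universal one, and that `summarize` is a function you provide (for example, a cheap model call). Both the function name `prune_history` and its parameters are illustrative.

```python
def prune_history(messages, keep_last=4, summarize=None):
    """Keep the most recent messages verbatim; compress or drop the rest."""
    split = max(0, len(messages) - keep_last)
    older, recent = messages[:split], messages[split:]
    if not older:
        return list(messages)
    if summarize is None:
        # No summarizer supplied: simply drop the older turns.
        return list(recent)
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + list(recent)


history = [{"role": "user" if i % 2 == 0 else "assistant",
            "content": f"message {i}"} for i in range(10)]

# Hypothetical summarizer; a real one would call a model.
def count_summary(older):
    return f"{len(older)} earlier messages omitted."

for message in prune_history(history, keep_last=2, summarize=count_summary):
    print(message)
```

Summarizing costs tokens too, so it tends to pay off only when the summary is reused across several later turns rather than regenerated on every call.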

4. Retrieval Is Not a Substitute for Curation

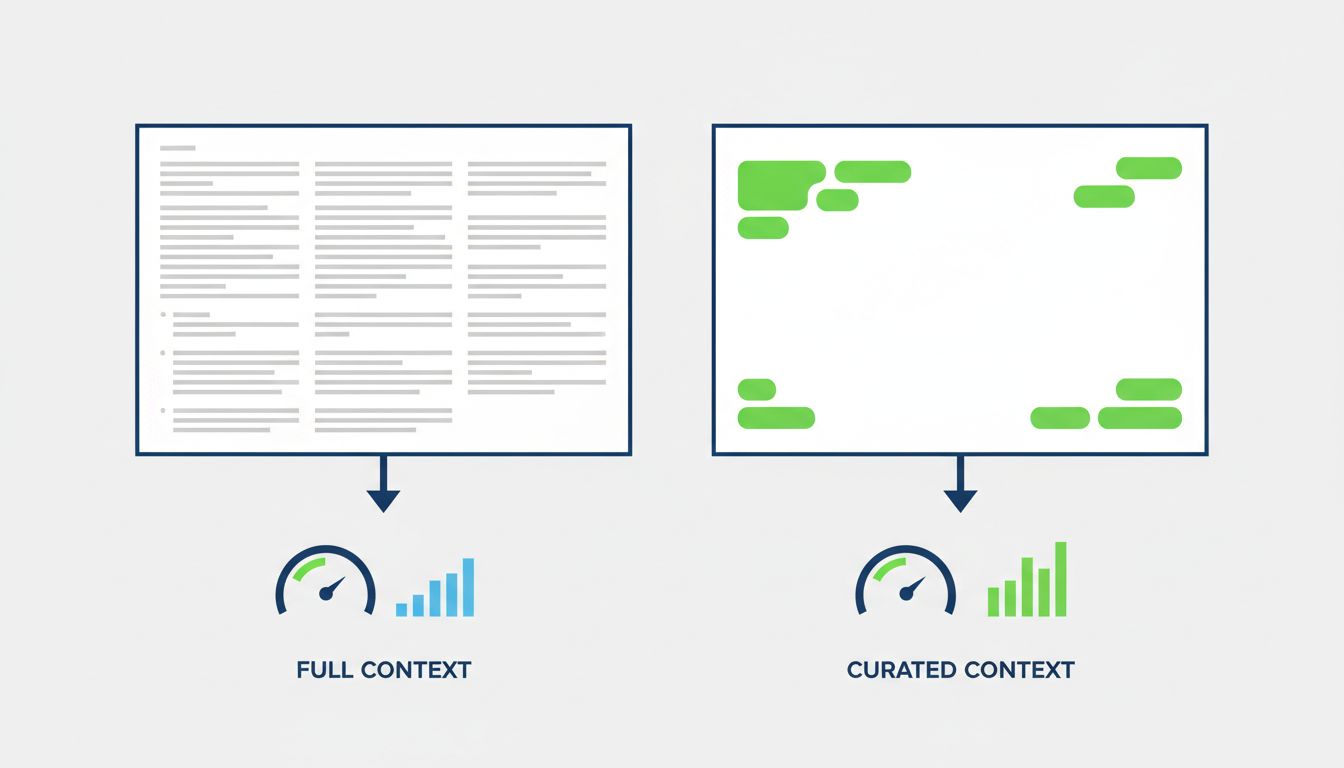

RAG (retrieval-augmented generation) became popular as a way to give models access to knowledge beyond their training data, and it works. But teams often implement it as “retrieve more and let the model figure it out,” which is exactly the wrong approach.

Your vector database retrieves chunks that sit close to the query in embedding space. That’s not the same as relevant to the question, and it’s definitely not the same as useful for answering it. Similarity and usefulness diverge more often than you’d expect. The model then gets 15 chunks of varying relevance, some of which contradict each other, and is expected to synthesize an answer.

Better retrieval pipelines treat the retrieved chunks as candidates, not as context. They score for relevance beyond embedding similarity, they apply re-ranking, and they pass only the top two or three chunks that are genuinely load-bearing for the query. This produces better answers at lower cost. The work is in the retrieval layer, not in asking the model to do more with more.
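The candidates-then-filter idea can be sketched in a few lines. Everything here is illustrative: `select_context` is a made-up name, the `min_score` threshold is an assumption you would tune, and `keyword_overlap` is a toy stand-in for a real re-ranker such as a cross-encoder or a model-based relevance judge.

```python
def select_context(query, candidates, rerank_score, top_k=3, min_score=0.5):
    """Re-score retrieved candidates and keep only the few that clear a bar."""
    scored = [(rerank_score(query, chunk), chunk) for chunk in candidates]
    kept = [pair for pair in scored if pair[0] >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in kept[:top_k]]


def keyword_overlap(query, chunk):
    # Toy relevance score: fraction of query words that appear in the chunk.
    q = set(query.lower().split())
    c = set(chunk.lower().split())
    return len(q & c) / len(q) if q else 0.0


candidates = [
    "Password rules require ten characters",
    "To reset your password click the email link",
    "Billing email settings",
    "Email us to reset hardware",
]
print(select_context("reset password email", candidates, keyword_overlap))
```

Note that the function can return fewer than `top_k` chunks, or none at all. Passing nothing is often better than padding the context with chunks that did not clear the bar.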

5. Long Context Is a Feature, Not a Default Setting

Claude’s 200,000-token context window and GPT-4 Turbo’s 128,000-token window are remarkable capabilities. They’re appropriate for specific tasks: analyzing a long document, reasoning across a full codebase, processing an entire meeting transcript. They’re not appropriate as a default architecture, adopted because you haven’t thought hard about what actually needs to be in context.

Treat large context as a tool you reach for deliberately, not as a permission slip to stop thinking about what your model actually needs. Most conversational applications don’t require more than a few thousand tokens of well-chosen context. Most document Q&A tasks don’t require feeding in the whole document if retrieval is done properly.

The teams getting the most out of LLMs aren’t the ones with the biggest context windows. They’re the ones who are precise about what goes in, where it goes, and why. That precision is what separates a useful, cost-efficient AI feature from an expensive one that produces mediocre results and gradually gets defunded.

Understanding what the model is actually doing with your tokens, rather than assuming it works like a database or a human reading a document, is the prerequisite for building anything that holds up under real usage.