The Wrong Explanation You’ve Already Heard

If you’ve read any introduction to embeddings, you’ve probably seen something like this: “Embeddings convert text into numbers so computers can understand it.” That sentence is technically defensible and almost completely useless. It describes the output without touching the mechanism, which is the part that actually matters.

Here’s a better starting point. An embedding is a learned compression of meaning into a fixed-length vector of floating-point numbers, where the geometry of the vector space encodes semantic relationships. Words (or sentences, images, users, products) that mean similar things end up close to each other in that space. Words that mean different things end up far apart. The distance and direction between vectors are meaningful.
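To make “close to each other” concrete: a common way to measure closeness between embeddings is cosine similarity, the cosine of the angle between two vectors. The sketch below uses tiny hand-picked 4-dimensional vectors. The words and values are hypothetical, not output from any model, and serve only to show the calculation.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between a and b: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 4-dimensional vectors, hand-picked for illustration only.
cat = [0.9, 0.8, 0.1, 0.0]
kitten = [0.85, 0.9, 0.15, 0.05]
invoice = [0.0, 0.1, 0.9, 0.8]

print(round(cosine_similarity(cat, kitten), 3))   # high: similar direction
print(round(cosine_similarity(cat, invoice), 3))  # low: mostly different directions
```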

That’s not just turning words into numbers. That’s encoding the structure of human concepts into the structure of mathematics.

Why Geometry?

The thing that trips people up is that this is a spatial idea, not a computational one. You’re not converting text so a computer can “process” it in some vague sense. You’re placing concepts into a space where mathematical operations correspond to semantic operations.

The classic demonstration is word2vec, the 2013 paper from Tomas Mikolov and colleagues at Google. They trained a model on a large text corpus, and the resulting vectors had a remarkable property: king - man + woman ≈ queen. The vector arithmetic worked. Subtract the “maleness” direction, add the “femaleness” direction, and you land near the correct concept.
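The arithmetic itself is simple. The sketch below uses hypothetical 3-dimensional vectors with hand-assigned axes (royalty, “male,” “female”), unlike word2vec’s learned dimensions. Following the usual analogy evaluation, it excludes the three input words from the candidates.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


# Hypothetical toy vectors with hand-assigned axes: (royalty, "male", "female").
vectors = {
    "king": [0.95, 0.90, 0.05],
    "queen": [0.95, 0.05, 0.90],
    "man": [0.05, 0.90, 0.05],
    "woman": [0.05, 0.05, 0.90],
    "apple": [0.00, 0.10, 0.10],
}


def analogy(a, b, c, vocab):
    """Return the word whose vector is closest to vocab[a] - vocab[b] + vocab[c]."""
    target = [x - y + z for x, y, z in zip(vocab[a], vocab[b], vocab[c])]
    candidates = [w for w in vocab if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(vocab[w], target))


print(analogy("king", "man", "woman", vectors))  # → queen
```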

This wasn’t a party trick they engineered. It fell out of the training process. The model learned to distribute meaning across dimensions in a way that preserved relational structure. Nobody told it that royalty and gender were separate axes. It figured that out from the statistics of how words appeared near each other.

That’s the thing worth sitting with. The geometry isn’t imposed on the data. It emerges from it.
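word2vec learns its vectors with a neural network. The underlying signal, which words appear near which, also shows up in a much simpler count-based method. The sketch below swaps in plain co-occurrence counting, not word2vec’s training, over a hypothetical six-sentence corpus. Words that share contexts end up with similar count vectors. Real count-based methods also reweight very frequent words like “the”; this sketch skips that step.

```python
import math
from collections import Counter, defaultdict


def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions of it."""
    contexts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    contexts[word][tokens[j]] += 1
    return contexts


def cosine(a: Counter, b: Counter) -> float:
    # Counter returns 0 for missing keys without inserting them.
    dot = sum(count * b[w] for w, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b)


# Hypothetical toy corpus.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the mouse",
    "the dog chases the ball",
    "stocks fell on monday",
    "bonds fell on friday",
]
vecs = cooccurrence_vectors(corpus)
print(round(cosine(vecs["cat"], vecs["dog"]), 3))     # cat and dog share contexts
print(round(cosine(vecs["cat"], vecs["stocks"]), 3))  # no shared contexts
```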

How the Space Gets Learned

Embeddings are almost always learned rather than hand-crafted. The training process varies by context, but the core idea is consistent: you train a model to do some task (predict surrounding words, match a caption to an image, classify a document), and the intermediate representation the model develops to solve that task becomes the embedding.

For language models, a common approach is to train on the task of predicting masked or missing words. The model has to develop some internal representation of what words mean in context to do this well. The embedding is that internal representation, extracted and saved.

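For models like Sentence-BERT, training uses pairs of sentences with known relationships: pairs labeled as entailing or contradicting each other, or pairs scored for similarity. OpenAI has described a similar contrastive approach, training on pairs of related texts, for its earlier embedding models. In both cases the model learns to place similar sentences close together and dissimilar ones far apart. The training signal directly shapes the geometry.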
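One common way to turn pairs into a training signal is a contrastive loss with in-batch negatives. Each text should score higher with its own partner than with every other partner in the batch. The sketch below computes that loss for toy 2-dimensional vectors. The function name, the temperature of 0.05, and the data are assumptions for illustration. This is not the exact objective used by Sentence-BERT or OpenAI. A real trainer would also backpropagate this loss through the encoder.

```python
import math


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def in_batch_contrastive_loss(anchors, positives, temperature=0.05):
    """Mean cross-entropy where anchors[i] should match positives[i];
    every other positives[j] in the batch serves as a negative."""
    anchors = [normalize(a) for a in anchors]
    positives = [normalize(p) for p in positives]
    total = 0.0
    for i, anchor in enumerate(anchors):
        # Cosine similarity to every candidate, sharpened by the temperature.
        logits = [sum(a * p for a, p in zip(anchor, pos)) / temperature for pos in positives]
        # Numerically stable log-sum-exp.
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # -log softmax probability of the true partner
    return total / len(anchors)


anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]  # each partner points the same way as its anchor
swapped = [[0.1, 0.9], [0.9, 0.1]]  # each partner points at the wrong anchor

print(in_batch_contrastive_loss(anchors, aligned))  # small
print(in_batch_contrastive_loss(anchors, swapped))  # large
```

Minimizing this loss pulls matched pairs together and pushes mismatched ones apart. This is the geometric shaping the paragraph above describes.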

This is why embeddings are domain-sensitive. An embedding model trained on biomedical literature will have a different geometric structure around the word “culture” than one trained on social science papers. The word lives in a different neighborhood depending on what the model learned to care about.

The Dimensionality Problem (and What It Actually Means)

Modern embedding models produce vectors with hundreds or thousands of dimensions. OpenAI’s text-embedding-3-large produces 3072-dimensional vectors. This makes intuitive visualization impossible (you can’t draw a 3072-dimensional space), but it’s not arbitrary.

Higher dimensionality gives the model more room to encode distinctions. In a two-dimensional space, you can only place concepts along two axes. In a space with a thousand or more dimensions, the model can represent “formal tone,” “legal domain,” “negation,” “temporal reference,” and hundreds of other features at the same time. Individual dimensions rarely map cleanly to a single feature, though. A feature is better thought of as a direction spread across many dimensions.
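One reason extra dimensions help is that randomly chosen directions in high-dimensional space are almost orthogonal to each other. That leaves room for many nearly independent feature directions. The sketch below measures the average absolute cosine between random unit vectors at a few dimensionalities. It illustrates the geometry only and says nothing about what any particular model learns. The vector count and seed are arbitrary choices.

```python
import math
import random


def random_unit_vector(dim, rng):
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean_abs_cosine(dim, n=30, seed=0):
    """Average |cosine| over all pairs of n random unit vectors in `dim` dimensions."""
    rng = random.Random(seed)
    vecs = [random_unit_vector(dim, rng) for _ in range(n)]
    total, pairs = 0.0, 0
    for i in range(n):
        for j in range(i + 1, n):
            total += abs(sum(a * b for a, b in zip(vecs[i], vecs[j])))
            pairs += 1
    return total / pairs


for dim in (2, 100, 1000):
    print(dim, round(mean_abs_cosine(dim), 3))  # shrinks toward 0 as dim grows
```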

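The cost is obvious: storage and computation. A single 3072-dimensional float32 vector takes 3072 × 4 = 12,288 bytes, about 12KB. At scale, that adds up fast. This is why many production systems compress embeddings when approximate similarity search is good enough. One option is reducing dimensions, either with PCA or by truncating vectors from models trained to support it, as OpenAI’s text-embedding-3 models do through a dimensions parameter. Another is quantizing each value to fewer bits. (UMAP is better suited to visualizing embeddings than to compressing a search index.) You lose some precision and gain a lot of speed and storage efficiency.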
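The storage arithmetic, and one compression option, fit in a few lines. The example below is a minimal illustration of symmetric scalar (int8) quantization. It keeps all 3072 dimensions but stores each value in one byte instead of four. This is a different technique from PCA, and the helper names are hypothetical. Production libraries use more careful schemes, such as per-dimension scales or product quantization.

```python
import random

BYTES_PER_FLOAT32 = 4


def storage_bytes(num_vectors, dim, bytes_per_value=BYTES_PER_FLOAT32):
    """Raw storage for num_vectors vectors of dim values each (no index overhead)."""
    return num_vectors * dim * bytes_per_value


def quantize_int8(vec):
    """Symmetric scalar quantization: map [-max|x|, max|x|] onto integers -127..127."""
    scale = max(abs(x) for x in vec) / 127 or 1.0  # avoid a zero scale for an all-zero vector
    return [round(x / scale) for x in vec], scale


def dequantize_int8(codes, scale):
    return [c * scale for c in codes]


print(storage_bytes(1, 3072))  # → 12288 bytes, about 12KB
print(storage_bytes(1_000_000, 3072) / 1e9, "GB as float32")
print(storage_bytes(1_000_000, 3072, bytes_per_value=1) / 1e9, "GB as int8")

rng = random.Random(0)
vec = [rng.uniform(-1.0, 1.0) for _ in range(3072)]
codes, scale = quantize_int8(vec)
restored = dequantize_int8(codes, scale)
print(max(abs(a - b) for a, b in zip(vec, restored)), "max per-value error")
```

The rounding error per value is at most half of one quantization step. Whether that error matters depends on how close the competing results are in similarity.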

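The tradeoff is real, and the right call depends on how much retrieval quality you can give up. Compressed vectors can rank some results differently, letting weaker matches in and pushing relevant ones out. This is the same class of problem as every other performance vs. accuracy tradeoff in systems work.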

What Similarity Search Actually Does

Once you have embeddings, the primary operation is finding the stored vectors closest to a query vector. This is nearest neighbor search, and at scale it’s usually done approximately (approximate nearest neighbor, or ANN, search). It’s more interesting than it sounds.

The naive implementation computes the distance from your query vector to every stored vector. That’s O(n·d) per query for n vectors of d dimensions, so the cost grows linearly with the size of the collection. At a million vectors, it’s still fast enough for many applications. At a billion vectors, it’s not.
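The naive approach fits in a few lines. This sketch assumes the stored vectors are already L2-normalized, so a dot product equals cosine similarity. The function name and data are hypothetical. Every query touches every stored vector, which is exactly the cost that index structures try to avoid.

```python
import heapq
import math


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def exact_top_k(query, unit_vectors, k):
    """Brute-force search: score every stored vector and keep the k best.
    Cost is O(n * d) per query for n stored vectors of d dimensions."""
    q = normalize(query)
    scored = ((sum(a * b for a, b in zip(q, v)), i) for i, v in enumerate(unit_vectors))
    return [i for _, i in heapq.nlargest(k, scored)]


stored = [normalize(v) for v in ([1.0, 0.0], [0.0, 1.0], [0.7, 0.7], [-1.0, 0.0])]
print(exact_top_k([1.0, 0.1], stored, k=2))  # → [0, 2]
```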

Libraries like FAISS (from Meta AI) and systems like Pinecone, Weaviate, and pgvector build index structures that let you find approximate nearest neighbors in sublinear time. One of the most widely used approaches is HNSW (Hierarchical Navigable Small World graphs), which builds a multi-layer graph. A search starts in a sparse top layer with long-range links and descends through denser layers, moving from coarse to fine resolution and skipping most of the search space.
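HNSW itself takes more code than fits here, so the sketch below swaps in a simpler approximate-search structure: an inverted-file (IVF) index, another index family that FAISS provides. It clusters the stored vectors with a few rounds of k-means, then scans only the nprobe clusters nearest the query. This is a toy under stated assumptions, not FAISS’s implementation. It uses squared Euclidean distance, and the class and helper names are hypothetical; nprobe mirrors the name FAISS uses for the same setting. It still shows the same trade: skip most of the data, and accept that a true neighbor in an unscanned cluster can be missed.

```python
import random


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, k)]
    for _ in range(iters):
        buckets = [[] for _ in range(k)]
        for v in vectors:
            nearest = min(range(k), key=lambda c: sq_dist(v, centroids[c]))
            buckets[nearest].append(v)
        for c, members in enumerate(buckets):
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(xs) / len(members) for xs in zip(*members)]
    return centroids


class ToyIVFIndex:
    """Buckets vectors by nearest centroid; queries scan only the nprobe closest buckets."""

    def __init__(self, vectors, n_lists, seed=0):
        self.vectors = vectors
        self.centroids = kmeans(vectors, n_lists, seed=seed)
        self.lists = [[] for _ in range(n_lists)]
        for i, v in enumerate(vectors):
            self.lists[self._closest_lists(v, 1)[0]].append(i)

    def _closest_lists(self, v, nprobe):
        order = sorted(range(len(self.centroids)), key=lambda c: sq_dist(v, self.centroids[c]))
        return order[:nprobe]

    def search(self, query, k, nprobe=1):
        # Only vectors in the probed buckets are ever compared with the query.
        candidates = [i for c in self._closest_lists(query, nprobe) for i in self.lists[c]]
        candidates.sort(key=lambda i: sq_dist(query, self.vectors[i]))
        return candidates[:k]


rng = random.Random(42)
data = [[rng.uniform(-1.0, 1.0) for _ in range(8)] for _ in range(500)]
index = ToyIVFIndex(data, n_lists=10)
print(index.search(data[123], k=5, nprobe=2))
```

Raising nprobe scans more buckets, which trades speed back for accuracy. Probing every bucket reduces to exact search.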

The word “approximate” matters. You’re trading guaranteed correctness for speed. You might miss the single closest vector in exchange for finding most of the true top-K closest vectors in milliseconds rather than seconds. How many you find (the recall) depends on the index and how its parameters are tuned. For semantic search applications, this is almost always the right call. Human meaning doesn’t have crisp boundaries anyway.
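Whether an index finds most of the true neighbors is measurable. Run the same queries through exact search and through the index, and compare the results. The sketch below computes recall@k for one query. The function name is hypothetical, and in practice you would average it over a sample of real queries.

```python
def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true top-k neighbors that the approximate search also returned."""
    exact = set(exact_ids)
    return len(exact & set(approx_ids)) / len(exact)


print(recall_at_k([3, 7, 9, 2], [3, 7, 2, 5]))  # → 0.75
```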

The practical implication: when you build a retrieval-augmented system and something comes back that seems wrong, the problem is usually not the ANN search. It’s the embedding model or the chunking strategy. The search is doing what it was told. What it was told is often the issue.
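Chunking is easy to overlook because it looks so simple. Here is a minimal sketch, assuming word-based splitting with hypothetical defaults of 200-word windows and 50 words of overlap. The overlap gives text near a chunk boundary a second chance to appear alongside its surrounding context. Many systems split on tokens, sentences, or document structure instead, and that choice changes what each embedding ends up representing.

```python
def chunk_words(text, chunk_size=200, overlap=50):
    """Split text into windows of chunk_size words; consecutive windows share overlap words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=2):
    print(chunk)
```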

The Part Most Applications Get Wrong

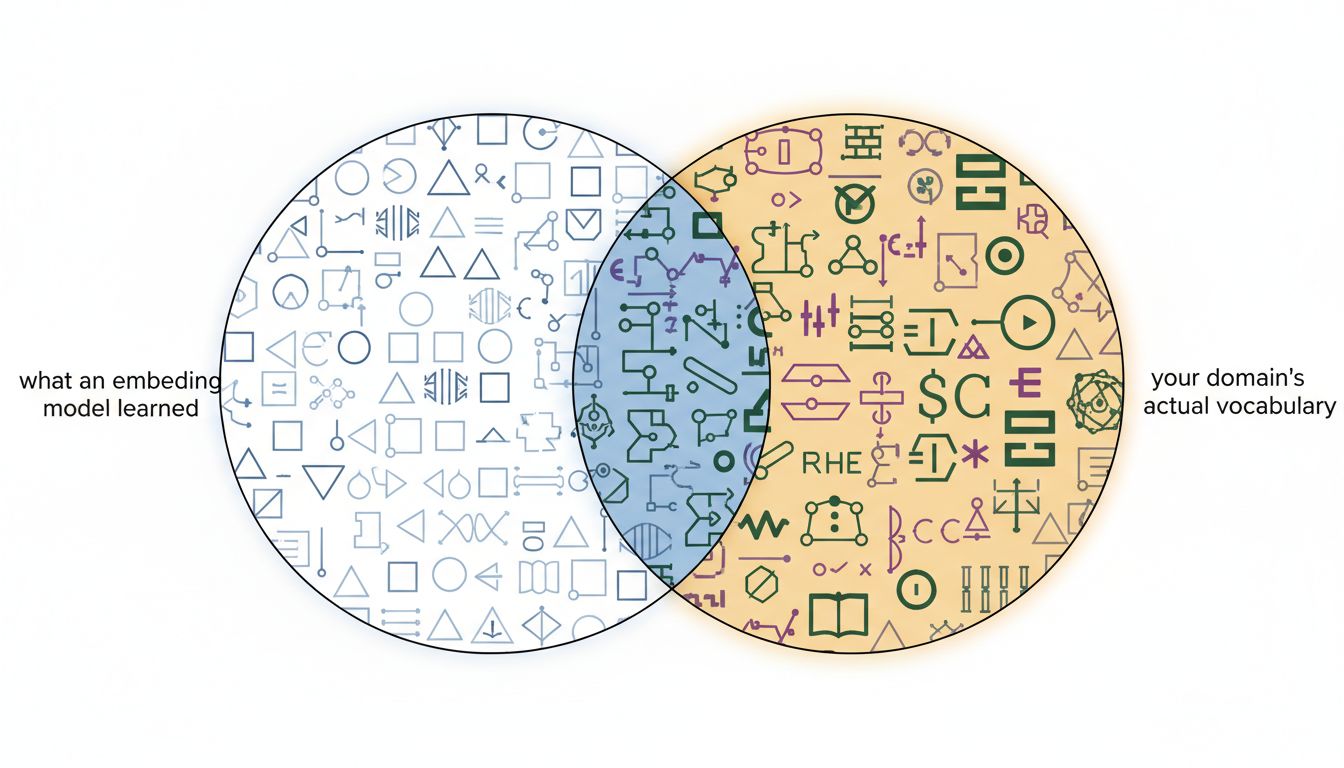

Here’s where most embeddings tutorials stop, and where most production bugs start: embeddings only encode what the training distribution covered.

If you use a general-purpose embedding model to search a codebase, you’re asking a model trained mostly on natural language to encode the semantic relationships in Python or SQL. It will do something reasonable, because there’s enough code in most training sets, but it will do worse than a model specifically trained on code. The geometry of the space won’t reflect the relationships that matter for your domain.

More subtly: embeddings don’t encode what your users mean when they phrase a query in a way that’s underrepresented in the training data. Internal jargon, product-specific terminology, abbreviations that have specific meanings in your context. These will either land in the wrong neighborhood or be near nothing relevant at all.

This is why fine-tuned or domain-specific embedding models exist. Cohere, Voyage AI, and others offer models trained on specific domains. In serious production systems, fine-tuning your embedding model on domain-specific data often does more good than any amount of prompt engineering on the generation side.

Cross-Modal Embeddings and the Bigger Picture

The most interesting extension of this idea is cross-modal embeddings, where different types of data (text, images, audio, code) get embedded into a shared vector space. OpenAI’s CLIP model does this for text and images. A text description of a red car and a photograph of a red car end up near each other in the shared space, even though one is pixels and one is tokens.

This is not a coincidence of architecture. It’s the consequence of training the model to place matched pairs close together across modalities. The shared space becomes a universal semantic coordinate system, at least within what the model learned.
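Once both encoders write into the same space, matching across modalities is the same similarity computation as before. The sketch below scores one text embedding against several image embeddings and turns the scores into probabilities with a softmax, similar in spirit to how CLIP scores image-text pairs. The vectors are hypothetical stand-ins for encoder outputs. The temperature of 0.01 is an assumed value; CLIP learns its own scale during training.

```python
import math


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def match_probabilities(text_vec, image_vecs, temperature=0.01):
    """Softmax over cosine similarities between one text embedding and each image embedding."""
    t = normalize(text_vec)
    logits = [sum(a * b for a, b in zip(t, normalize(img))) / temperature for img in image_vecs]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical stand-ins for text-encoder and image-encoder outputs in a shared space.
text_red_car = [0.9, 0.1, 0.4]
image_vecs = [
    [0.85, 0.15, 0.45],  # imagined photo of a red car
    [0.10, 0.90, 0.30],  # imagined photo of a blue boat
]

print(match_probabilities(text_red_car, image_vecs))
```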

The practical upshot: you can search images with text queries, find code that matches a natural language description, or build recommendation systems where user behavior and item descriptions live in the same space and interact naturally. These applications aren’t clever hacks. They’re the direct consequence of taking the geometry seriously.

What this also means is that the “embeddings are just numbers” framing actively obscures what makes this powerful. The numbers aren’t incidental. They’re a learned map of meaning. The map has real structure, real limits, and real failure modes. Understanding those things is the difference between building a retrieval system that works and one that confuses your users for reasons that are genuinely hard to debug.

What This Means

Embeddings are a learned geometric encoding of meaning. The training process discovers structure in data and distributes it across dimensions of a vector space. Semantic similarity becomes spatial proximity. Some semantic relationships become approximate vector arithmetic.

The practical takeaways:

- Model choice matters more than most tutorials suggest. General-purpose embedding models are a reasonable starting point. For domain-specific applications, they’re often the bottleneck.

- Dimensionality is a tradeoff, not a quality metric. Higher dimensions encode more distinctions but cost more. Reduce aggressively when approximate retrieval is sufficient.

- Approximate nearest neighbor search is usually the right behavior, not a grudging compromise. For semantic applications, you want the mostly-right answer fast, not the exactly-right answer slowly.

- When retrieval is broken, check the embedding model first. The search index is usually doing its job. The representation is usually the problem.

- Cross-modal embeddings are the same idea, not a different one. Different inputs, same principle: train a model to map meaning into a shared space, and the geometry becomes the interface.

The deepest thing about embeddings isn’t how they work mechanically. It’s that they work at all. Training a model to predict words, and discovering that the resulting vectors encode the relationship between royalty and gender, is genuinely surprising. That surprise is worth preserving. It means something real is being captured.