When you delete your account on an AI platform, a natural assumption kicks in: the data is gone. The conversations you had, the prompts you typed, the feedback you clicked, all of it erased. This is almost certainly wrong, and the gap between what users expect and what actually happens is wide enough to matter.

This isn’t a story about malicious actors. Most AI companies aren’t scheming to keep your data out of spite. The issue is structural. The way modern AI systems are built makes “deletion” a genuinely complicated technical and legal concept, and the policies governing that complexity are, in most cases, written to favor the company.

What Your Data Actually Becomes

When you use a large language model product, your inputs don’t just bounce off the model and disappear. They get logged. They may get reviewed by human contractors for safety and quality assessment. Depending on your account type and the terms you agreed to, they may get used to fine-tune future model versions. At some point, a subset of that data may become part of a training dataset.

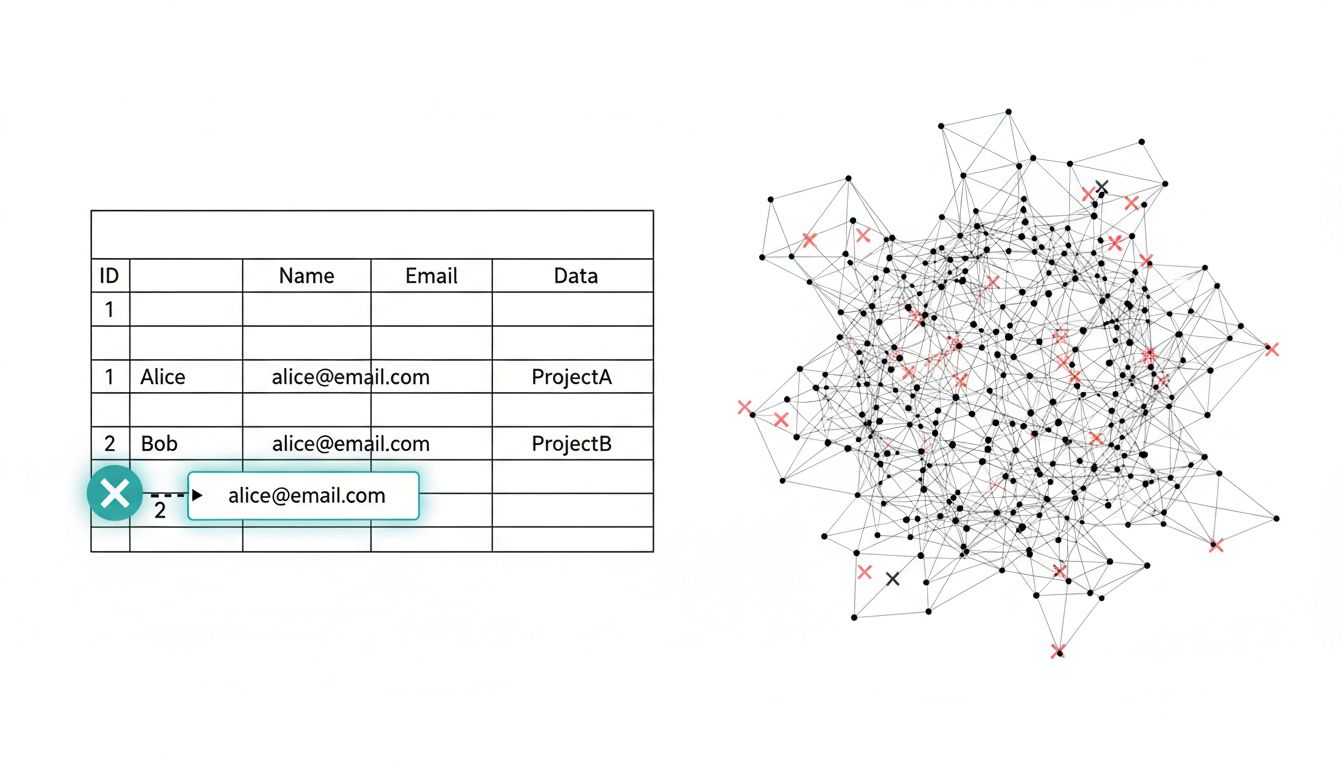

That last step is where deletion gets complicated. Training a neural network is not like writing to a database. The model doesn’t store your conversations as rows in a table that can be dropped with a SQL command. Your data gets dissolved into billions of floating-point weights through a mathematical process that is, practically speaking, irreversible. There is no reliable way to surgically remove the influence of a single training example from a trained model short of retraining it, which is extraordinarily expensive. The reason is that every weight is shaped by statistical patterns across the whole dataset. No individual parameter, or group of parameters, corresponds to any one person’s contribution.
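A toy example makes the point concrete. The sketch below is not how production language models are trained. It fits a two-parameter line, y = w*x + b, by gradient descent in plain Python on five made-up data points, then retrains without the last point. The fitted parameters are just two numbers, and nothing in them records which example contributed what. The only way this sketch recovers the “without that point” model is to train again on the remaining data. For a simple linear model like this one, closed-form shortcuts exist. Large neural networks trained the usual way have no comparably cheap exact shortcut.

```python
# Toy illustration only: fit y = w*x + b by full-batch gradient descent on
# mean squared error, then retrain without one example and compare.

def train(data, lr=0.05, epochs=2000):
    """Fit (w, b) to a list of (x, y) pairs with plain gradient descent."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Every example contributes to every gradient step, so every
        # example ends up blended into both parameters.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Made-up data; the last point plays the role of one unusual user's contribution.
data = [(0.0, 1.0), (1.0, 3.1), (2.0, 4.9), (3.0, 7.2), (4.0, 30.0)]

full = train(data)               # trained on everyone
without_last = train(data[:-1])  # "deleting" the last point means retraining
print(full, without_last)
```

With these numbers, the full fit lands near w = 6.21, b = -3.18, while the retrained fit lands near w = 2.04, b = 0.99. One example shifted both parameters, and nothing in the first pair tells you how to subtract it out.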

So when a company says they’ll delete your account data, they can credibly promise to delete the raw logs, the stored conversation history, the account record itself. What they generally cannot promise, and usually don’t, is that your data’s influence on already-trained models will be erased.
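Here is a minimal sketch of what that distinction looks like inside a deletion routine. Everything in it is hypothetical: the store names, the `delete_user` function, and the receipt format are illustrative assumptions, not any platform’s actual design. Records keyed by a user ID can be filtered out. Trained weights have no such key, so the routine cannot reach them, and an honest receipt says so.

```python
from dataclasses import dataclass, field

@dataclass
class PlatformStores:
    """Hypothetical storage layout for an AI product; all names are illustrative."""
    accounts: dict = field(default_factory=dict)       # user_id -> profile record
    conversations: dict = field(default_factory=dict)  # user_id -> list of messages
    request_logs: list = field(default_factory=list)   # (user_id, prompt) tuples
    model_weights: list = field(default_factory=list)  # trained parameters: no user_id anywhere

def delete_user(stores: PlatformStores, user_id: str) -> dict:
    """Remove every record keyed by user_id and report what was and was not covered."""
    stores.accounts.pop(user_id, None)
    stores.conversations.pop(user_id, None)
    stores.request_logs[:] = [row for row in stores.request_logs if row[0] != user_id]
    return {
        "deleted": ["account", "conversations", "request_logs"],
        # Weights carry no per-user key, so there is nothing here to filter on.
        "not_deleted": ["model_weights"],
    }

stores = PlatformStores(
    accounts={"u1": {"email": "a@example.com"}, "u2": {"email": "b@example.com"}},
    conversations={"u1": ["hello"], "u2": ["hi"]},
    request_logs=[("u1", "hello"), ("u2", "hi")],
    model_weights=[0.12, -0.7, 1.05],
)
receipt = delete_user(stores, "u1")
```

After the call, u1’s account, conversations, and log rows are gone, but `model_weights` is byte-for-byte what it was before.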

What the Policies Actually Say

Read the terms of service for major AI platforms carefully and you’ll find language that reflects this reality, just buried in legalese. OpenAI’s privacy policy distinguishes between personal data used for services and data used to improve models. They note that data used to train models may not be deletable in the traditional sense. Anthropic and Google take similar positions.

The GDPR’s “right to erasure” complicates this in Europe. Under Article 17, individuals can request deletion of their personal data, but Article 17(3) lists exceptions, including when processing is necessary for compliance with a legal obligation or for the performance of a task carried out in the public interest. Separately, data that has been genuinely anonymized falls outside the GDPR altogether. Companies often argue that once data is aggregated and used in training, it no longer constitutes personal data in a legally meaningful sense. Whether that argument holds water is still being tested in courts and regulatory proceedings.

In the United States, there’s no equivalent federal baseline. California’s CCPA, as amended by the CPRA, gives residents some deletion rights, but enforcement against AI training data specifically remains murky. The practical result is that your leverage as a user varies enormously depending on where you live and how a company chooses to interpret its obligations.

The Fine-Tuning Wrinkle

Consumer products are one thing. Enterprise AI products introduce a more pointed version of this problem. Many platforms allow businesses to fine-tune models on their proprietary data, creating customized versions that behave according to specific instructions, tone, or knowledge bases. When the business ends its contract and deletes its account, what happens to those fine-tuned weights?

This is not an abstract concern. A company might fine-tune a model on internal documentation, customer service transcripts, or product specs that contain trade secrets. If they later switch vendors, they want that data gone. The contract terms governing this are often vague, and the technical complexity of what “gone” means for model weights creates genuine ambiguity.

Some vendors now offer explicit guarantees around fine-tuning data deletion, including commitments to delete the fine-tuned weights themselves. Others offer no such guarantees. As with cloud pricing, the details that matter most in data handling terms are often the hardest to compare across vendors.
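One way a vendor could make a fine-tuning deletion guarantee checkable is to track lineage, meaning which artifacts were derived from which. The sketch below assumes a hypothetical registry in which each artifact lists its parents. Deleting a customer then means deleting their own artifacts plus everything downstream of them, such as fine-tuned weights and copies of those weights, while leaving the shared base model alone. The names (`Artifact`, `artifacts_to_delete`, `acme`) are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class Artifact:
    name: str
    kind: str                      # e.g. "dataset", "base_model", "fine_tuned_model"
    owner: Optional[str]           # customer recorded as owner; None if not recorded
    parents: Tuple[str, ...] = ()  # names of the artifacts this one was derived from

def artifacts_to_delete(registry: dict, customer: str) -> set:
    """Return the customer's own artifacts plus everything derived from them."""
    doomed = {name for name, a in registry.items() if a.owner == customer}
    changed = True
    while changed:  # keep propagating down the lineage graph until nothing new is added
        changed = False
        for name, a in registry.items():
            if name not in doomed and any(p in doomed for p in a.parents):
                doomed.add(name)
                changed = True
    return doomed

registry = {a.name: a for a in [
    Artifact("base-v1", "base_model", None),
    Artifact("acme-docs", "dataset", "acme"),
    # Ownership was never recorded on the fine-tuned model itself; lineage still catches it.
    Artifact("acme-ft-1", "fine_tuned_model", None, ("base-v1", "acme-docs")),
    Artifact("acme-ft-1-int8", "fine_tuned_model", None, ("acme-ft-1",)),
]}
to_delete = artifacts_to_delete(registry, "acme")
```

The design choice worth noticing is that the fine-tuned model and its compressed copy are caught even though nobody tagged them with an owner. A registry that only tracked ownership would miss them.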

What You Can Actually Control

The answer, honestly, is less than you’d like. But it’s not nothing.

First, timing matters. Many platforms have settings to opt out of training data use before your data is ever incorporated into a model. For OpenAI’s API, exclusion is the default: API data is not used for training unless you opt in. ChatGPT users can turn off training use through a toggle in their privacy settings. Using these options before you generate significant data is far more effective than trying to delete data after the fact.
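Why timing matters can be shown with a simplified, hypothetical data pipeline in which the training set is a snapshot copied at a point in time and filtered by whatever opt-outs exist at that moment. An opt-out recorded afterwards keeps the user out of future snapshots but does nothing to a snapshot, or a model, that already exists. Real pipelines are more involved; `build_training_snapshot` and the data shapes here are assumptions for illustration.

```python
def build_training_snapshot(conversations, opted_out):
    """Copy the conversations of every user who has not opted out as of right now."""
    return [c for c in conversations if c["user"] not in opted_out]

conversations = [
    {"user": "alice", "text": "draft of my cover letter"},
    {"user": "bob", "text": "help with a SQL query"},
]
opted_out = set()

snapshot_v1 = build_training_snapshot(conversations, opted_out)  # alice is included
opted_out.add("alice")                                           # alice opts out later
snapshot_v2 = build_training_snapshot(conversations, opted_out)  # alice is excluded
# snapshot_v1 is unchanged: anything trained on it has already seen alice's text.
```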

Second, understand the tier you’re on. Enterprise contracts typically come with stronger data handling guarantees than consumer terms of service. If your organization is using AI products and data sovereignty is a concern, this is a contract negotiation point, not a settings menu.

Third, read the deletion confirmation carefully. When a platform confirms your deletion request, note exactly what they say they’re deleting. Reputable companies will specify what is and isn’t covered. Vague language like “your account and associated data” should prompt follow-up questions.

Fourth, consider what you’re putting in. The most reliable protection is treating AI chat interfaces the way you’d treat a public forum: don’t put in anything you’d be uncomfortable seeing persist indefinitely. This sounds overly cautious until you consider that these systems genuinely cannot guarantee full erasure.
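If you do need to paste real material into a chat interface, one partial habit is to strip obvious identifiers first. The sketch below is a hypothetical client-side filter built on two simple regular expressions. It catches common email and phone formats only, and it will miss names, addresses, account numbers, and anything written in an unusual format, so it supplements judgment rather than replacing it.

```python
import re

# Illustrative patterns only: they catch obvious formats, not all personal data.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

def redact(prompt: str) -> str:
    """Replace each pattern match with a [LABEL] placeholder before the prompt is sent."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt

print(redact("Email jane.doe@example.com or call +1 415 555 0100 today"))
# prints "Email [EMAIL] or call [PHONE] today"
```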

The Honest Answer

Deleting your account on an AI platform will remove the visible record of your activity. It will not, in most cases, remove your data’s influence on models that have already been trained. The technical reasons for this are real, not excuses. The policy language reflects this reality, just often without explaining it plainly.

The industry needs clearer standards here. Differential privacy techniques exist that can limit the extractability of individual training examples from models, and some research labs are applying them. Unlearning algorithms, which attempt to reduce a model’s dependence on specific training data after the fact, are an active area of research. Neither is a complete solution yet.
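The core move in differentially private training, as in the DP-SGD algorithm, is to bound how much any single example can push the weights and then hide that bounded push under random noise. The sketch below shows one update step on plain Python lists. Each per-example gradient is clipped to a maximum L2 norm, the clipped gradients are summed, Gaussian noise scaled to that norm is added, and the result is averaged. It deliberately omits the privacy accounting that turns these settings into a formal guarantee; production libraries such as Opacus for PyTorch handle that part. Function names and parameter values here are illustrative.

```python
import math
import random

def clip(grad, max_norm):
    """Scale one example's gradient down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def dp_sgd_step(params, per_example_grads, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD-style update: clip each gradient, sum, add Gaussian noise, average, step."""
    batch_size = len(per_example_grads)
    clipped = [clip(g, max_norm) for g in per_example_grads]
    summed = [sum(column) for column in zip(*clipped)]
    # Noise std is proportional to max_norm, the most any one example can contribute.
    noisy = [s + rng.gauss(0.0, noise_multiplier * max_norm) for s in summed]
    return [p - lr * g / batch_size for p, g in zip(params, noisy)]

rng = random.Random(0)
params = [0.0, 0.0]
grads = [[3.0, 4.0], [0.0, 0.5]]  # the first example's gradient is large and gets clipped
new_params = dp_sgd_step(params, grads, lr=1.0, max_norm=1.0, noise_multiplier=1.1, rng=rng)
```

Clipping caps any one person’s influence on a step, and the noise makes that capped influence hard to detect from the resulting weights. That is what limits extractability, at some cost in model accuracy.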

Until those technical and regulatory floors exist, the honest framework is: assume that data you share with an AI system may persist in some form beyond your account deletion. Use the opt-out controls available to you before generating data, not after. And treat enterprise data handling terms as a procurement requirement, not an afterthought.