Spotify’s recommendation engine is one of the most-discussed systems in consumer technology, and most of the discussion focuses on the wrong thing. People talk about collaborative filtering, about how the system learns from what millions of users play and skip. But the early version of what became Discover Weekly didn’t start by analyzing listening behavior. It started by reading the web.

The team scraped blog posts, forum threads, and music reviews, looking for sentences like “if you like Radiohead, you should try Portishead.” They fed all of that text into a model and let it learn associations between artists from language alone. The output wasn’t a list of related artists. It was a set of numbers, one vector per artist, positioned in a high-dimensional space so that artists people wrote about in similar contexts ended up near each other.

Those numbers are embeddings. And the Spotify story is one of the clearest examples of what an embedding actually represents, and why that representation is more powerful than it first appears.

The Setup

Before embeddings became common infrastructure, music recommendation was largely a manual or rule-based problem. You could tag songs by genre, tempo, and mood, but someone had to assign those tags. You could track what users listened to together, but that required enough listening history to be useful. New artists, niche genres, and users with thin histories fell into gaps.

Spotify’s research team, working on what would eventually become their audio and natural-language understanding stack, tried something different. They treated the question “which artists belong near each other?” as a language problem. If the web’s collective writing placed two artists in similar sentence contexts, that was a signal worth capturing.

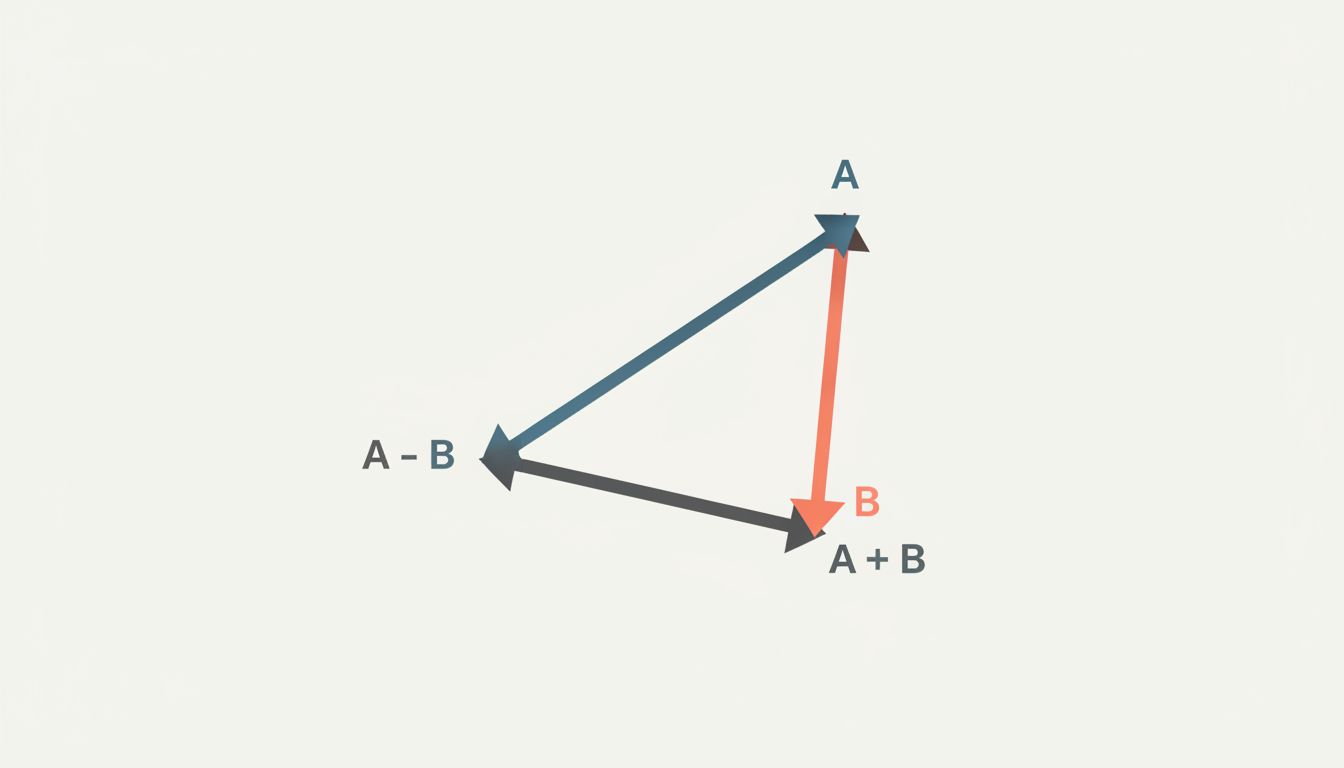

The technique they leaned on came from Word2Vec, a model Google researchers published in 2013. Word2Vec’s core insight was that word meaning could be encoded as position in a geometric space, and that position could be learned from context alone. Words that appeared in similar surrounding contexts ended up near each other. “King” minus “man” plus “woman” produced a vector close to “queen.” Nobody programmed that relationship. The model inferred it from patterns in text.
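The analogy arithmetic is easier to see with numbers in hand. The sketch below uses hand-picked two-dimensional vectors, which is an assumption made purely for illustration: real Word2Vec vectors are learned from text, have hundreds of dimensions, and do not come with tidy axis meanings. The `vectors` table and the `analogy` helper are hypothetical names, not part of any library.

```python
import math

# Hand-picked toy vectors (NOT learned). Axis 0 loosely stands for "royalty",
# axis 1 for "gender". Learned embeddings have no such labeled axes.
vectors = {
    "king":   [0.9, 0.1],
    "queen":  [0.9, 0.9],
    "prince": [0.8, 0.1],
    "man":    [0.1, 0.1],
    "woman":  [0.1, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, vectors[w]))

print(analogy("king", "man", "woman"))  # queen
```

Excluding the input words from the candidates is a common convention for this kind of query, since the nearest vector to the result is often one of the inputs.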

Spotify applied the same logic to artists instead of words. An artist became a point in space. Proximity became a proxy for similarity.

What Happened

The system they built, described in a 2014 paper by Shengbo Guo and Sander Dieleman, treated each artist mention in a sentence as analogous to a word in a vocabulary. The model learned to predict which artists appeared in similar contexts, and adjusted the vectors accordingly during training. After enough iterations across enough text, the geometry of the space encoded real musical relationships.
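To make "an artist mention is like a word" concrete, here is a rough sketch of how skip-gram style training pairs could be pulled out of text. It is a simplification under stated assumptions, not Spotify's pipeline: the artist list, the example corpus, and the `artist_mentions` and `training_pairs` helpers are all hypothetical, matching is plain string search, and only co-mentioned artists are used as context (a fuller version would keep the surrounding words as well).

```python
import re

# Hypothetical artist vocabulary. A real system would need proper entity recognition.
ARTISTS = ["Radiohead", "Portishead", "Massive Attack", "Metallica"]

def artist_mentions(sentence):
    """Return the known artists in the order they appear in the sentence."""
    found = []
    for artist in ARTISTS:
        for match in re.finditer(re.escape(artist), sentence):
            found.append((match.start(), artist))
    return [name for _, name in sorted(found)]

def training_pairs(sentences, window=2):
    """Build (target, context) pairs from artists mentioned near each other."""
    pairs = []
    for sentence in sentences:
        mentions = artist_mentions(sentence)
        for i, target in enumerate(mentions):
            lo = max(0, i - window)
            hi = min(len(mentions), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    pairs.append((target, mentions[j]))
    return pairs

# Illustrative sentences only; the second one is made up for this example.
corpus = [
    "If you like Radiohead, you should try Portishead.",
    "Portishead and Massive Attack defined the Bristol sound.",
]

pairs = training_pairs(corpus)
for target, context in pairs:
    print(target, "->", context)
```

A Word2Vec-style model would then be trained to predict the context artist from the target artist, nudging the two vectors closer each time a pair like this shows up.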

Jazz artists clustered near other jazz artists. Subgenres of metal ended up in their own neighborhoods. Artists who bridged genres, like Beck, sat in ambiguous territory between clusters, which itself was informative.

The useful thing about this representation is what you can do with it after training. You can find the nearest neighbors to any artist and get a ranked list of similar acts. You can average vectors across a user’s listening history and land on a point that represents their taste, then find artists near that point. You can do arithmetic: take the vector for a well-known artist, subtract a vector that stands for a common characteristic (say, high commercial appeal), and look for nearby artists who share the musical DNA without the mainstream presence.
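A nearest-neighbor lookup is only a few lines once the vectors exist. The sketch below ranks artists by cosine similarity, a common choice for comparing embeddings (the source doesn't say which distance Spotify used). The three-dimensional vectors are made up for illustration, and `artist_vectors` and `nearest` are hypothetical names.

```python
import math

# Made-up 3-D vectors for illustration. Real artist embeddings are learned
# and much higher-dimensional.
artist_vectors = {
    "Radiohead":  [0.8, 0.6, 0.1],
    "Portishead": [0.7, 0.7, 0.2],
    "Beck":       [0.5, 0.4, 0.6],
    "Metallica":  [0.1, 0.2, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(query, k=2):
    """Return the k artists most similar to `query`, best match first."""
    q = artist_vectors[query]
    scored = [
        (cosine(q, vec), name)
        for name, vec in artist_vectors.items()
        if name != query
    ]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

print(nearest("Radiohead"))  # ['Portishead', 'Beck']
```

With millions of items, a linear scan like this is usually replaced by an approximate nearest-neighbor index, but the ranking idea is the same.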

This is not a lookup table. It’s a compressed model of relationships, and those relationships generalize to artists the system has never been asked about directly.

When collaborative filtering and this embedding-based approach were combined into what became Discover Weekly, the results were strong enough that Spotify has cited the feature as a meaningful driver of user engagement. The embedding layer handled cold-start problems (new artists with no listening history but plenty of web coverage) and enriched recommendations for users with unusual tastes.

Why It Matters

An embedding is a learned compression. You take something (an artist, a word, a product, a user) and ask a model to represent it as a fixed-length list of numbers, in such a way that the geometry of those numbers reflects meaningful relationships in the original domain.

Taken one at a time, the numbers are not interpretable. Dimension 47 of an artist embedding doesn’t mean “has distorted guitars” or “peaked in the 1990s.” The meaning lives in the relationships between vectors, not in any individual dimension. People sometimes explain embeddings as “putting similar things close together,” which is technically correct but slightly backwards. You don’t move similar things together. You train the model on a task, and similarity in the embedding space emerges as a side effect of learning to do that task well.

This matters practically because it means the quality of your embeddings is tied to the quality of your training task and training data. Spotify’s artist embeddings were good because web writing about music is rich and plentiful. If you tried the same approach on a domain with sparse, low-quality text, your embeddings would reflect that poverty.

It also means embeddings transfer. An embedding trained on one task can often be fine-tuned or used directly for a related task, because the compressed representation captures something real about the domain. This is part of why large language models are so broadly useful: their learned representations are trained on enough varied text that the resulting geometry encodes a huge range of conceptual relationships. As with fine-tuning more broadly, what you get from transfer depends heavily on how well the original training task aligns with your new one.

What You Can Learn From This

If you’re building anything that involves similarity, recommendation, or search, there are a few concrete things to take from Spotify’s approach.

Your training task defines your geometry. Spotify trained on co-occurrence in music writing, so their embeddings captured “sounds like” relationships. If you trained on purchase co-occurrence, you’d get embeddings that capture “bought together” relationships instead. Before you use someone else’s embeddings, or train your own, be explicit about what task produced them and whether that task is actually the one you care about.

Content-derived embeddings handle cold-start where collaborative filtering cannot. Collaborative filtering requires interaction history, and a brand-new item has none. Embeddings can be generated from content, metadata, or text descriptions alone. For any system where you regularly add new items, having an embedding-based fallback is worth the engineering investment.

The geometry is inspectable. When a recommendation looks wrong, you can look at what vectors are nearest to the one you’re investigating. This gives you a debugging path that pure behavioral models don’t offer. If an artist ends up in the wrong neighborhood, you can trace it back to the text or training signal that pulled it there.

Averaging vectors is surprisingly powerful. Spotify represented user taste as a point in artist-embedding space by averaging the vectors for everything a user had listened to. That simple operation produces a usable taste profile. The same trick works for documents, products, and sessions. You don’t always need a separate user-modeling step.
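As a minimal sketch of that averaging trick: take the mean of the vectors in a listening history, then rank everything the user hasn’t played by similarity to that mean. The vectors are invented for illustration, `mean_vector` and `recommend` are hypothetical helpers, and the unweighted mean is an assumption (a production system might weight by play count or recency).

```python
import math

# Made-up 3-D artist vectors for illustration only.
artist_vectors = {
    "Radiohead":      [0.8, 0.6, 0.1],
    "Portishead":     [0.7, 0.7, 0.2],
    "Massive Attack": [0.7, 0.6, 0.3],
    "Beck":           [0.5, 0.4, 0.6],
    "Metallica":      [0.1, 0.2, 0.9],
}

def mean_vector(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def recommend(history, k=2):
    """Rank unheard artists by similarity to the average of the history."""
    taste = mean_vector([artist_vectors[a] for a in history])
    candidates = [a for a in artist_vectors if a not in history]
    candidates.sort(key=lambda a: cosine(taste, artist_vectors[a]), reverse=True)
    return candidates[:k]

print(recommend(["Radiohead", "Portishead"]))  # ['Massive Attack', 'Beck']
```

One caveat with a plain mean: a user with two very different tastes ends up at a midpoint that may sit near neither, so clustering the history first can be a better fit in those cases.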

The deeper lesson from Spotify’s embedding work is that the right question isn’t “what is this thing?” It’s “what task, if learned well, would force similar things to end up near each other?” Answer that question clearly, and the representation follows. Get it wrong, and you end up with a space full of numbers that are technically an embedding but geometrically useless.

That distinction, between an embedding that encodes real structure and one that just encodes noise, is where most practical embedding problems actually live. The math is the easy part.