There’s a natural impulse when building with language models: if the output isn’t right, add more instructions. The model ignored your formatting rule, so you restate it. It went off-topic, so you add a constraint. Before long, your system prompt is 800 words of accumulated corrections, and the model is somehow worse than when you started.

This isn’t bad luck. It’s a predictable consequence of how these models process instructions. Understanding the mechanics makes the fix obvious.

1. Models Don’t Read Your Prompt the Way You Do

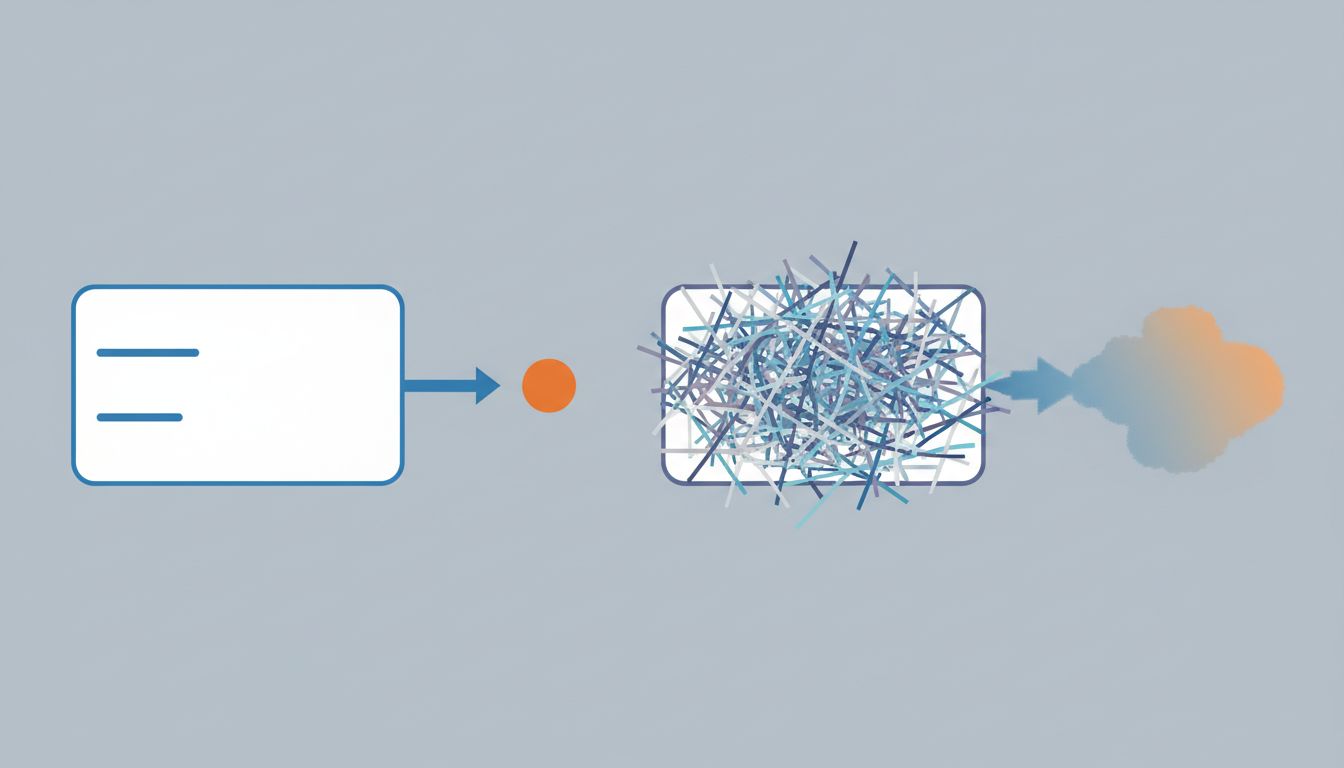

When you write a numbered list of rules, you imagine the model checking them off before responding. That’s not what happens. Transformer-based models process the entire context (system prompt, conversation history, user message) as one sequence of tokens, and attention weights determine how much each token draws on the others. Every part of the context can influence the output, but not equally and not linearly.

Long prompts dilute attention. Instructions buried in paragraph four compete with instructions in paragraph one, and the model doesn’t resolve conflicts the way a human editor would. It effectively blends them, so contradictory rules don’t cancel out cleanly; they produce fuzzy behavior. The prompt you write is not the prompt the model reads, and the gap between your mental model of an instruction and the model’s interpretation widens as the text gets longer.

2. Each New Rule Can Override a Previous One

System prompts grow by accretion. You add rule seven because you noticed a problem, but rule seven now silently conflicts with rule three. The model doesn’t flag this. It just produces output that satisfies neither.

This is especially bad with negations. “Do not discuss pricing” and “always be helpful and answer any question the user has” are both reasonable rules individually. Together, they create a contradiction the model resolves inconsistently. Sometimes it’ll answer pricing questions. Sometimes it’ll refuse everything adjacent to pricing. You won’t find a pattern because there isn’t a clean one, just a probabilistic blend of two competing objectives.

The practical test: if you can’t state your system prompt’s core purpose in two sentences, it probably contains internal contradictions. That’s not a hard threshold; it’s a diagnostic.

3. Instruction-Following Degrades Non-Linearly

Published evaluations of instruction following generally report that accuracy tends to drop as a prompt accumulates constraints, and the drop isn’t always gradual. In practice, models often handle a handful of constraints (roughly five to seven) reasonably well and then degrade sharply as you add more. The tenth constraint is much harder to follow reliably than the fifth, not because it’s more complex, but because the model is already straining to satisfy the others.

This has a counterintuitive implication: a system prompt with twelve rules will often produce outputs that violate more rules than a prompt with six. Adding a rule to fix a problem can make the overall compliance rate go down. You’re not debugging a system with clear logic; you’re adjusting a probability distribution, and it doesn’t behave like a checklist.
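A toy calculation makes the arithmetic concrete. The sketch below assumes, purely for illustration, that every rule is followed independently with the same probability, and that this per-rule probability falls once the rule count passes a threshold. The specific numbers (0.95, a threshold of 7, a 0.05 drop per extra rule) are invented for the example, not measured from any model.

```python
def per_rule_compliance(n_rules, base=0.95, threshold=7, penalty=0.05):
    """Hypothetical per-rule compliance: flat up to `threshold`, then falling."""
    extra = max(0, n_rules - threshold)
    return max(0.0, base - penalty * extra)


def expected_violations(n_rules, **kwargs):
    """Expected number of violated rules per response under the toy model."""
    return n_rules * (1.0 - per_rule_compliance(n_rules, **kwargs))


def all_rules_followed(n_rules, **kwargs):
    """Probability that every rule is satisfied, assuming independence."""
    return per_rule_compliance(n_rules, **kwargs) ** n_rules


# Compare a six-rule prompt with a twelve-rule prompt.
for n in (6, 12):
    print(n, round(expected_violations(n), 2), round(all_rules_followed(n), 3))
```

Under these made-up parameters, the twelve-rule prompt violates more rules in absolute terms than the six-rule one, and the chance of satisfying everything at once collapses. Real models won’t follow this curve exactly; the point is only that a falling per-rule rate multiplied by a growing rule count compounds quickly.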

4. Verbosity Signals Uncertainty to the Model

There’s a subtler problem: models are trained on human text, and in human text, over-explanation often signals uncertainty or defensive hedging. A confident legal brief is tight. A nervous one is padded. A confident manager’s email is four lines. A worried one is twelve.

When your system prompt is dense with qualifications and exceptions (“unless the user specifically asks,” “except in cases where,” “but only if”), you’re teaching the model to operate in a space where normal rules don’t quite apply. It learns to hedge. The outputs become more tentative and more generic, which is often exactly the problem you were trying to fix with the extra instructions.

Brief, declarative prompts model confident behavior. That’s not intuitive, but it holds.

5. The Real Fix Is Usually Upstream

Most long system prompts are a product of not knowing exactly what you want. Each rule is a patch on a problem that a clearer core objective would have prevented. “Don’t be too formal” and “don’t be too casual” are both signs you haven’t defined the actual tone you want. One concrete example of desired output often outperforms five negative constraints.

This maps to a general engineering principle: constraints are a downstream fix for unclear specifications. The better investment is in the specification. Write a single paragraph that describes who the model is, what it’s trying to accomplish, and what the user needs. Then test. You’ll usually find that a clear persona handles edge cases better than a list of rules covering every edge case you’ve thought of, because it generalizes.
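One way to keep yourself honest about that single paragraph is to give it a fixed shape in code. The sketch below is a minimal illustration: `PromptSpec` and its field names are hypothetical, and the accounting-app content is invented for the example.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the three parts of a core specification."""
    persona: str       # who the model is
    objective: str     # what it is trying to accomplish
    user_need: str     # what the user needs
    example: str = ""  # optional: one concrete example of desired output

    def render(self) -> str:
        # The three core sentences form one paragraph; the example follows it.
        parts = [f"{self.persona} {self.objective} {self.user_need}"]
        if self.example:
            parts.append("Example of a good reply:\n" + self.example)
        return "\n\n".join(parts)


# Invented content, for illustration only.
spec = PromptSpec(
    persona="You are a support assistant for a small accounting app.",
    objective="Your goal is to get the user unstuck in as few steps as possible.",
    user_need="Users are busy bookkeepers who want direct, plain-language answers.",
    example="To reopen a closed period, go to Settings, then Periods, then choose Reopen.",
)
system_prompt = spec.render()
print(system_prompt)
```

The structure has no slot for a rule list on purpose. If a new behavior doesn’t fit into the persona, the objective, the user need, or the example, that is a prompt to revisit the specification before appending a constraint.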

6. When Long Prompts Are Actually Justified

This isn’t a universal argument for minimal prompts. There are cases where length is legitimate: when you need to include reference data (product names, API specifications, a style guide), when you’re doing few-shot prompting with worked examples, or when you’re operating in a domain where precision genuinely requires extensive context.

The distinction is between instructions and information. Information-dense prompts can work well because the model uses that content as lookup material. Instruction-dense prompts degrade because the model has to satisfy all of them simultaneously. If your prompt is long because it contains a reference table, that’s fine. If it’s long because you’ve added seventeen behavioral rules, start cutting.
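The split between instructions and information can be made visible in how the prompt is assembled. This is a small sketch with a hypothetical `build_prompt` helper and invented product data; the section label is an arbitrary choice, not a required format.

```python
def build_prompt(instructions, reference):
    """Join a short instruction list with a labeled reference section."""
    lines = list(instructions)
    lines.append("")
    lines.append("Reference (look up as needed):")
    lines.extend(f"- {name}: {detail}" for name, detail in reference.items())
    return "\n".join(lines)


# Hypothetical content for illustration only.
instructions = [
    "You are a support assistant for a small accounting app.",
    "Answer using the product reference below.",
]
reference = {
    "Ledger Basic": "single user, monthly reports",
    "Ledger Team": "up to 10 users, shared approvals",
    "Ledger Pro": "unlimited users, audit export",
}

prompt = build_prompt(instructions, reference)
print(prompt)
print(len(instructions), "instruction lines,", len(reference), "reference lines")
```

Keeping the two lists separate means the reference section can grow with the product catalog while the instruction count stays easy to see at a glance.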

The test is simple: if you removed half the instructions, would the core behavior break, or would you just feel less safe? If it’s the latter, remove them. Your discomfort is not evidence that they’re doing anything.
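That removal test can be run mechanically rather than by gut feeling. The sketch below is a simplified variant: it drops one rule at a time instead of half at once, and it relies on a `passes_checks` function that you would have to supply yourself. Both `ablate_rules` and `passes_checks` are hypothetical names, and the evaluator shown is a stand-in that never calls a model.

```python
from typing import Callable, List


def ablate_rules(
    core: str,
    rules: List[str],
    passes_checks: Callable[[str], bool],
) -> List[str]:
    """Return the rules whose removal makes the prompt fail the checks.

    `passes_checks` takes a full system prompt and returns True if the core
    behavior still holds on your own test cases.
    """
    load_bearing = []
    for i, rule in enumerate(rules):
        remaining = rules[:i] + rules[i + 1:]
        prompt = "\n".join([core] + remaining)
        if not passes_checks(prompt):
            load_bearing.append(rule)
    return load_bearing


# Stand-in evaluator for demonstration only: it pretends the behavior
# depends on a single rule. A real one would query the model and grade
# its replies.
def fake_checks(prompt: str) -> bool:
    return "Reply in English." in prompt


rules = ["Reply in English.", "Be helpful.", "Stay professional."]
kept = ablate_rules("You are a support assistant.", rules, fake_checks)
print(kept)  # ['Reply in English.']
```

Rules that never show up in the result are candidates for deletion. Removing one rule at a time can miss interactions between rules, so it is worth re-running the checks once more on the final trimmed prompt.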