The simple version

A smaller AI model isn’t a worse AI model. In many real-world situations, it’s a better one.

The size obsession and why it exists

When AI labs announce a new model, the number that gets attention is parameter count. GPT-3 had 175 billion, more than a hundred times GPT-2’s 1.5 billion. Larger parameter counts signal capability, and for raw benchmark performance on academic tasks, that signal is often accurate. Bigger models trained on more data do tend to score better on standardized tests of reasoning and knowledge.

But benchmarks measure potential, not usefulness. A model that scores brilliantly on a reasoning benchmark but takes four seconds to respond, costs a dollar per thousand queries, and requires a server rack to run is not useful for most purposes. It’s impressive on paper and impractical in production.

The industry learned this lesson slowly, then all at once. The release of Meta’s LLaMA series in 2023 was a turning point: smaller open models that could run on consumer hardware demonstrated that capable AI didn’t require hyperscale infrastructure. Suddenly the conversation shifted from “how big can we go” to “how small can we get away with.”

What model compression actually does

Shrinking a model isn’t just deleting parameters and hoping for the best. There are several distinct techniques, each with its own tradeoffs.

Quantization reduces the numerical precision used to store each parameter. A model might store each weight as a 32-bit or 16-bit floating-point number. Quantization converts those to 8-bit integers, or even 4-bit. You lose some precision, but models are surprisingly robust to this. A well-quantized model running at 4-bit precision often performs nearly as well as the original, at one-eighth the memory footprint of 32-bit weights.
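To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is one simple scheme among many, not the method any particular library uses; the function names and the 7-billion-parameter figure are assumptions chosen for illustration.

```python
def quantize_int8(weights):
    """Map floats to signed 8-bit integers using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [v * scale for v in quantized]


def memory_bytes(num_params, bits_per_param):
    """Storage needed for the weights alone, ignoring any overhead."""
    return num_params * bits_per_param // 8


weights = [0.42, -1.30, 0.07, 0.91, -0.55]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, round(max_error, 5))

# Hypothetical 7-billion-parameter model: 32-bit vs 4-bit storage.
print(memory_bytes(7_000_000_000, 32) / 1e9, "GB at 32-bit")
print(memory_bytes(7_000_000_000, 4) / 1e9, "GB at 4-bit")
```

The point of the error bound is that every weight moves by at most half a step, which is why quality tends to degrade gently rather than collapse.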

Pruning removes parameters that contribute little to the model’s output. Neural networks are famously redundant, a feature that makes them trainable but also means many weights do almost nothing. Pruning identifies and removes those weights. Done carefully, it has minimal effect on output quality.
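The simplest form of this is magnitude pruning: treat the weights closest to zero as the ones doing the least, and zero them out. The sketch below assumes that heuristic and a flat list of weights; real pruning pipelines work on tensors and often use more careful importance measures. The function name is hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_indices = set(order[:num_to_prune])
    return [0.0 if i in pruned_indices else w for i, w in enumerate(weights)]


weights = [0.80, -0.02, 0.01, -0.65, 0.03, 0.40, -0.005, 0.30]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.0, -0.65, 0.0, 0.4, 0.0, 0.3]
```

Half the weights are gone, but the four that carried most of the magnitude are untouched.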

Knowledge distillation is more interesting. You train a smaller “student” model to mimic the behavior of a larger “teacher” model, rather than training on raw data directly. The student learns from the teacher’s outputs, which carry more signal than simple right-or-wrong labels. DistilBERT, Hugging Face’s distilled version of Google’s BERT, retained about 97% of BERT’s performance on the GLUE language-understanding benchmark while being 40% smaller and 60% faster.
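Here is a rough sketch of what “learning from the teacher’s outputs” can look like as a loss function. It uses the common soft-target formulation: soften both models’ output distributions with a temperature, then penalize the student for diverging from the teacher. The logits are made up, and this is not claimed to be the exact recipe DistilBERT used.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; higher temperature gives a flatter distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence from teacher to student over softened distributions, scaled by T^2."""
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(teacher_probs, student_probs) if p > 0)
    return kl * temperature ** 2


teacher_logits = [4.0, 2.5, 0.5]   # hypothetical teacher output for one example
close_student = [3.8, 2.4, 0.6]    # student that nearly agrees with the teacher
far_student = [0.5, 2.5, 4.0]      # student that ranks the classes backwards

print(distillation_loss(close_student, teacher_logits))
print(distillation_loss(far_student, teacher_logits))
```

Notice that the teacher’s distribution says the second class is plausible and the third is not. A hard label would only say “class one,” which is the extra signal the student would otherwise miss.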

The practical case for smaller

Consider what happens when you actually deploy a model into a product. Latency matters enormously. Users notice delays above a few hundred milliseconds, and in agentic systems where a model might be called dozens of times to complete a task, latency compounds fast. A model that responds in 200ms instead of 800ms doesn’t just feel better; it makes previously impractical workflows possible. (If you’ve seen the gap between benchmark speed and real-world speed, this analysis of why fast benchmarks produce slow apps explains exactly why.)
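The compounding is easy to see with a little arithmetic. The 200ms and 800ms figures come from the paragraph above; the count of 24 sequential calls is an assumed stand-in for “dozens.”

```python
def task_latency_ms(per_call_ms, num_calls):
    """Total wait when each model call has to finish before the next one starts."""
    return per_call_ms * num_calls


calls = 24  # hypothetical number of sequential calls in one agentic task

small_total = task_latency_ms(200, calls)
large_total = task_latency_ms(800, calls)

print(small_total / 1000, "seconds with the 200ms model")  # 4.8
print(large_total / 1000, "seconds with the 800ms model")  # 19.2
```

A 600ms gap per call is barely worth arguing about. A gap of more than fourteen seconds per task is the difference between a feature users wait for and one they abandon.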

Cost matters too. Inference at scale is not cheap. Every token generated costs money, and serving a large model to millions of users daily adds up quickly. Smaller models shift the math dramatically. They can also run on less expensive hardware, or on hardware that’s closer to the user, which reduces latency further.
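A back-of-the-envelope calculation shows how the math shifts. Every number below is hypothetical: the traffic, the tokens per request, and both prices are placeholders, so substitute your own figures.

```python
def daily_cost_usd(requests_per_day, tokens_per_request, usd_per_million_tokens):
    """Daily inference spend for a given traffic level and per-token price."""
    total_tokens = requests_per_day * tokens_per_request
    return total_tokens * usd_per_million_tokens / 1_000_000


# Hypothetical workload: 5 million requests a day, 400 generated tokens each.
large_model_cost = daily_cost_usd(5_000_000, 400, usd_per_million_tokens=10.00)
small_model_cost = daily_cost_usd(5_000_000, 400, usd_per_million_tokens=0.50)

print(large_model_cost)  # 20000.0
print(small_model_cost)  # 1000.0
```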

Then there’s the offline case. Many real applications need to run where there’s no reliable internet connection: medical devices, industrial sensors, mobile apps that need to work on a plane. A model small enough to fit on a phone or an embedded chip enables entirely different categories of products. Apple’s on-device models that power features in iOS do exactly this, running locally to preserve privacy and function without a network call.

The focus effect

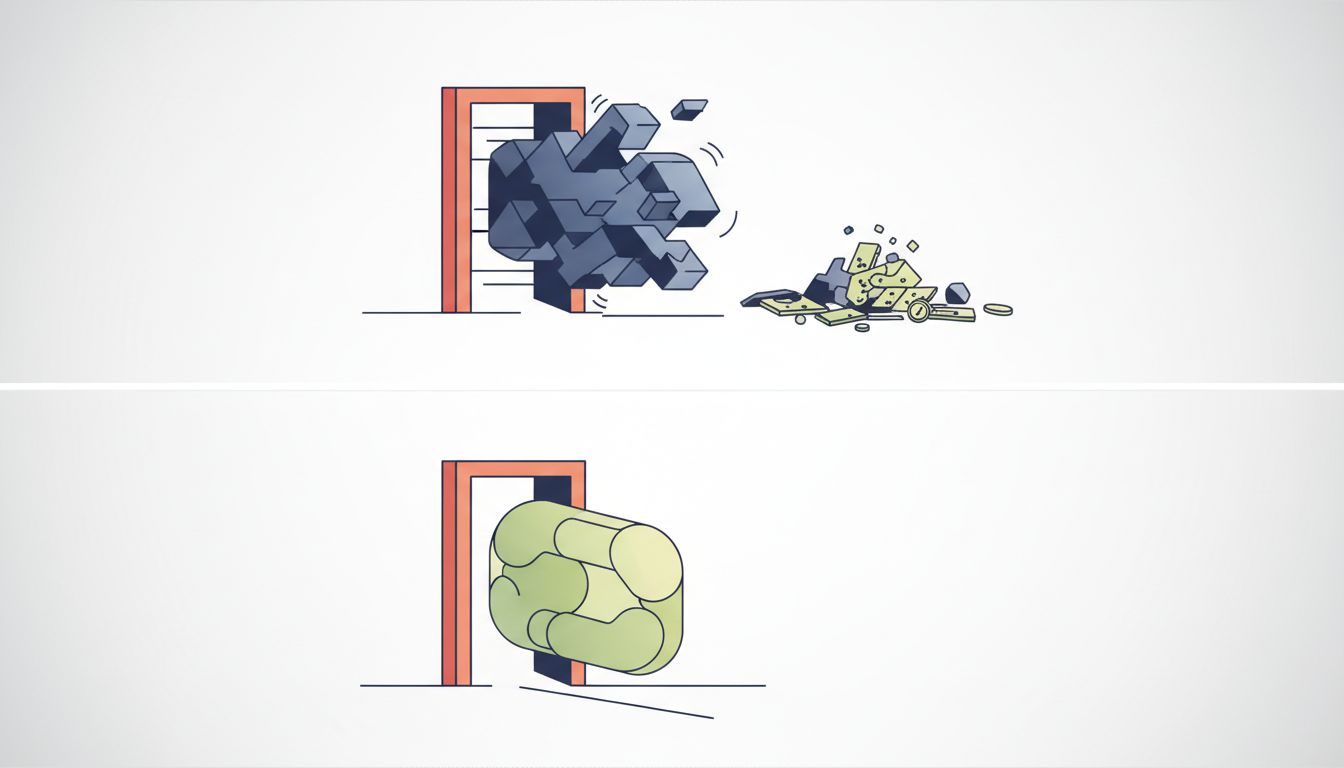

Here’s the part that surprises people: smaller models that are fine-tuned for specific tasks often outperform larger general-purpose models on those tasks.

A large general model has to be good at everything: writing poetry, debugging code, translating languages, answering trivia. That generality costs something. When you fine-tune a smaller model on a narrow domain, it stops wasting capacity on things it will never be asked to do. It’s the difference between a generalist consultant and someone who has spent ten years doing exactly one thing.

For most business applications, this is the right tradeoff. A company building a customer support tool doesn’t need the model to write sonnets. It needs the model to understand their product, handle common questions accurately, and escalate edge cases. A small, fine-tuned model can do this better than a large general one, and it will do it faster and cheaper.

This connects to a broader point about AI deployment that often gets missed. Getting a model into production is where the real complexity lives, and model size is one of the first decisions that shapes every constraint downstream.

What you should take away

Size is a starting point for capability, not a measure of usefulness. The right model for any given task is the smallest one that does the job well enough, not the largest one available.

This isn’t settling for less. It’s making an engineering decision instead of a marketing one. The companies building AI into real products have largely figured this out already. They’re not running GPT-4-scale models for every query. They’re running hierarchies of models, using smaller ones for common cases and routing to larger ones only when necessary.
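A model hierarchy like that can be sketched as a simple cascade: ask the smallest model first and escalate only when it isn’t confident. Everything here is illustrative. The `ModelTier` class, the confidence scores, and the stub models are assumptions, and real systems use many different routing signals.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ModelTier:
    name: str
    # Returns (answer, confidence between 0 and 1). Hypothetical interface.
    generate: Callable[[str], Tuple[str, float]]


def route(query: str, tiers: List[ModelTier], min_confidence: float = 0.8) -> Tuple[str, str]:
    """Try tiers from smallest to largest; return (tier name, answer)."""
    if not tiers:
        raise ValueError("at least one tier is required")
    answer = ""
    for tier in tiers:
        answer, confidence = tier.generate(query)
        if confidence >= min_confidence:
            return tier.name, answer
    # No tier was confident enough: keep the largest tier's answer.
    return tiers[-1].name, answer


def small_model(query: str) -> Tuple[str, float]:
    # Stub: pretend the small model is only confident on short queries.
    confidence = 0.9 if len(query.split()) <= 6 else 0.3
    return f"small:{query}", confidence


def large_model(query: str) -> Tuple[str, float]:
    # Stub: pretend the large model is always confident.
    return f"large:{query}", 0.95


tiers = [ModelTier("small", small_model), ModelTier("large", large_model)]

print(route("reset my password", tiers))
print(route("compare the refund terms across all three of my active contracts", tiers))
```

The common case never touches the expensive model, so its cost and latency are only paid on the queries that need it.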

The benchmark leaderboard tells you what a model can do in ideal conditions. What matters is what it does in your conditions, at your volume, on your budget, at the latency your users will actually tolerate. On those measures, smaller models win more often than the headlines suggest.