In 2017, Discord was growing faster than its database could handle. The company had chosen Cassandra, a distributed NoSQL database built for scale, to store user messages. On paper, this was a reasonable choice. Cassandra was battle-tested at companies like Netflix and Apple. It could handle writes at enormous throughput and scale horizontally by adding nodes.

Then Discord’s engineers started looking at their read latency numbers.

Cassandra’s read performance was degrading in ways that seemed counterintuitive. More data meant slower reads, even for recent messages. The culprit was something called “tombstones,” Cassandra’s mechanism for handling deletions. When you delete data in Cassandra, it doesn’t remove it immediately. It writes a marker, a tombstone, that says “this data is gone.” The actual removal happens later during a background process called compaction. Until compaction runs, every read has to scan past tombstones to find live data.

Discord’s message deletion patterns, combined with message edits and channel deletions, were generating tombstones faster than compaction could clear them. A read for recent messages in a popular channel might scan through thousands of tombstones to return a few hundred live rows. Latency spiked. The p99 numbers, the worst-case reads that the slowest 1% of requests experienced, became genuinely painful.

The team documented this publicly in a 2017 engineering blog post that became required reading for anyone building on Cassandra at scale. Their conclusion: Cassandra was the wrong tool for their specific read patterns, and the problem was architectural, not configurable.

This is where the story gets interesting, because Discord’s solution wasn’t to find a database that remembered everything perfectly. It was to build a system that deliberately structured what it forgot, and when.

The team eventually migrated to ScyllaDB (a Cassandra-compatible database written in C++ rather than Java, with better performance characteristics for their workload), but the deeper lesson wasn’t about which database to choose. It was about the relationship between forgetting and speed.

Every high-performance database makes explicit tradeoffs about durability, immediacy, and completeness of memory. Redis, one of the most widely used caching layers in production systems, is fast precisely because it keeps data in RAM and optionally persists it to disk. It can be configured to lose some writes deliberately, accepting data loss in exchange for throughput. The database isn’t broken when it does this. It’s doing exactly what you asked.

Cassandra’s tombstone problem is a version of the same principle seen from the other side. The database was remembering too much, for too long, in a way that made retrieval expensive. The “forgetting” (compaction) wasn’t happening fast enough, and that incomplete forgetting was more expensive than total ignorance would have been.

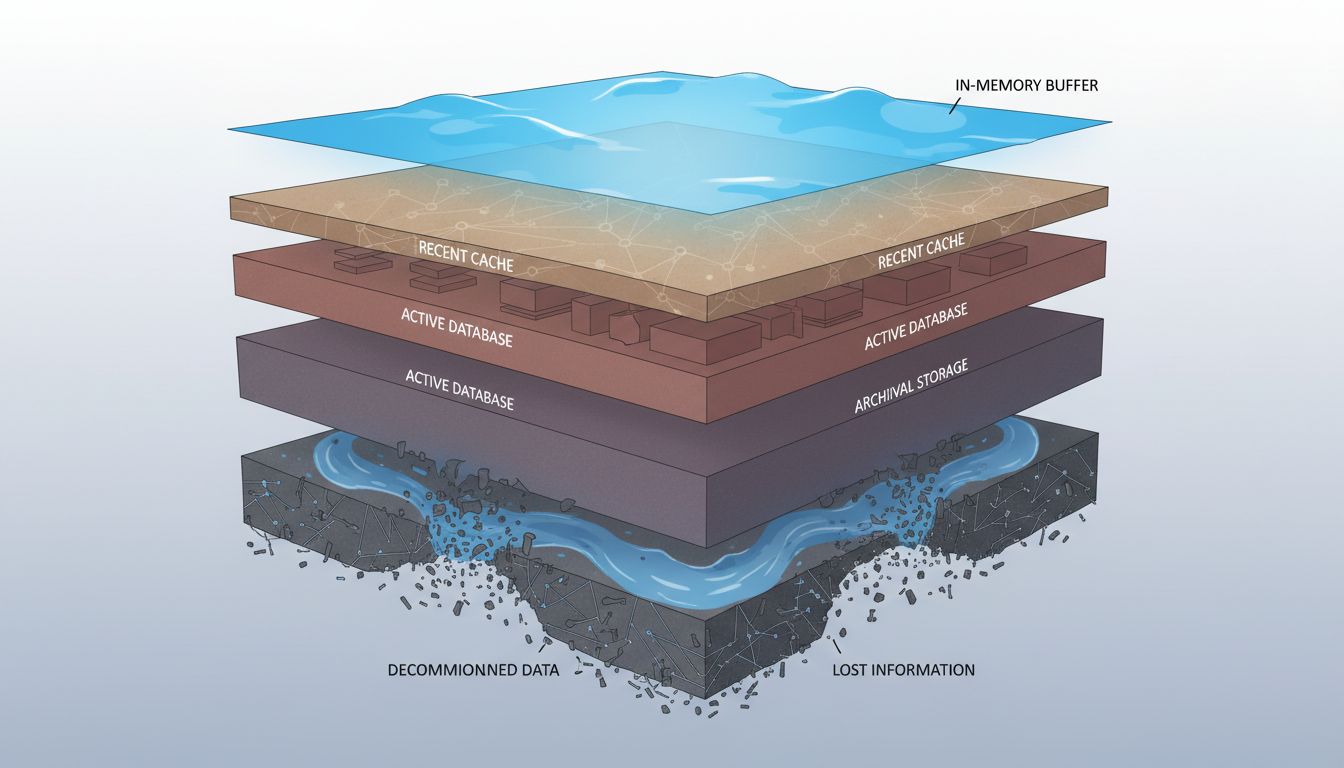

LSM trees, the data structure underlying Cassandra, ScyllaDB, RocksDB, and several other databases, make this explicit. LSM stands for Log-Structured Merge-tree. Writes go into an in-memory buffer first, then flush to immutable files on disk. Reads have to check multiple layers: the in-memory buffer, recent disk files, older disk files. Over time, background compaction merges these layers and discards obsolete data. The database is always in the process of organized forgetting, and the performance characteristics you see depend heavily on where in that cycle you are.

Facebook built RocksDB (an LSM-based storage engine, now widely used as the foundation for databases like CockroachDB and TiDB) specifically because they needed to tune these tradeoffs at enormous scale. The compaction strategies, bloom filter configurations, and memory allocation decisions in RocksDB are all different ways of answering the same question: what should we remember, at what fidelity, and for how long?

The Discord story extracted well because it had a clear villain (tombstones), a clear symptom (p99 latency), and a concrete architectural decision. But the underlying pattern shows up everywhere in database engineering, and it’s worth naming directly.

Every caching layer in your stack is a deliberate forgetting machine. Memcached evicts the least-recently-used data when it fills up. CDN edge nodes drop cached assets based on TTL and storage pressure. Browser caches expire. The entire purpose of a cache is to remember the things you’ll probably need and forget the things you probably won’t. Getting this wrong in either direction costs you: too aggressive forgetting means expensive cache misses; too conservative forgetting means stale data and memory pressure.

Time-series databases like InfluxDB and TimescaleDB handle forgetting as a first-class feature. Retention policies tell the database to automatically drop data older than a defined window. Your monitoring system doesn’t need per-second CPU metrics from three years ago. Keeping them would bloat storage, slow queries, and add cost with no meaningful benefit. The forgetting is the feature.

Event streaming systems like Kafka operate similarly. Kafka retains messages for a configurable period (often 7 days by default), then discards them. Consumers are expected to process messages before they age out. The system doesn’t promise to remember everything forever, and that’s precisely why it can handle millions of events per second at low latency.

What Discord’s 2017 problem illustrated, and what these other examples confirm, is that database performance isn’t really about how much you can remember. It’s about how cleanly you can forget. The fastest systems are the ones that have made deliberate, explicit decisions about what gets discarded, when, and by what mechanism. The slow ones are often systems that accumulated data without a forgetting strategy, or whose forgetting mechanism (like Cassandra’s compaction) got overwhelmed by the workload it was supposed to handle.

For engineers building systems today, the practical implication is this: before you choose a database or design a schema, think about your deletion and expiration patterns as carefully as you think about your write and read patterns. Ask what happens when tombstones accumulate. Ask what your compaction strategy is. Ask whether you have a retention policy or whether you’re implicitly promising to remember everything forever.

That implicit promise is the one that tends to break first.