Every sprint planning meeting contains at least one moment where someone says “that’s basically done” and nobody challenges it. That word, “basically,” is doing enormous structural work. It’s carrying unresolved disagreement about testing coverage, documentation, stakeholder sign-off, monitoring, and at least three other things the team hasn’t explicitly negotiated. The definition of done is one of those concepts that sounds solved until you look at what actually ships.

1. “Done” Is Not a Universal State. It’s a Contract Between Roles.

A developer saying a feature is done means something different from a QA engineer saying it, which means something different from a product manager saying it. The developer usually means: the code does what the ticket described, it’s reviewed, and it’s merged. The QA engineer means: it behaves correctly across the test matrix, edge cases included. The PM means: it solves the problem we intended to solve and the stakeholders have seen it. All three definitions are valid. None of them is complete on its own.

The failure mode is when teams assume these definitions are aligned because nobody has bothered to write the contract down. Agile frameworks, Scrum in particular, formalized the concept of a “Definition of Done” (DoD) precisely because this misalignment is so common and so costly. But a DoD written once during team setup and never revisited becomes a relic. The real question is whether your current DoD reflects your current system, including the deployment infrastructure, the monitoring stack, and the actual users.

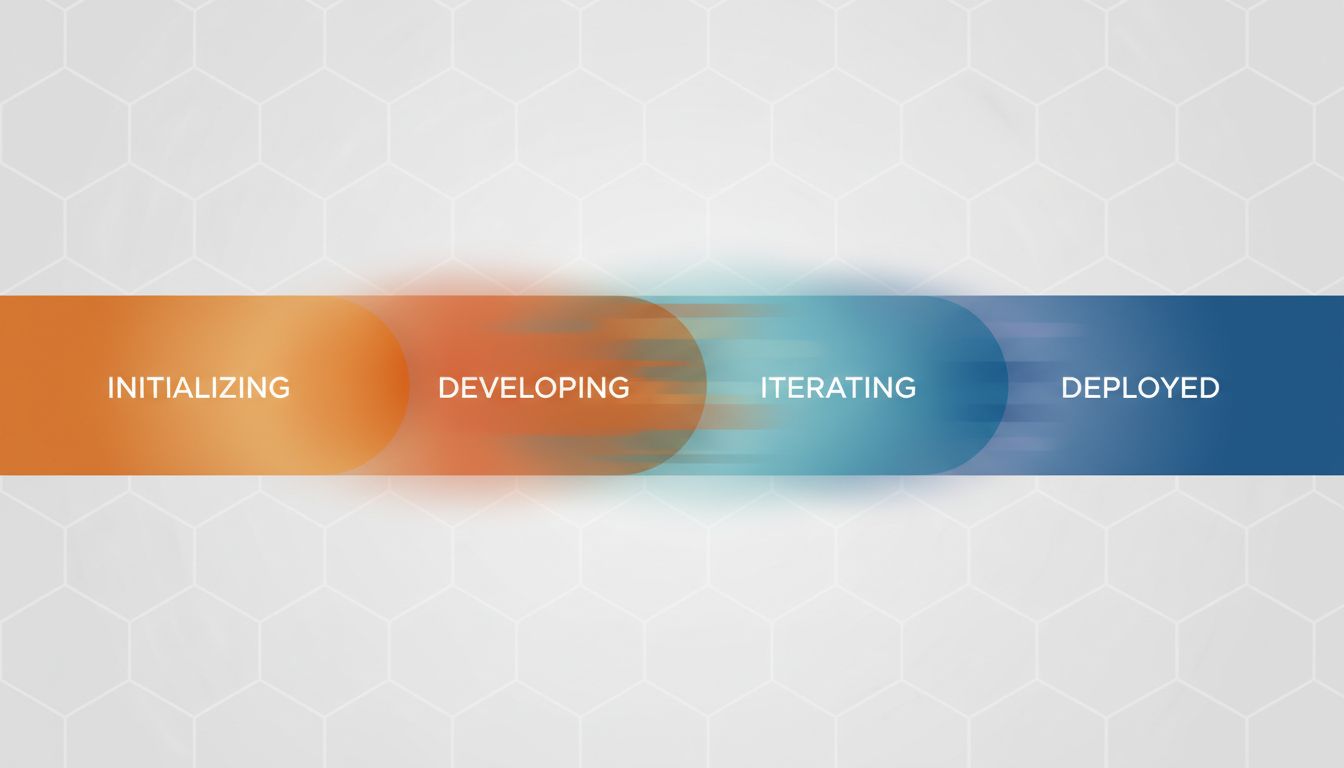

2. The Line Between Done and “Good Enough to Ship” Moves Constantly.

High-output teams ship faster by consciously distinguishing between done and shippable. These are related but not identical. A feature can be shippable, meaning it won’t break production and provides user value, without being done in a fuller sense: the technical debt is real, the edge cases are acknowledged but unhandled, and the documentation is a stub. Teams that operate at high velocity often build a second queue for “post-ship cleanup” that they intend to address in the next cycle.

The problem is that this queue has a half-life. Deferred cleanup tends to get re-deferred, not because engineers don’t care but because the next sprint already has committed work. If your team has a “done but needs cleanup” category, count how many tickets in that category are older than two sprints. That number tells you whether your definition of done is aspirational or operational.
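The two-sprint check is simple enough to script against a tracker export. The sketch below is illustrative only: the `Ticket` shape, the `needs-cleanup` label, and the two-week sprint length are all assumptions, not fields of any particular tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical ticket shape; real trackers expose similar fields under other names.
@dataclass
class Ticket:
    key: str
    label: str
    created: date

CLEANUP_LABEL = "needs-cleanup"      # assumed label for the post-ship cleanup queue
SPRINT_LENGTH = timedelta(days=14)   # assumed two-week sprints

def stale_cleanup_tickets(tickets, today, sprints=2):
    """Return cleanup tickets created more than `sprints` sprints before `today`."""
    cutoff = today - sprints * SPRINT_LENGTH
    return [t for t in tickets if t.label == CLEANUP_LABEL and t.created < cutoff]

backlog = [
    Ticket("APP-101", "needs-cleanup", date(2024, 1, 2)),
    Ticket("APP-140", "needs-cleanup", date(2024, 2, 20)),
    Ticket("APP-141", "feature", date(2024, 1, 5)),
]
stale = stale_cleanup_tickets(backlog, today=date(2024, 3, 1))
print(len(stale), [t.key for t in stale])  # prints: 1 ['APP-101']
```

If that count keeps growing sprint over sprint, the cleanup queue is functioning as an archive rather than a plan.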

3. Distributed Teams Have Structurally Harder Definitions of Done.

When everyone is in the same room, a lot of implicit coordination happens for free. The developer overhears the QA engineer finding a bug. The PM walks over to the designer and re-scopes the acceptance criteria on the spot. In distributed teams, none of that ambient information transfer occurs. The definition of done has to carry more weight because it’s doing the work that physical proximity used to do quietly in the background.

This isn’t an argument against distributed work. It’s an argument that distributed teams need to invest more deliberately in explicit shared agreements. The teams that handle this well tend to treat their DoD like a living document with versioning, not a sticky note on a Confluence page. They also tend to assign ownership: someone specific is responsible for calling a ticket done, and that person’s sign-off has a concrete meaning tied to a checklist that others can audit.
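One way to make “versioned, owned, and auditable” concrete is to record which DoD version each sign-off was made against and which items the owner actually checked. This is a minimal sketch under assumed names (`DefinitionOfDone`, `SignOff`, and `audit` are hypothetical, not part of any tool):

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class DefinitionOfDone:
    version: str              # bumped whenever the team changes the checklist
    items: tuple[str, ...]    # the checklist every ticket must clear

@dataclass
class SignOff:
    ticket: str
    owner: str                # the one person accountable for calling it done
    dod_version: str          # the DoD version the owner signed against
    checked: dict[str, bool] = field(default_factory=dict)

def audit(sign_off: SignOff, dod: DefinitionOfDone) -> list[str]:
    """Return the problems a teammate would raise when auditing this sign-off."""
    problems = []
    if sign_off.dod_version != dod.version:
        problems.append(f"signed against DoD {sign_off.dod_version}, current is {dod.version}")
    for item in dod.items:
        if not sign_off.checked.get(item, False):
            problems.append(f"unchecked: {item}")
    return problems

dod = DefinitionOfDone("1.3", ("code reviewed", "edge-case tests", "alerts configured"))
sign_off = SignOff(
    ticket="APP-101",
    owner="alice",
    dod_version="1.3",
    checked={"code reviewed": True, "edge-case tests": True},
)
print(audit(sign_off, dod))  # prints: ['unchecked: alerts configured']
```

Recording the version alongside the sign-off means that when the checklist changes, older sign-offs stay interpretable instead of silently meaning something different.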

4. Acceptance Criteria and Definition of Done Are Not the Same Thing.

This conflation causes real damage. Acceptance criteria (AC) describe what a specific ticket or feature needs to do to satisfy the requirements. The Definition of Done describes what any work item needs to clear before it can be considered complete as a piece of engineering output. AC is per-ticket. DoD is per-team.

A ticket can pass all its acceptance criteria and still not meet the DoD. The feature works as specified, but there’s no logging, no error monitoring, no documentation, and the tests cover the happy path but nothing else. The AC said nothing about those things. The DoD should have. When teams collapse these two concepts, they usually end up with AC that balloons to cover everything, which makes tickets expensive to write and creates inconsistency across the backlog.
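The per-ticket versus per-team split can be modeled directly, which also shows how a ticket ends up “working as specified” without being done. The checklist items and ticket contents below are hypothetical examples, not a recommended DoD:

```python
from dataclasses import dataclass, field

# Per-team Definition of Done: applies to every ticket. Items are illustrative.
TEAM_DOD = ("structured logging", "error monitoring", "docs updated", "edge-case tests")

@dataclass
class Ticket:
    key: str
    # Per-ticket acceptance criteria: does the feature do what was asked?
    acceptance_criteria: dict[str, bool]
    # Results of the team-wide DoD checks for this ticket.
    dod_checks: dict[str, bool] = field(default_factory=dict)

    def meets_ac(self) -> bool:
        return all(self.acceptance_criteria.values())

    def meets_dod(self) -> bool:
        # Any DoD item not recorded for this ticket counts as not done.
        return all(self.dod_checks.get(item, False) for item in TEAM_DOD)

    def status(self) -> str:
        if self.meets_ac() and self.meets_dod():
            return "done"
        if self.meets_ac():
            return "works as specified, not done"
        return "in progress"

t = Ticket(
    "APP-207",
    acceptance_criteria={"user can export CSV": True, "export includes headers": True},
    dod_checks={"edge-case tests": False},
)
print(t.status())  # prints: works as specified, not done
```

Keeping the DoD in one shared constant, rather than copying its items into each ticket’s acceptance criteria, is what keeps tickets short and the bar consistent across the backlog.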

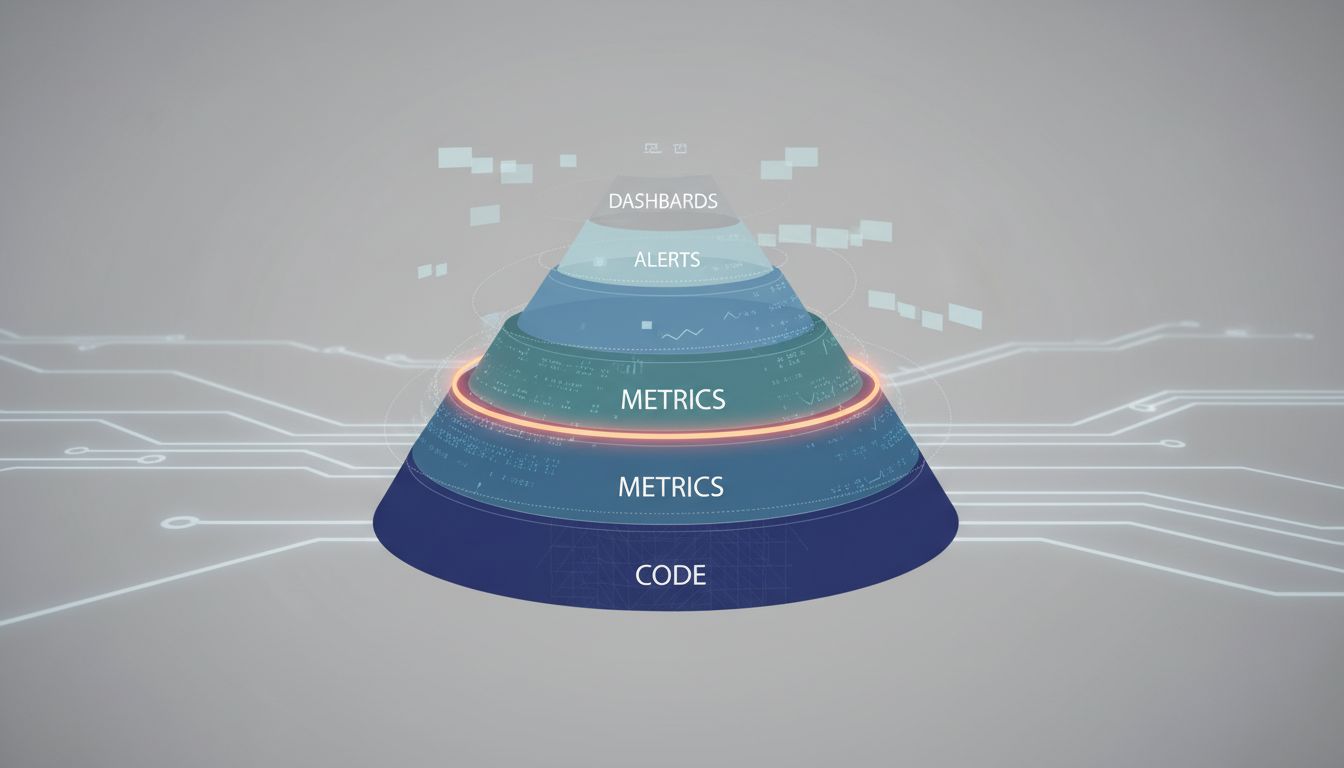

5. Monitoring and Observability Belong Inside the Definition of Done.

This is one of the most commonly missing items. A feature that works at merge time but has no instrumentation in production is not done in any meaningful operational sense. You can’t tell if it’s working. You can’t tell when it breaks. You can’t tell if anyone is using it.

Some teams treat observability as an infrastructure concern separate from feature work. That separation made more sense when logging and monitoring were genuinely hard to add. With modern tooling, adding a structured log line, a metric emission, or an error alert is a small addition to the ticket scope. Teams that build this into their DoD ship things they can actually reason about later. Teams that skip it end up doing forensic archaeology every time something goes wrong in production, which is a much worse use of senior engineering time.
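To give a sense of how small that addition can be, here is a sketch using only Python’s standard `logging` and `json` modules. The `checkout` logger name, the discount rule, and the in-process `Counter` are assumptions for illustration; the counter stands in for whatever metrics client a real stack uses.

```python
import json
import logging
from collections import Counter

logger = logging.getLogger("checkout")  # hypothetical feature/logger name
METRICS = Counter()  # stand-in for a real metrics client (assumption)

def log_event(event, level=logging.INFO, **fields):
    """Emit one structured JSON log line and bump a counter for the same event."""
    METRICS[event] += 1
    logger.log(level, json.dumps({"event": event, **fields}, sort_keys=True))

def apply_discount(order_total, code):
    # Hypothetical business rule: only one valid code, worth 10% off.
    if code != "SAVE10":
        # ERROR-level lines are what an alerting rule would typically watch.
        log_event("discount_rejected", level=logging.ERROR, code=code)
        return order_total
    result = round(order_total * 0.9, 2)
    log_event("discount_applied", code=code, total=result)
    return result

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
apply_discount(100.0, "SAVE10")
apply_discount(100.0, "BOGUS")
print(dict(METRICS))  # prints: {'discount_applied': 1, 'discount_rejected': 1}
```

The three pieces map onto the three questions above: the counter shows whether anyone is using the feature, the structured lines show whether it is working, and the ERROR level gives an alert rule something to fire on when it breaks.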

6. The Definition of Done Is a Mirror for Team Maturity.

Look at what a team includes in their DoD and you can roughly infer where they’ve been burned before. Teams that include rollback plans have shipped something that couldn’t be rolled back cleanly. Teams that require performance benchmarks have shipped something that degraded under load. Teams that require security review have had a vulnerability slip through. The DoD is a scar map of lessons learned.

This isn’t a criticism. It’s actually a healthy signal. A DoD that grows over time means the team is integrating experience into process. The risk is that it becomes so long that it turns into a compliance exercise rather than a genuine quality gate. The best teams periodically audit their DoD the same way they audit their codebase: looking for items that are redundant, outdated, or never actually checked in practice. A definition of done that nobody enforces is worse than a shorter one that everyone respects, because it creates the illusion of rigor without the substance.
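The “never actually checked in practice” part of that audit can be driven by data if sign-offs are recorded per item. A minimal sketch, assuming sign-offs are stored as item-to-boolean mappings; `audit_dod` and the 50% threshold are hypothetical choices, not an established rule:

```python
from collections import Counter

def audit_dod(dod_items, sign_offs, min_rate=0.5):
    """Flag DoD items that were checked in fewer than `min_rate` of recorded sign-offs."""
    if not sign_offs:
        # With no history, nothing has been verified in practice.
        return list(dod_items)
    checked = Counter(item for s in sign_offs for item, ok in s.items() if ok)
    return [item for item in dod_items if checked[item] / len(sign_offs) < min_rate]

dod_items = ["code reviewed", "rollback plan", "perf benchmark"]
history = [
    {"code reviewed": True, "rollback plan": True},
    {"code reviewed": True},
    {"code reviewed": True, "perf benchmark": False},
]
print(audit_dod(dod_items, history))  # prints: ['rollback plan', 'perf benchmark']
```

A flagged item is a prompt for discussion rather than automatic deletion: the team either starts enforcing it or removes it, so the list stays one that everyone respects.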

Done is not a moment. It’s a negotiated standard, and the teams that ship reliably are the ones who’ve done the work of making that negotiation explicit.