Tony Hoare invented null references in 1965 while designing ALGOL W. In a 2009 conference talk, he called it his “billion-dollar mistake,” estimating the cumulative cost of null-related crashes, vulnerabilities, and debugging hours across the industry. He was almost certainly underestimating.

The remarkable thing is not that a smart person made a regrettable design decision in 1965. The remarkable thing is that we have spent six decades building better tools, articulating cleaner abstractions, and then largely choosing not to use them.

What Null Actually Is (and Why It’s Broken)

Null is not a value. That’s the root of the problem. It’s an absence masquerading as a value inside a type system that was designed for values. When you declare a variable as a String, you mean a string. Null lets that variable hold something that is emphatically not a string, without the type system objecting, until runtime, when your program crashes.

The NullPointerException is so common in Java that developers have a reflex for it. The same concept appears as NullReferenceException in C#, nil pointer dereferences in Go, nil silently swallowing messages in Objective-C, undefined bleeding through in JavaScript, and None mishandled in Python. Every major language inherited or reinvented the same footgun, because the semantics felt natural to designers working close to the metal, where a pointer to nothing is a perfectly sensible hardware concept.

The problem is that software engineering is not hardware. Representing “this field has no value” and “this pointer is invalid” as the same thing collapses two distinct semantic situations into one, and then hides both inside the type of whatever variable they’re assigned to. The type system stops telling the truth.

The Fix Has Existed for Decades

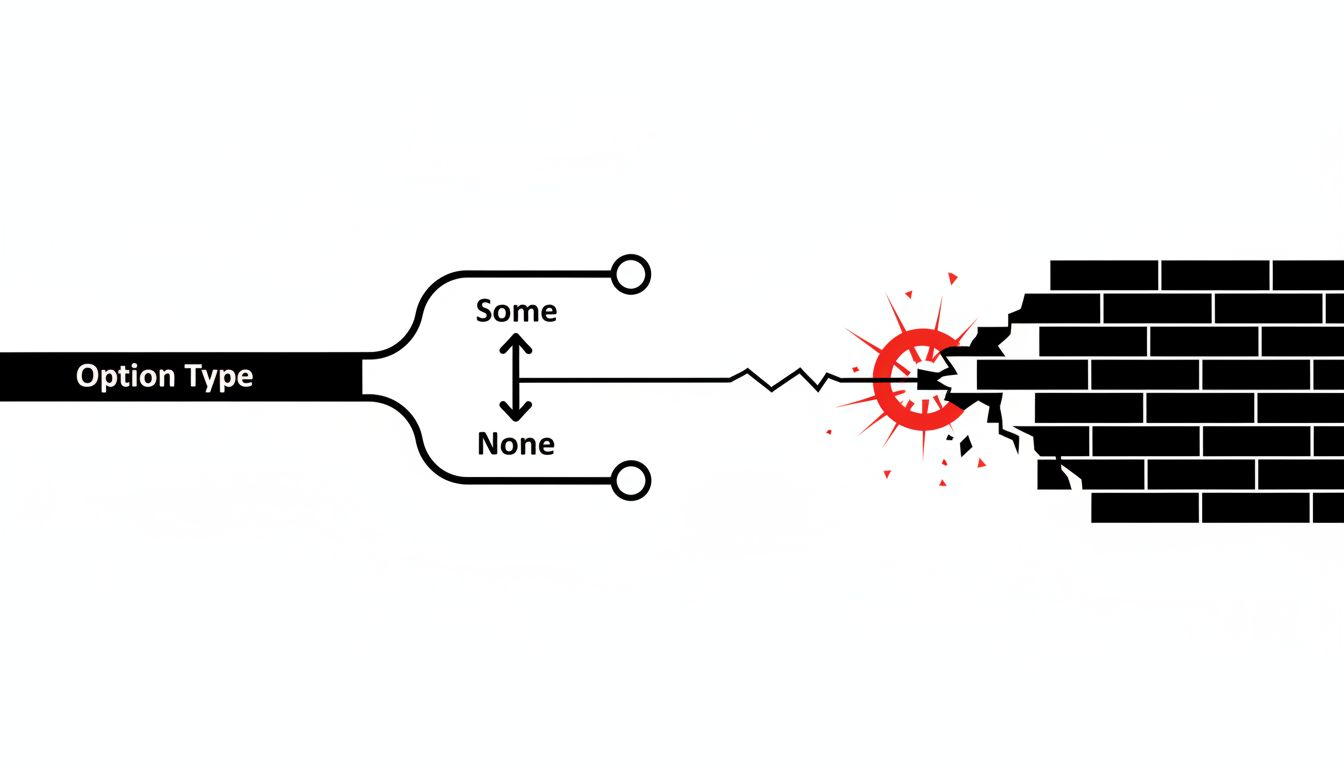

ML, the functional language developed at Edinburgh in the 1970s, introduced the option type. You don’t have a String. You have a String option, which forces you to handle both cases: present and absent. The type system makes absence explicit and mandatory to address. You cannot accidentally pass a potentially-absent value into a function that expects a definite one, because they’re different types.
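A minimal sketch of that distinction, written in Rust rather than ML because Rust's Option<String> plays the same role as ML's String option. The function names and the lookup data are hypothetical, chosen only for illustration.

```rust
/// Hypothetical lookup: a user may or may not have set a nickname.
/// The return type says so; callers cannot mistake it for a plain String.
fn find_nickname(user_id: u32) -> Option<String> {
    match user_id {
        1 => Some(String::from("ada")),
        _ => None, // absence is an ordinary, typed outcome
    }
}

/// Expects a definite string. Passing an Option<String> here is a type error.
fn shout(name: &str) -> String {
    name.to_uppercase()
}

/// The caller must handle both cases before it can reach `shout`.
fn greeting(user_id: u32) -> String {
    match find_nickname(user_id) {
        Some(nick) => format!("HELLO, {}", shout(&nick)),
        None => String::from("HELLO, STRANGER"),
    }
}
```

Writing shout(find_nickname(1)) directly is rejected by the compiler with a mismatched-types error, which is the whole point: the potentially-absent value and the definite one are different types, so the check cannot be forgotten.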

Haskell has Maybe. Rust has Option. Swift, released in 2014, made optionals a core feature and made null-safety enforcement the default. Kotlin, which Google officially supported on Android in 2017 and named its preferred Android language in 2019, distinguishes nullable from non-nullable types. Even TypeScript, bolted on top of JavaScript’s chaotic type landscape, added strictNullChecks in 2016 to catch the worst offenses.

The knowledge has been there, codified and battle-tested, since before most working engineers were born. The tools followed. Adoption lagged badly.

Why We Keep Ignoring the Lesson

Three forces keep null alive long after it should have been retired.

The first is legacy. The world runs on C, Java, C++, and Python. Hundreds of billions of lines of code carry null semantics baked into every interface, every database schema, every API contract. SQL itself has nullable columns as a first-class feature, and the three-valued logic that results (true, false, and null as a third truth state) has been confusing developers and producing subtle bugs for fifty years. You don’t refactor that out. You manage it.

The second is friction. Null-safe type systems require discipline at the boundary. When you call code you don’t control, whether a library, an API, or a database, you must decide: can this be absent? Representing that decision explicitly takes slightly more keystrokes and forces more thinking upfront. Most organizations optimize for shipping over correctness, which means the slightly harder thing consistently loses to the slightly easier thing. The compiler warning you keep ignoring is right, but ignoring it is faster today.

The third is familiarity. Null-checking with if (x != null) is something every developer learns early and patterns around automatically. The Maybe monad, or even just Kotlin’s ?. safe-call operator, requires learning a new mental model. That’s not a high bar, but it is a bar, and in environments where hiring is constant and codebases are read by engineers with varying backgrounds, “familiar” has genuine organizational value that gets treated as a technical argument when it’s really a management preference.

The Cost Is Not Theoretical

In 2014, Apple’s SSL/TLS library shipped the “goto fail” vulnerability, which allowed man-in-the-middle attacks on iOS and macOS devices. The bug was not itself a null dereference: it was a duplicated goto statement that bypassed signature verification. But while the proximate cause was a control flow error, the broader pattern, code paths that can silently fail to execute, is structurally related to the reasoning that produces null bugs. The underlying assumption, that absence can be handled with a defensive check rather than made unrepresentable at the type level, ran through the codebase.

NASA’s coding standards for safety-critical C code prohibit dynamic memory allocation after initialization and mandate strict rules around pointer use, essentially building null-safety conventions on top of a language that provides none. When the cost of a null dereference is a spacecraft or a patient monitor, organizations invent the discipline that the language won’t enforce.

For ordinary commercial software the cost is softer but constant: debugging sessions chasing NPEs in production logs, defensive null checks scattered through codebases like sandbags around a house with a leaky roof. Security researchers have catalogued null dereferences as a persistent class of memory-safety vulnerability, present in the Common Weakness Enumeration as CWE-476, recurring in CVE databases year after year.

What Modern Languages Actually Got Right

Rust’s approach is instructive because it treats the problem structurally rather than stylistically. There is no null in safe Rust. There is Option<T>. A reference can never be null because the type system does not permit the concept; nullable raw pointers exist, but dereferencing one requires an explicit unsafe block. If a value might be absent, the type must reflect that. The compiler enforces handling of the absent case before code will compile. This is not a lint warning. It is not a runtime check. It is a compile-time invariant.

The result is that an entire class of bugs becomes literally unrepresentable. You can still write incorrect code. You can unwrap an Option carelessly and panic at runtime. But you have to do that explicitly, and the explicit act of writing .unwrap() is a visible decision that code review can catch and that experienced engineers recognize as a smell.
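To make the contrast concrete, here is a small sketch. The helper parse_port and the default of 8080 are hypothetical names and values, not taken from any real codebase.

```rust
/// Hypothetical helper: parse a TCP port from text, or report absence.
fn parse_port(text: &str) -> Option<u16> {
    text.trim().parse::<u16>().ok()
}

/// Handles the absent case explicitly by falling back to a default.
fn port_or_default(text: &str) -> u16 {
    parse_port(text).unwrap_or(8080)
}

/// Compiles, but panics at runtime when `text` is not a valid port.
/// The `.unwrap()` is the visible decision a reviewer can question.
fn port_or_panic(text: &str) -> u16 {
    parse_port(text).unwrap()
}
```

Both callers compile, but only one of them can crash, and the crash site is spelled out in the source. A search for .unwrap() finds every place where someone chose to assume presence.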

Swift took a softer path, adding optionals and optional chaining but allowing forced unwrapping with !, which essentially restores null’s runtime crash behavior on demand. This was a reasonable tradeoff for a language replacing Objective-C in a large existing ecosystem, but it means Swift code varies wildly in null-safety depending on the team’s habits. The language provides the tools. Culture decides whether they’re used.

Kotlin made a similar bet for similar reasons. The !! operator exists. Production Android codebases contain it. The type system improved the baseline significantly while preserving an escape hatch that developers reliably discover and overuse.

The Database Problem Nobody Wants to Solve

Application-layer null safety is achievable. You can migrate a Python codebase to stricter typing. You can adopt Kotlin. You can write Rust. The database is harder.

Relational databases store the world’s most critical business data, and nearly every schema in existence uses nullable columns freely. The three-valued logic of SQL (where NULL = NULL evaluates to NULL rather than true) has been a source of subtle query bugs since the 1970s. NOT IN subqueries silently return empty results when the subquery contains nulls. Aggregate functions ignore nulls in ways that produce statistically misleading results. GROUP BY treats nulls as a single group, which is at least consistent, but whether that matches what any particular analyst intended is usually unknowable.
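These rules can be modeled without a database. The sketch below is an illustrative model of SQL's three-valued logic in Rust, not a real query engine: Option<bool> stands in for a SQL truth value, with None as NULL (unknown), and all function names are hypothetical.

```rust
/// A SQL truth value: Some(true), Some(false), or None for NULL/unknown.
type Truth = Option<bool>;

/// SQL `a = b`: unknown if either side is NULL, even when both are.
fn sql_eq(a: Option<i64>, b: Option<i64>) -> Truth {
    match (a, b) {
        (Some(x), Some(y)) => Some(x == y),
        _ => None,
    }
}

/// SQL `OR`: true wins, then unknown, then false.
fn sql_or(a: Truth, b: Truth) -> Truth {
    match (a, b) {
        (Some(true), _) | (_, Some(true)) => Some(true),
        (None, _) | (_, None) => None,
        _ => Some(false),
    }
}

/// SQL `NOT`: unknown stays unknown.
fn sql_not(a: Truth) -> Truth {
    a.map(|v| !v)
}

/// SQL `x NOT IN (list)`, defined as NOT (x = l1 OR x = l2 OR ...).
fn sql_not_in(x: Option<i64>, list: &[Option<i64>]) -> Truth {
    let any_match = list
        .iter()
        .fold(Some(false), |acc, &item| sql_or(acc, sql_eq(x, item)));
    sql_not(any_match)
}

/// A WHERE clause keeps a row only when its condition is definitely true.
fn where_not_in(rows: &[i64], list: &[Option<i64>]) -> Vec<i64> {
    rows.iter()
        .copied()
        .filter(|&r| sql_not_in(Some(r), list) == Some(true))
        .collect()
}
```

In this model, filtering the rows 1, 2, 3 against the list (2) keeps 1 and 3, while filtering against (2, NULL) keeps nothing: every non-matching row compares as unknown against the NULL, and unknown is not true.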

Database schema changes are among the most expensive operations in mature systems. Adding a NOT NULL constraint to a populated column requires either backfilling data or accepting a default, both of which require decisions about what the absence of data actually meant. The absence was always ambiguous. The constraint forces you to resolve the ambiguity, which turns out to be genuinely hard work that the original schema design deferred forever.
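The same decision shows up in miniature when tightening a type. As a rough analogy only (an in-memory column, not a real migration), the sketch below treats a nullable column as Vec<Option<String>> and shows the two ways out: accepting a default, or refusing the constraint until the missing rows are backfilled. The function names and the "unknown" placeholder are hypothetical.

```rust
/// Option 1: accept a default. Every NULL becomes the same placeholder,
/// whatever the absence originally meant.
fn fill_with_default(column: Vec<Option<String>>, default: &str) -> Vec<String> {
    column
        .into_iter()
        .map(|cell| cell.unwrap_or_else(|| default.to_string()))
        .collect()
}

/// Option 2: refuse to tighten the type until every NULL has been resolved.
/// On failure, returns the indexes of the rows that still need a real value.
fn require_not_null(column: Vec<Option<String>>) -> Result<Vec<String>, Vec<usize>> {
    let missing: Vec<usize> = column
        .iter()
        .enumerate()
        .filter(|(_, cell)| cell.is_none())
        .map(|(i, _)| i)
        .collect();
    if missing.is_empty() {
        Ok(column.into_iter().flatten().collect())
    } else {
        Err(missing)
    }
}
```

Neither function can say what a missing cell meant. The first erases the question; the second hands it back to a person, which is roughly where the real migration ends up too.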

What This Means

The null problem is a useful lens on how the software industry actually learns. The correct solution was known, theoretically speaking, before the mistake was widely deployed. The practical solution, null-safe type systems, was buildable decades before mainstream languages adopted it. The adoption happened slowly, incompletely, and with abundant escape hatches, because correctness competes with inertia and correctness usually loses in the short term.

The lesson is not that Tony Hoare was careless. The lesson is that a design decision made for hardware-proximity reasons in 1965 embedded itself so deeply into the industry’s type systems, databases, languages, and habits that sixty years of knowing better has produced only partial correction. The next time someone argues that a known-bad design pattern is too entrenched to address, they’re probably right about the timeline and wrong about whether it matters. It matters. The costs accumulate regardless of whether anyone is measuring them.