The five-day stretch in November 2023 when OpenAI fired Sam Altman, watched its staff threaten a mass exodus, and then rehired him under a restructured board is not, as most coverage suggested, just a fascinating tale of Silicon Valley chaos. It is a clean, well-documented case study in what happens when governance structures are built for one kind of organization and then stretched over a fundamentally different one. The board didn’t lose. The structure did.

The board had real authority and used it correctly, once

OpenAI’s nonprofit board had a legal obligation to act in the interest of its stated mission: ensuring artificial general intelligence benefits humanity. That’s not a feel-good tagline. It’s the organizing principle of the legal entity that controls the company. When board members concluded, for reasons they still haven’t fully disclosed, that Altman was not being consistently candid with them, they had both the right and the obligation to act. They did.

The fact that markets panicked, Microsoft’s stock moved, and hundreds of employees signed a letter demanding reinstatement doesn’t retroactively make the board wrong to fire him. Those are commercial pressures. A nonprofit safety-focused board is specifically supposed to be insulated from commercial pressures. That’s the whole design.

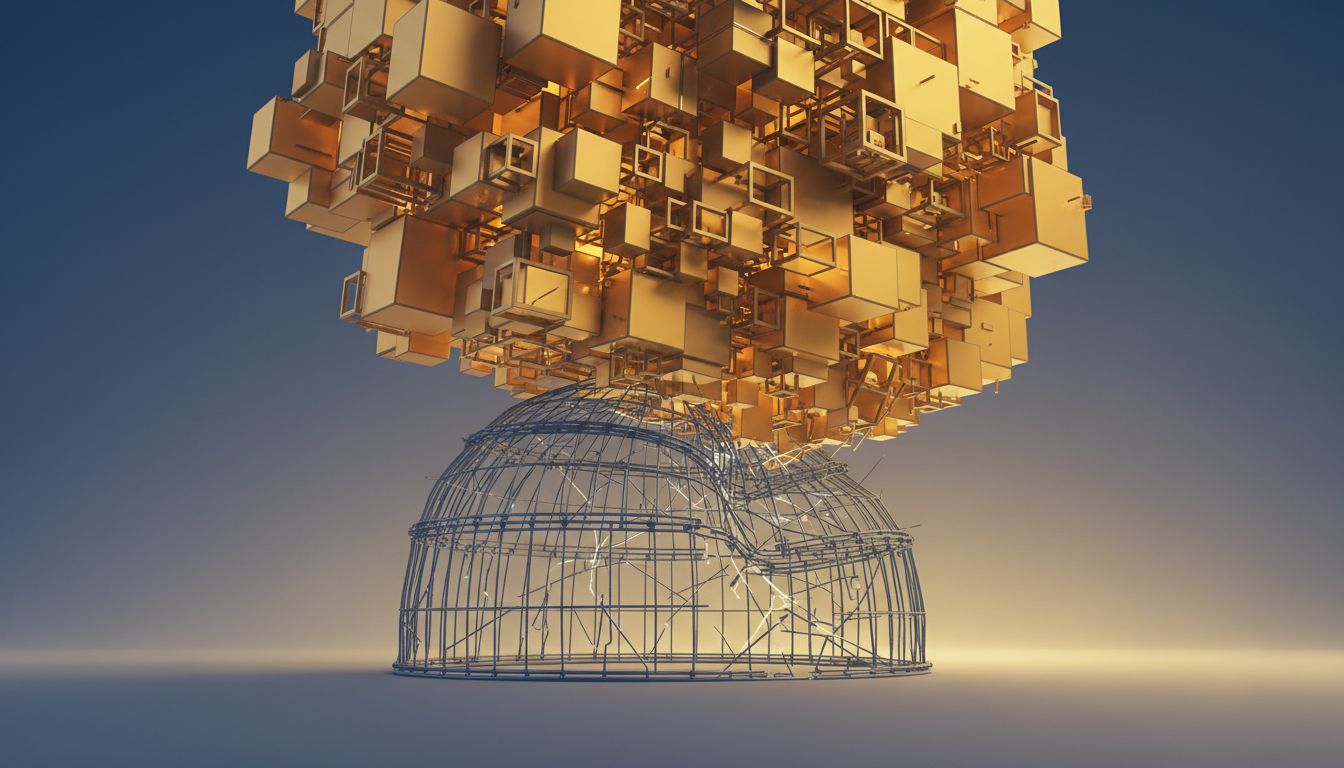

The problem isn’t that the board fired Altman. The problem is that by the time they did, the organization had grown so large, and its commercial relationships so entangled, that the board’s legal authority had effectively become ceremonial. They had the power to fire the CEO. They did not have the power to survive doing it.

The rehiring revealed the actual power structure

Within five days, Altman was back. Most of the board members who had voted to remove him were gone (Adam D’Angelo was the only one to keep his seat), replaced in part by figures more aligned with the commercial operation. Bret Taylor came in as chair. Larry Summers joined. The new board is credentialed and capable, but it is not the same kind of board. It is much more like a standard corporate board and much less like an independent oversight body.

This matters because it tells you who actually controls OpenAI. Not the legal entity. Not the stated mission. The answer, post-rehiring, is: the people with the most leverage over the commercial operation. That includes Microsoft, which had invested roughly $13 billion, and OpenAI’s employees, who collectively threatened to leave for Microsoft if Altman wasn’t reinstated. Both groups applied pressure. Both got what they wanted.

If you believe AGI represents genuine civilizational risk, this outcome should concern you. The organization most explicitly tasked with managing that risk just demonstrated that its safety board can be overridden by a five-day commercial squeeze.

Altman’s return wasn’t a vindication; it was a capitulation to incentives

The tech press largely framed Altman’s return as validation of his leadership. His employees rallied for him. Investors backed him. Microsoft offered him a job rather than let him sit idle for a week. That’s a real signal about his value as an operator.

But “this person is good at running the company” and “this person should lead the organization building potentially dangerous AI” are not the same question. The board was supposedly asking the second question. The market answered the first one and drowned the second out.

Altman is by most accounts an exceptional CEO for a growth-stage AI company. He recruits well, raises capital efficiently, and has built genuine products that people use. None of that resolves whatever concerns the board had about candor, and none of it addresses whether a nonprofit safety mandate can survive inside a company valued at $157 billion (as of its October 2024 funding round).

The counterargument

The strongest case against the board is simple: they handled it incompetently. They fired the CEO without a succession plan, without prepared public communication, and apparently without anticipating that most of the staff would choose the CEO over the institution. A board that can’t execute a leadership transition isn’t a functioning safety body; it’s a liability.

This is fair. If the board’s concerns were legitimate, poor execution undermined their credibility and made the mission harder to defend. Good governance requires more than good intentions. You need to be able to act effectively, especially when acting against the grain of powerful commercial interests.

But the lesson here is “build the governance capability to actually do the hard thing” rather than “the hard thing was wrong to attempt.” The failure mode isn’t that the board tried to exercise oversight. The failure mode is that they waited until they had to do it badly, under pressure, without preparation.

What should have been built from the start

OpenAI’s original structure was a genuine attempt to solve a hard problem: how do you build transformative technology responsibly when the capital required is immense and the commercial incentives are enormous? The nonprofit-with-capped-profit model was creative. It just wasn’t durable.

What a durable version looks like is still an open question, but it probably involves clearer, pre-negotiated triggers for board intervention, independent funding that doesn’t depend on the commercial arm, and explicit legal agreements with major investors about what the safety mandate means in practice. None of that is easy. All of it is necessary if the stated mission is real.

The Altman episode didn’t prove that AI governance is impossible. It proved that you can’t build a governance structure for a nonprofit and then run a $100 billion company inside it without eventually being forced to choose which one you actually are. OpenAI chose. Watch what they do next.