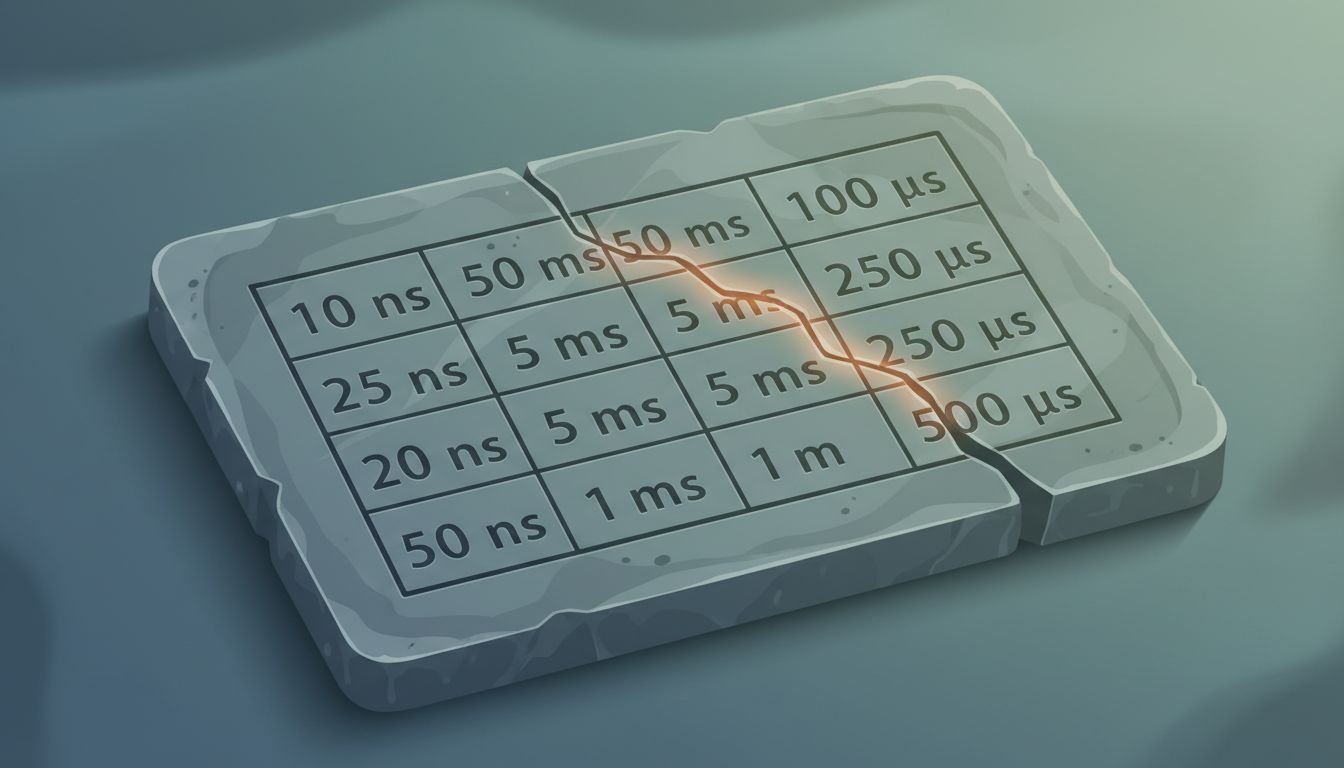

Every working engineer has encountered some version of the list. L1 cache reference: 0.5 nanoseconds. Main memory access: 100 nanoseconds. SSD random read: 150 microseconds. The numbers circulate in onboarding docs, conference talks, and system design interviews as if they were physical constants, like the speed of light or the charge of an electron. They are not. They are a snapshot of hardware from around 2004, and building 2024 systems around them is a quiet form of technical malpractice.

The Origin and the Drift

The canonical version of these numbers is attributed to Jeff Dean of Google, who compiled them as a practical heuristic for distributed systems thinking. The intent was sound: give engineers an intuitive feel for the orders-of-magnitude differences between storage tiers. That intuition still has value. The specific numbers have not aged gracefully.

Modern NVMe SSDs regularly achieve random read latencies under 100 microseconds, with high-end drives pushing below 20 microseconds. The original figure assumed SATA-era flash. Main memory latency figures similarly reflect DDR2-era DRAM. Raw DRAM access latency has improved far less than bandwidth since then, but contemporary DDR5, with higher throughput and well-tuned prefetching, behaves differently enough to matter in latency-sensitive code paths. Network round trips within a single AWS availability zone routinely come in under 200 microseconds in practice, a figure that would have looked implausibly optimistic when Dean’s list was being photocopied.

None of this is secret information. Hardware vendors publish it. Benchmarking tools measure it. Yet the old numbers persist in slide decks and documentation with the authority of scripture.

Architecture Decisions Calcify Around Bad Data

This would be a minor academic annoyance if stale latency assumptions stayed in introductory materials. They don’t. They propagate into real design choices.

The classic argument for avoiding network calls in hot code paths was built on latency ratios that have shifted considerably. The case for aggressive in-process caching weakens when the “slow” alternative has gotten three to five times faster. Decisions about whether to colocate services, whether to use a remote cache versus a local one, whether a synchronous database call is acceptable inside a request handler: all of these hinge on latency estimates, and all of them can go quietly wrong when those estimates are two decades stale.
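To make that dependence concrete, here is a minimal sketch of a latency-budget check. The 10 millisecond budget and the `calls_within_budget` helper are hypothetical. The 500 microsecond value is the same-datacenter round trip usually quoted in the circulated list, and 200 microseconds is the intra-zone figure cited earlier. Neither is a measurement of any particular system.

```python
def calls_within_budget(budget_us: float, per_call_us: float) -> int:
    """Return how many sequential calls of a given cost fit inside a latency budget."""
    if per_call_us <= 0:
        raise ValueError("per_call_us must be positive")
    return int(budget_us // per_call_us)


# Hypothetical request budget: 10 ms for the whole handler.
BUDGET_US = 10_000

# Assumed per-call costs in microseconds (illustrative, not measurements).
STALE_ROUND_TRIP_US = 500     # figure commonly quoted from the old list
OBSERVED_ROUND_TRIP_US = 200  # upper bound cited in the text for one availability zone

if __name__ == "__main__":
    for label, cost in [("stale", STALE_ROUND_TRIP_US),
                        ("observed", OBSERVED_ROUND_TRIP_US)]:
        fits = calls_within_budget(BUDGET_US, cost)
        print(f"{label}: {fits} sequential calls fit in the budget")
```

Under these assumptions the same budget admits 20 sequential round trips with the stale figure and 50 with the newer one. That gap can decide whether a design gets ruled out before anyone measures it.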

The deeper problem is that wrong numbers don’t announce themselves. An architecture built on outdated assumptions usually works. It just works worse than it should, and the gap between expected and actual performance gets rationalized away or blamed on application code rather than the model underneath.

The Interview Loop Keeps the Myth Alive

System design interviews are, at this point, the primary vector for transmitting these numbers to new engineers. Candidates are expected to recite approximate latency figures on demand, and interviewers who learned the numbers in 2008 are evaluating answers against a benchmark they’ve never updated.

The feedback loop is self-sealing. Candidates who internalize the numbers get hired. Hired engineers use the numbers in their own designs. Those engineers eventually conduct interviews and reward candidates who know the numbers. The content of the list is never interrogated because knowing the list is treated as a proxy for engineering competence rather than as a starting point for actual measurement.

This is how a generation of engineers ends up with a mental model of hardware that is accurate the way a 2004 road atlas is accurate: mostly right about the interstate highways, useless for finding anything built in the last decade.

The Counterargument

The obvious defense of the old numbers is that the orders of magnitude still hold. Cache is still faster than RAM, RAM is still faster than SSD, SSD is still faster than disk, local is still faster than network. The intuition the numbers are meant to build remains valid even if the specific figures are off.

This is partially true and mostly beside the point. When you’re deciding whether to add a caching layer, the order of magnitude matters less than the actual ratio. If you believe an SSD random read costs 150 microseconds when it actually costs 25, you may add caching that costs more in complexity than it saves in latency. Worse, you may fail to push back on an architecture that assumes caching is mandatory when modern storage could serve the use case directly. The orders of magnitude give you a map of the territory; the actual numbers tell you whether the journey is worth taking.
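The arithmetic behind that claim can be sketched with a simple expected-latency model. The 90 percent hit rate and the 1 microsecond cache-hit cost are assumptions chosen for illustration, and the function names are hypothetical. Only the 150 and 25 microsecond read costs come from the paragraph above.

```python
def effective_latency_us(hit_rate: float, cache_us: float, backing_us: float) -> float:
    """Expected read latency when a cache sits in front of a backing store."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cache_us + (1.0 - hit_rate) * backing_us


def saving_per_read_us(hit_rate: float, cache_us: float, backing_us: float) -> float:
    """Latency saved per read by adding the cache, compared with reading directly."""
    return backing_us - effective_latency_us(hit_rate, cache_us, backing_us)


# Assumed for illustration: 90% hit rate, 1 microsecond per cache hit.
HIT_RATE = 0.9
CACHE_HIT_US = 1.0

if __name__ == "__main__":
    for label, ssd_us in [("believed", 150.0), ("actual", 25.0)]:
        saved = saving_per_read_us(HIT_RATE, CACHE_HIT_US, ssd_us)
        print(f"{label} SSD read {ssd_us:.0f} us: cache saves about {saved:.1f} us per read")
```

With these inputs the cache appears to save roughly 134 microseconds per read under the stale figure and roughly 22 under the newer one. Whether 22 microseconds justifies an invalidation strategy and another failure mode is a judgment the stale figure never prompts.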

The appeal to orders-of-magnitude thinking also deserves scrutiny when it is used as a substitute for measurement. Systems that perform badly in production almost always do so because of specific numbers in specific contexts, not because an engineer failed to understand that disk is slower than RAM.

Measure the System You Actually Have

The fix is not to memorize a new table of numbers, though publishing and teaching current figures would be a reasonable start. The fix is to treat latency as an empirical question about a specific system rather than a constant to be looked up.

This means benchmarking the actual storage hardware in your production environment. It means measuring inter-service call latency in your specific network topology rather than reasoning from generic figures. It means building the habit of checking assumptions against reality before encoding them into architecture.
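As a starting point, the habit can be as small as a timing harness that reports percentiles rather than a single average. The sketch below is one possible shape, not a recommended benchmark. `measure_latency_us` and `make_random_reader` are hypothetical helpers, and the 4 KiB block size and sample counts are arbitrary. One loud caveat: reads from a freshly written file are usually served from the operating system's page cache, so the demo measures cached reads, not the device. For device-level numbers a purpose-built tool such as fio with direct I/O is the safer choice.

```python
import os
import random
import statistics
import tempfile
import time


def measure_latency_us(operation, samples=1000, warmup=50):
    """Time repeated calls to `operation`; return p50, p99, and max in microseconds."""
    for _ in range(warmup):
        operation()  # warm up caches and lazy initialization before timing
    timings = []
    for _ in range(samples):
        start = time.perf_counter_ns()
        operation()
        timings.append((time.perf_counter_ns() - start) / 1_000)
    timings.sort()
    cuts = statistics.quantiles(timings, n=100)  # 99 percentile cut points
    return {"p50": cuts[49], "p99": cuts[98], "max": timings[-1]}


def make_random_reader(path, block_size=4096):
    """Return (callable, file handle); each call reads one block at a random offset."""
    blocks = max(1, os.path.getsize(path) // block_size)
    handle = open(path, "rb", buffering=0)  # unbuffered in Python, still OS-cached

    def read_one():
        handle.seek(random.randrange(blocks) * block_size)
        handle.read(block_size)

    return read_one, handle


if __name__ == "__main__":
    # Demo against a temporary 4 MiB file; point `path` at real data for real use.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(4 * 1024 * 1024))
        path = tmp.name
    reader, handle = make_random_reader(path)
    try:
        print(measure_latency_us(reader))
    finally:
        handle.close()
        os.remove(path)
```

The same `measure_latency_us` function accepts any zero-argument callable, so an inter-service call or a database query can be wrapped and timed the same way. Reporting p99 alongside p50 matters because tail latency, not the median, is usually what breaks a request budget.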

Jeff Dean’s list was a tool for thinking, not a specification. The engineers who understood it that way got the most out of it. The ones who treated it as received truth have been making decisions based on hardware that went end-of-life before the iPhone existed.

Your mental model of computer hardware has an expiration date. For most working engineers, that date passed a while ago.