Better autocomplete doesn’t make you a better writer. It makes you a faster one. Those are not the same thing, and conflating them is costing you more than you probably realize.

I’ve been writing code and prose for long enough to notice something uncomfortable happening to both: the smoother the tool, the less thinking I do before I reach for it. That used to be a mild annoyance. Now that AI-assisted writing is genuinely good, it’s becoming a structural problem.

The friction wasn’t wasted effort

Writing is thinking made visible. The struggle to find the right word, the pause before committing to a sentence structure, the moment where you realize mid-paragraph that your argument doesn’t actually hold: these aren’t bugs in the writing process. They’re the process. They’re where the thinking happens.

When autocomplete is bad (or absent), you draft, stall, revise, and occasionally surprise yourself with a formulation you didn’t know you had. When autocomplete is good enough to be plausible, you accept. The suggestion arrives before the friction does, and friction is where comprehension lives.

This maps directly onto what cognitive scientists call “desirable difficulties,” the counterintuitive finding that learning sticks better when retrieval takes more effort. Robert Bjork’s work at UCLA showed that making practice conditions slightly harder produces stronger long-term retention than making them easy. The writing equivalent: forcing yourself to find the word produces a stronger relationship with that word than accepting a suggestion. You knew this instinctively when you were learning to write. Smart autocomplete is quietly unlearning it for you.

You’re optimizing for fluency, not accuracy

Here’s the specific failure mode I keep seeing in my own writing and everyone else’s: AI-assisted text is confident. It flows. It sounds like it knows what it’s saying. That fluency is the problem, because fluency is not evidence of correctness.

When you write a sentence haltingly, you’re more likely to notice when it’s wrong. When you accept a sentence that arrived fully formed and grammatically perfect, you’re much less likely to interrogate it. The internal editor that would have flagged a logical gap or a weak claim gets bypassed because the surface looks finished.

This is especially true for technical writing, where the difference between almost-right and right can be significant. A documentation paragraph that sounds authoritative but subtly misstates a system’s behavior is worse than no paragraph at all. Autocomplete is very good at almost-right.

The averaging problem

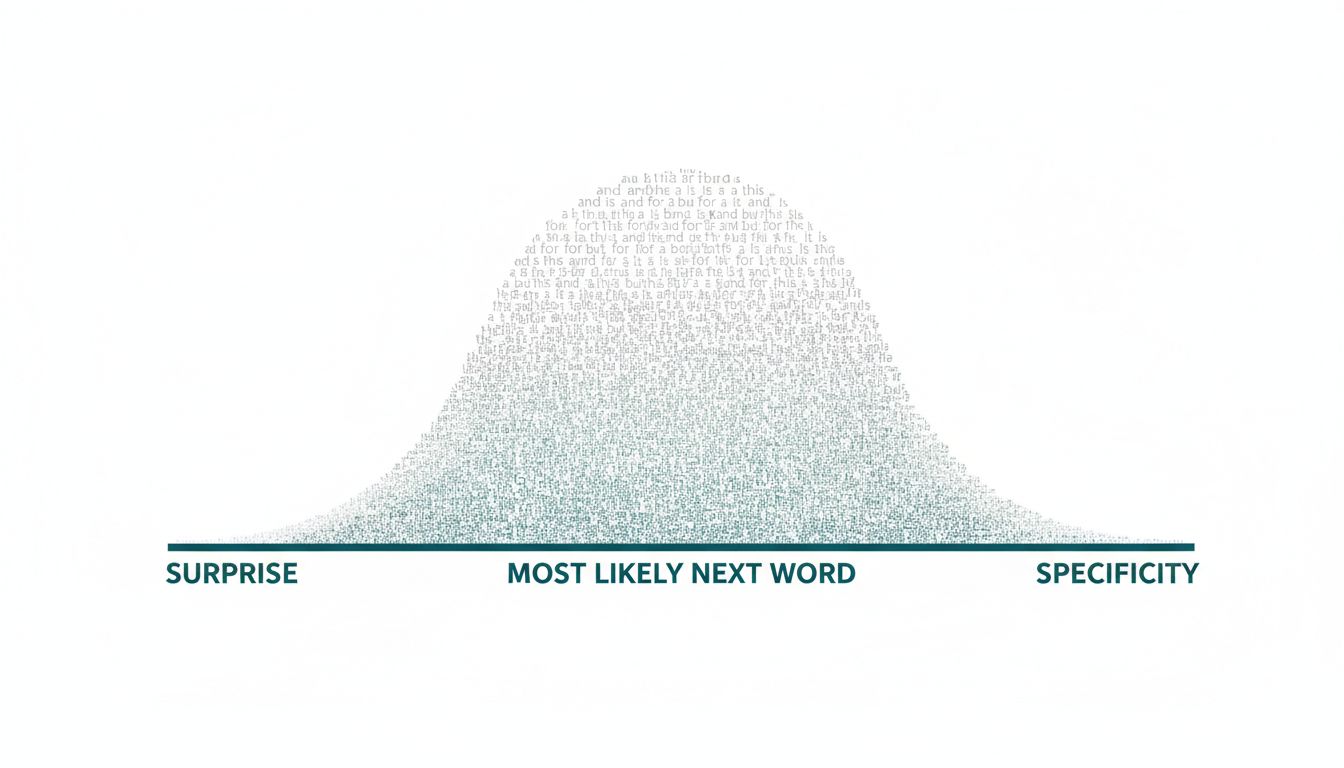

Language models predict the next token based on what tokens usually follow in their training data. This is genuinely impressive engineering, and embeddings are doing more semantic work in this process than most people appreciate. But the mechanism matters for writers: what usually follows is, by definition, average.
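To make the mechanism concrete, here is a deliberately tiny sketch. It is a toy bigram counter, not how a real language model works (those are neural networks operating on tokens and embeddings), and the corpus, `build_bigram_counts`, and `suggest` are all invented for illustration. What it shares with the real thing is the relevant property: the suggestion is whatever most often came next.

```python
from collections import Counter, defaultdict


def build_bigram_counts(corpus):
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def suggest(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical corpus: the common phrasing appears twice,
# the more distinctive ones once each.
corpus = [
    "the results were very good",
    "the results were very good",
    "the results were very promising",
    "the results were very strange",
]

counts = build_bigram_counts(corpus)
suggestion = suggest(counts, "very")
print(suggestion)
```

With this corpus the suggestion after "very" is "good", simply because it appeared most often. "Strange" might have been the more interesting word, but a frequency-driven picker never reaches for it first.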

Good writing is often surprising. It makes a connection the reader didn’t expect, or frames a familiar thing in a way that makes it suddenly strange. That surprise comes from a specific person’s specific perspective, not from the central tendency of a large corpus. When you accept suggestions heavily, you’re trading your particular voice for a smooth statistical average. The writing becomes competent and forgettable in equal measure.

The writers I find most worth reading are notable for what they do that you wouldn’t predict. Autocomplete is, mechanically, a prediction engine. It is structurally opposed to unpredictability.

The counterargument

The strongest objection goes something like this: these tools lower the barrier to writing for people who struggle with blank-page paralysis or language barriers, and that’s net positive. If someone who wouldn’t have written anything now writes something, autocomplete served them well.

This is true, and I don’t want to dismiss it. There are real populations for whom fluency assistance is genuinely enabling rather than degrading.

But that argument doesn’t apply to people who were already writing. For experienced writers, the desirable-difficulties logic points the other way: the more you offload the hard part, the weaker your capacity for the hard part becomes. This isn’t moralism about tools. It’s closer to the argument against GPS for navigation: using it to get somewhere you couldn’t otherwise reach is one thing; using it for every trip until you can no longer read a map is something else.

The people most at risk are intermediate writers: good enough that autocomplete suggestions sound plausible, but not skilled enough to reliably spot when a plausible suggestion is actually wrong or merely adequate.

What to do with this

I’m not arguing for abandoning these tools. I’m arguing for using them with the same intentionality you’d apply to any dependency that changes your capability when removed.

Write the first draft without assistance. Not because first drafts are sacred, but because the struggle of the first draft is where you figure out what you actually think. Use autocomplete for editing passes, for catching typos, for suggesting alternatives to a word you’ve already chosen. That’s a fundamentally different use, one where you’re evaluating rather than accepting.

Periodically write something important entirely by hand, or at minimum in a plain text editor with every predictive feature disabled. Notice what comes back when the suggestions go away. Usually it’s slower. Often it’s better. Almost always it’s more distinctly yours.

The goal isn’t to suffer for craft. The goal is to not accidentally outsource the part of writing that makes it worth doing.