The Software Nobody Bought Turned Out to Be Worth More

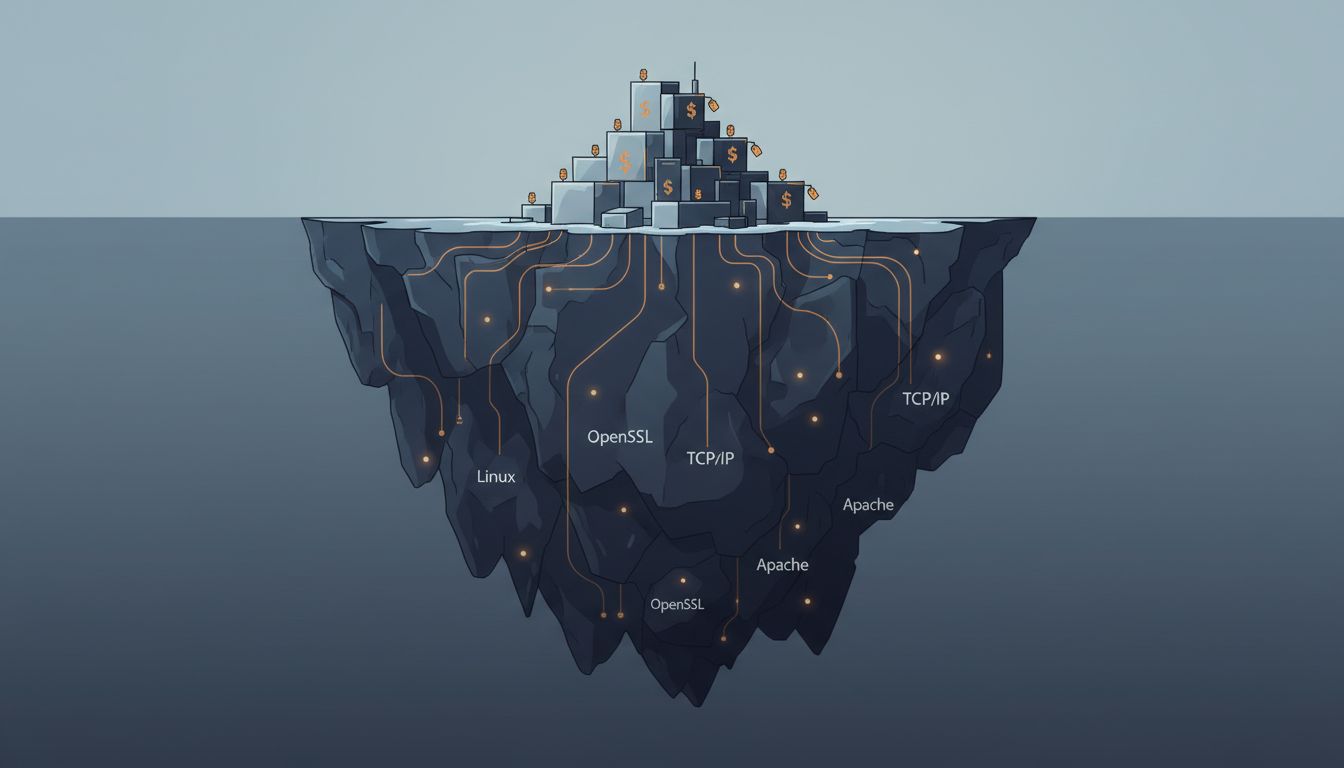

The most economically significant software in history was given away. Linux runs the majority of the world’s servers, nearly all of Android, and the infrastructure beneath every major cloud provider. Apache HTTP Server delivered the early web to billions of people. OpenSSL has quietly encrypted a significant fraction of all internet traffic since the mid-1990s. None of these projects had a sales team. Most ran on volunteer labor for years. And yet the compounding economic value they created, captured almost entirely by the companies built on top of them, dwarfs the revenue of most software companies that ever existed.

This is not a coincidence. It is the central paradox of software economics, and understanding it matters if you’re trying to make sense of why the industry allocates resources the way it does.

How Infrastructure Becomes Invisible (and Invaluable)

The economic concept at play here is often called the appropriability problem. When a technology is easy to copy and hard to restrict, the inventors tend to capture very little of the value they create. The rest flows to whoever can build a defensible position nearby. In software, that means the value of infrastructure rarely accrues to the people who built the infrastructure.

Linux is the sharpest illustration. The Linux kernel is maintained by thousands of contributors and coordinated by the Linux Foundation, whose members include IBM, Intel, Samsung, Google, and Microsoft. Those companies collectively spend hundreds of millions of dollars annually on Linux development, not because they’re being charitable, but because a shared, freely available operating system kernel eliminates a cost center for all of them while preventing any single competitor from owning that layer of the stack. The value of Linux to the global economy has been estimated in the hundreds of billions of dollars. The Linux Foundation’s annual budget is under $100 million.

The people who wrote Linux didn’t lose. Many became extremely valuable engineers. Linus Torvalds is not poor. But the ratio of value created to value captured is extraordinary in a way that would look insane in any other industry.

The OpenSSL Problem and the Luck of Infrastructure

The darker version of this story is OpenSSL. For years, a library responsible for securing a meaningful fraction of all encrypted internet traffic was maintained by a team that, at one point, consisted largely of two people, one of whom was working part-time. When the Heartbleed vulnerability was disclosed in 2014, the world discovered that critical financial, medical, and government systems had been depending on software funded at a level that couldn’t sustain basic code review.

The response was the Core Infrastructure Initiative, a Linux Foundation effort that pulled funding from Amazon, Google, Microsoft, Facebook, and others. The lesson was awkward for everyone: the most important software in the world was also some of the most underfunded, and the companies that had profited most from it had been happy to let that situation persist until a crisis forced their hand.

This is the pathology that underlies all of open-source economics. The value flows upstream toward whoever can sell services, support contracts, or cloud infrastructure on top of the free layer. The maintainers of the free layer, meanwhile, operate on goodwill, hobbyist energy, and whatever corporate sponsorship they can attract. Open source runs the world. Almost nobody pays for it.

When Free Software Becomes a Business Strategy

Not every open-source project is a volunteer labor of love that accidentally became critical infrastructure. A significant number were open-sourced deliberately, as competitive strategy.

Google’s decision to open-source Android in 2008 was not altruism. It was a calculated move to prevent any single company from controlling the mobile operating system layer and potentially blocking Google’s services from handsets. By making the OS free, Google ensured that mobile hardware manufacturers had a viable alternative to paying Microsoft licensing fees or building their own systems, and that Google’s search and advertising products would come pre-installed on the resulting devices. Android became the most widely deployed operating system in history. Google captured the value not from the OS but from the layer above it.

Meta’s open-sourcing of LLaMA models follows a similar logic. Meta is not in the business of selling AI models. It’s in the business of selling attention. Releasing capable models into the open-source community accelerates the proliferation of AI tools and keeps the field from consolidating around any competitor’s proprietary stack. If AI inference becomes cheap and commoditized, the company with the most data and the largest advertising business wins. Open-sourcing the model is a way of shaping that outcome.

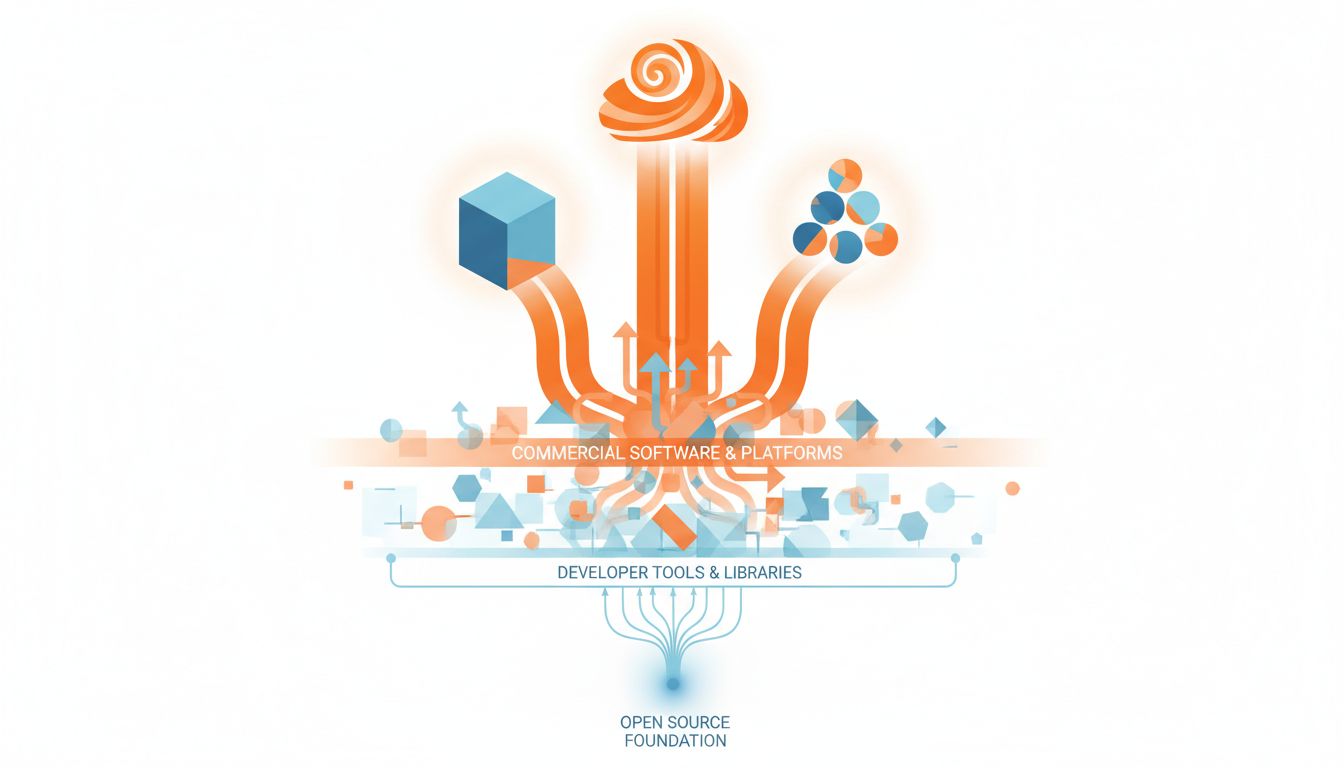

This strategic use of open source is sometimes called “commoditizing the complement.” If you control layer N+1 and open-source layer N, you make the layer below you cheap while keeping your own position defensible. It’s a move that benefits the companies playing it, but it also genuinely benefits the broader developer community, which is why the two interests so often align.

The Emergence of the Commercial Open-Source Company

The 2010s saw a serious attempt to thread this needle: build on open source, capture some of the value through commercial licensing or managed services. Companies like HashiCorp, Confluent, MongoDB, Elastic, and Redis Labs all followed versions of this model, releasing open-source software and then selling enterprise features, support, or cloud-hosted versions on top.

The results were instructive. The model worked well enough to produce several multi-billion-dollar companies. But it also exposed a structural tension that has never been fully resolved: the same cloud providers that depend on open-source software are also the most efficient distributors of it. When AWS launched Amazon ElastiCache (built on Redis) and Amazon DocumentDB (broadly compatible with MongoDB), they weren’t doing anything technically illegal. But they were extracting value from software those companies had built and maintained, without returning much to the projects or their sponsors.

This prompted a wave of license changes, from MongoDB’s Server Side Public License to Elasticsearch’s shift away from Apache 2.0 to HashiCorp’s Business Source License. The cloud providers pushed back, in some cases forking the projects entirely. The Elasticsearch fork became OpenSearch, maintained by Amazon. The community fractured.

None of this has been cleanly resolved. The commercial open-source model is viable but fragile, dependent on a careful balance between community goodwill and business interest that is easy to upset.

Why Proprietary Software Still Exists (and Always Will)

Given all of this, it’s worth asking why anyone builds proprietary software. The answer isn’t simple. Some software is genuinely hard to replicate without sustained investment and talent concentration. The companies that built enterprise resource planning systems, specialized industrial software, or deeply integrated SaaS products are not easily displaced by an open-source alternative. The switching costs are high, the domain knowledge required is enormous, and the customer relationships matter more than the code.

Salesforce doesn’t have a serious open-source competitor not because the code is secret but because the network of integrations, the sales relationships, the institutional knowledge baked into years of customer implementations, and the trust built around data handling are not things you can fork on GitHub. The value is not in the software. It’s in the position.

This is a useful corrective to the more utopian claims sometimes made on behalf of open source. Free software is extraordinarily good at commoditizing infrastructure, accelerating developer tooling, and preventing monopoly lock-in at the stack layers where those things matter. It is much less effective at displacing products whose moats are relationship-based or network-effect-based. One enterprise customer is a trap, not a business, and the same principle applies in reverse: one enterprise relationship, properly cultivated, is the thing open source can almost never replicate.

The AI Inflection Point

The economics of all of this are being scrambled right now by AI. Foundation models are extraordinarily expensive to train, which initially suggested that AI would be a proprietary software story, that the companies with the compute and data to build large models would capture the value. OpenAI, Anthropic, and Google all bet on that logic.

But the open-source community has moved with unusual speed. Meta’s LLaMA releases, Mistral’s work out of France, and the broader Hugging Face ecosystem have collectively demonstrated that open-source model weights can reach near-frontier capability at a fraction of the training cost of the market leaders. The pattern rhymes with Linux: expensive to build initially, cheap to deploy once the architecture is figured out, and increasingly difficult to sell when free alternatives are nearly as capable.

The question of where AI value actually accrues, at the model layer or the application layer or the data layer, is still genuinely open. But the history of software suggests caution about betting heavily on infrastructure that is becoming commoditized. The most valuable AI company may turn out to be one that looks less like OpenAI and more like Google: sitting above the free layer, capturing the value that the free layer generates.

What This Means

The pattern across fifty years of software economics is consistent. Infrastructure tends toward free, either because it gets open-sourced deliberately, because open-source alternatives emerge, or because cloud providers commoditize it. Value accumulates at the layers that are hardest to replicate: customer relationships, proprietary data, network effects, and deep domain integration.

For anyone building software, the implication is uncomfortable but clarifying. If your value is in the code itself, your position is probably more fragile than you think. If your value is in what surrounds the code, you may be in better shape than your revenue line currently suggests. The software that commands a price tends to be software where the switching cost isn’t really about the software at all.

And for the volunteers who maintain the infrastructure the rest of the industry depends on: the gap between the value you create and the compensation you receive remains one of the more significant unsolved problems in tech. The post-Heartbleed response showed that industry can move when the consequences become visible. The challenge is that most of the time, the consequences stay invisible until they don’t.