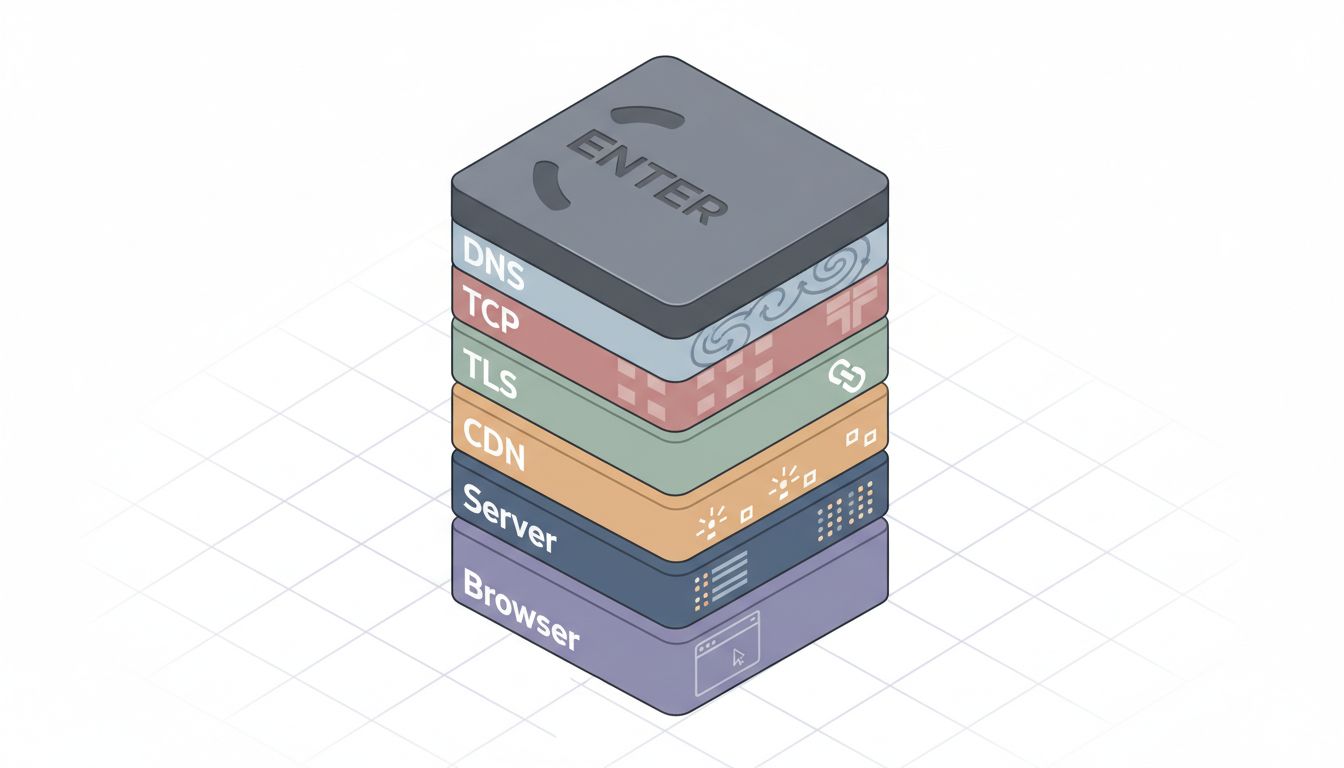

Most people interact with the internet the way they interact with electricity: flip a switch, light comes on, don’t ask questions. Type a URL, hit Enter, page loads. The mental model is a straight line between you and a server somewhere. That model is wrong, and the wrongness is consequential.

Understanding what actually happens during a URL request isn’t just trivia for engineers. It’s the difference between building systems that fail gracefully and systems that fail mysteriously. It’s why your “simple” app has latency you can’t explain, and why the fix often lives somewhere you never thought to look.

DNS Is a Phone Book That Rewrites Itself Constantly

Before a single byte of your request travels to a destination server, your browser has to figure out where that server actually lives. The domain name you typed (say, example.com) is a human convenience. The network speaks in IP addresses. DNS is the translation layer.

This sounds straightforward until you trace what actually happens. Your browser checks its local cache first. If that misses, your operating system checks its own cache. If that misses, your router might have an answer. If not, the query travels out to a recursive resolver (usually run by your ISP or a service like Google’s 8.8.8.8 or Cloudflare’s 1.1.1.1), which in turn may need to walk the DNS hierarchy from root servers through TLD servers to authoritative nameservers, assembling the answer and caching it for the next person who asks.
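The lookup chain can be modeled as a stack of caches, each holding answers until their TTL expires. The sketch below makes several simplifying assumptions. The layer names, the `CacheLayer` and `resolve` helpers, and the answer 192.0.2.10 (a documentation-range address) are all hypothetical. The whole root → TLD → authoritative walk is collapsed into a single dictionary lookup. Every layer honors the record's TTL exactly, although real caches may cap or shorten it.

```python
from dataclasses import dataclass, field

@dataclass
class CacheLayer:
    """One hypothetical cache in the lookup chain (browser, OS, recursive resolver, ...)."""
    name: str
    entries: dict = field(default_factory=dict)  # hostname -> (ip, expires_at)

    def get(self, host, now):
        entry = self.entries.get(host)
        if entry is not None and entry[1] > now:  # present and TTL not yet expired
            return entry
        return None

    def put(self, host, ip, expires_at):
        self.entries[host] = (ip, expires_at)

def resolve(host, layers, authoritative, now):
    """Return (ip, answered_by). Layers are checked nearest-first; a hit backfills nearer caches."""
    for depth, layer in enumerate(layers):
        entry = layer.get(host, now)
        if entry is not None:
            ip, expires_at = entry
            for nearer in layers[:depth]:
                nearer.put(host, ip, expires_at)  # keep the original expiry, not a fresh TTL
            return ip, layer.name
    # Full miss: this lookup stands in for the root -> TLD -> authoritative walk
    ip, ttl = authoritative[host]
    for layer in layers:
        layer.put(host, ip, now + ttl)
    return ip, "authoritative"

if __name__ == "__main__":
    layers = [CacheLayer("browser"), CacheLayer("os"), CacheLayer("resolver")]
    zone = {"example.com": ("192.0.2.10", 300)}  # hypothetical record with a 300 s TTL
    print(resolve("example.com", layers, zone, now=0))    # cold caches: goes all the way out
    print(resolve("example.com", layers, zone, now=10))   # answered by the browser cache
    print(resolve("example.com", layers, zone, now=301))  # TTL expired everywhere: full walk again
```

Under these assumptions, the first and third calls report `authoritative` and the second reports `browser`. This is the cold-versus-warm difference the next paragraph describes.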

All of this can happen in under 50 milliseconds on a good day. It can also add hundreds of milliseconds of latency when caches are cold and the chain is long. DNS failures are also a common cause of outages that look, to users, like the website is “down” when its servers are perfectly healthy.

TCP Handshakes and TLS Are Not Free

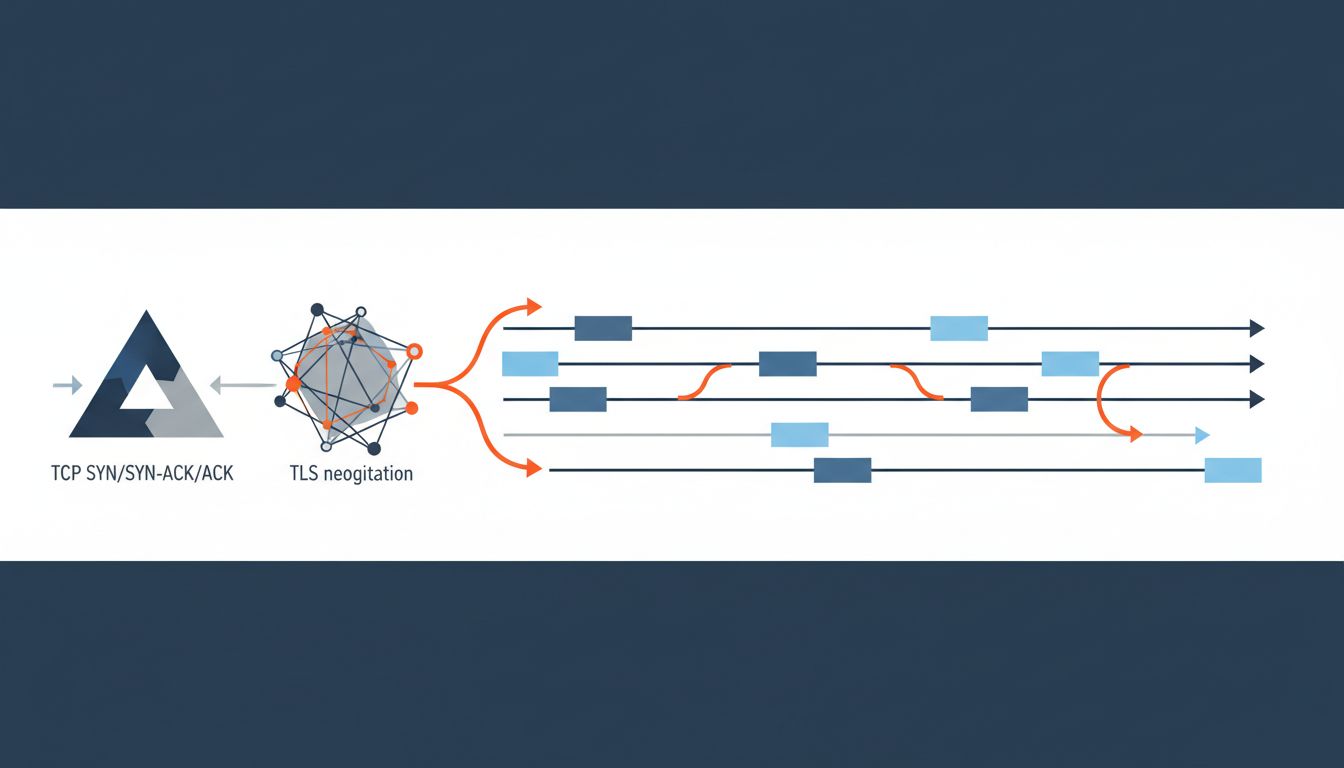

Once your browser has an IP address, it needs to establish a connection. For any HTTPS site (which is most of the modern web), this involves two distinct handshakes before a single byte of useful content moves.

First, the TCP three-way handshake: SYN, SYN-ACK, ACK. Your computer says hello, the server acknowledges and says hello back, and you acknowledge the acknowledgment. This costs one full round trip before your request can go out. For a server with a 100ms round-trip time, that’s 100ms of setup before anything happens.
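One way to see the handshake as a distinct cost is to time `connect()` alone, because the operating system completes SYN, SYN-ACK, ACK before `socket.create_connection` returns. The sketch below is illustrative. The `time_tcp_connect` helper is hypothetical, and the demo connects to a throwaway listener on loopback. There the round trip is microseconds, so the number only grows to the figures above when you point the function at a distant IP address.

```python
import socket
import time

def time_tcp_connect(ip, port, timeout=2.0):
    """Seconds spent in connect(): the SYN / SYN-ACK / ACK exchange plus a little local overhead."""
    start = time.perf_counter()
    # create_connection() returns once the kernel has completed the three-way handshake.
    # Pass an IP literal so no DNS lookup is folded into the measurement.
    with socket.create_connection((ip, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed

if __name__ == "__main__":
    # A listening socket is enough: the kernel completes handshakes before accept() is called.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
        listener.listen(1)
        port = listener.getsockname()[1]
        print(f"handshake on loopback: {time_tcp_connect('127.0.0.1', port) * 1000:.3f} ms")
```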

Then TLS negotiation on top of that. The client and server agree on a cipher suite, exchange certificates, verify identity, and derive shared encryption keys. TLS 1.3 (now widely deployed) got this down to one round trip in most cases, compared to two round trips for TLS 1.2. That improvement sounds small. At scale, shaving one round trip off every new connection is significant.
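The arithmetic behind “at scale” is short enough to write down. The sketch counts only full handshakes. It ignores session resumption, TLS 1.3 0-RTT, and TCP Fast Open. The 100ms round trip and the one million new connections are illustrative numbers, not measurements.

```python
# Round trips spent on handshakes before a fresh HTTPS connection can carry its first request.
# Full handshakes only: resumption, TLS 1.3 0-RTT and TCP Fast Open are deliberately ignored.
HANDSHAKE_ROUND_TRIPS = {
    "TLS 1.2": 1 + 2,  # TCP (1) + full TLS 1.2 handshake (2)
    "TLS 1.3": 1 + 1,  # TCP (1) + full TLS 1.3 handshake (1)
}

def setup_ms(rtt_ms, tls_version):
    """Milliseconds of connection setup before the HTTP request is sent."""
    return rtt_ms * HANDSHAKE_ROUND_TRIPS[tls_version]

def aggregate_savings_hours(new_connections, rtt_ms):
    """Total waiting time removed by TLS 1.3 across many new connections, in hours."""
    saved_ms = setup_ms(rtt_ms, "TLS 1.2") - setup_ms(rtt_ms, "TLS 1.3")
    return new_connections * saved_ms / 1000 / 3600

if __name__ == "__main__":
    print(setup_ms(100, "TLS 1.2"))  # 300
    print(setup_ms(100, "TLS 1.3"))  # 200
    print(round(aggregate_savings_hours(1_000_000, 100), 1))  # 27.8
```

At a 100ms round trip, each new connection waits 300ms under TLS 1.2 and 200ms under TLS 1.3. Across a million new connections, that single saved round trip adds up to roughly 28 hours of aggregate waiting.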

HTTP/2 and HTTP/3 exist largely to cut down on this setup cost. HTTP/2 multiplexes many concurrent requests over a single connection, so a page needs fewer new connections (and fewer handshakes) than it would under HTTP/1.1. HTTP/3 runs over QUIC, which folds the transport and TLS handshakes into a single round trip.
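Python’s standard library has no HTTP/2 client. The sketch below therefore shows the older idea that multiplexing builds on: HTTP/1.1 keep-alive, where sequential requests share one TCP connection instead of paying for a new handshake each time. It runs a throwaway local server over plain HTTP (no TLS) and counts how many connections the server accepts. The `count_connections` helper and the `connections_opened` attribute are hypothetical names introduced for this example.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

class Handler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # keep connections open between requests

    def setup(self):
        super().setup()
        # One handler instance is created per accepted TCP connection
        self.server.connections_opened.append(self.client_address)

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))  # lets the client find the response end
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging

def count_connections(num_requests, reuse):
    """Send num_requests GETs to a local server; return how many TCP connections were opened."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), Handler)
    server.connections_opened = []
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address[:2]
    try:
        conn = http.client.HTTPConnection(host, port)
        for _ in range(num_requests):
            if not reuse:
                conn.close()  # drop the old connection; the next request must handshake again
                conn = http.client.HTTPConnection(host, port)
            conn.request("GET", "/")
            conn.getresponse().read()  # drain the body so the connection can be reused
        conn.close()
    finally:
        server.shutdown()
        server.server_close()
    return len(server.connections_opened)

if __name__ == "__main__":
    print("reused connection:", count_connections(5, reuse=True))
    print("fresh connections:", count_connections(5, reuse=False))
```

With reuse, five requests should need one connection. Without it, they need five, and over HTTPS each of those would also pay for its own TLS handshake.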

The Server Response Is Often Not What You Think

Assume the handshakes are done and your request finally reaches a server. Even here, “the server” is rarely one thing. On a large site, your request probably hit a CDN edge node first, not an origin server. If the CDN has a cached copy of what you want, the origin server never sees your request at all. This is by design, and it’s why major websites can serve content to millions of simultaneous users without melting.
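The offloading effect is easiest to see with a toy edge cache in front of a toy origin. Everything below is a hypothetical sketch. `Origin`, `EdgeNode`, and the 60-second TTL are illustrative, and real CDNs also consider cache keys, `Cache-Control` headers, and request coalescing, none of which is modeled here.

```python
class Origin:
    """Hypothetical origin server that counts how many requests actually reach it."""
    def __init__(self):
        self.requests_seen = 0

    def fetch(self, path):
        self.requests_seen += 1
        return f"<html>content for {path}</html>"

class EdgeNode:
    """Hypothetical CDN edge: serves from its cache while fresh, otherwise asks the origin."""
    def __init__(self, origin, ttl_seconds):
        self.origin = origin
        self.ttl = ttl_seconds
        self.cache = {}  # path -> (body, expires_at)

    def get(self, path, now):
        cached = self.cache.get(path)
        if cached is not None and cached[1] > now:
            return cached[0], "HIT"
        body = self.origin.fetch(path)  # cache miss: only now does the origin see a request
        self.cache[path] = (body, now + self.ttl)
        return body, "MISS"

if __name__ == "__main__":
    origin = Origin()
    edge = EdgeNode(origin, ttl_seconds=60)
    # 10,000 requests for the same page within ten seconds
    for i in range(10_000):
        edge.get("/index.html", now=i * 0.001)
    print("origin requests:", origin.requests_seen)  # 1
```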

If the CDN misses and passes the request to origin, you might hit a load balancer that chooses among a pool of application servers. The application server might query a database, call several downstream APIs, render a template, and assemble a response. Each of those steps has its own latency budget, and a slow database query or a flaky third-party API call shows up in the user’s browser as sluggishness with no obvious explanation in the application code.
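How a slow dependency shows up depends partly on whether the application calls its downstreams one after another or concurrently. In the sketch below, `asyncio.sleep` stands in for network calls. The service names and latencies are hypothetical. Sequential calls cost roughly the sum of the latencies. Concurrent calls cost roughly the slowest one, so a single sluggish API still sets the floor.

```python
import asyncio
import time

# Hypothetical downstream latencies (seconds) for one page request
DOWNSTREAM = {"database": 0.05, "pricing_api": 0.10, "recommendations_api": 0.30}

async def call(name, latency):
    await asyncio.sleep(latency)  # stand-in for a network round trip to that service
    return name

async def handle_sequential():
    for name, latency in DOWNSTREAM.items():
        await call(name, latency)  # each call waits for the previous one

async def handle_concurrent():
    await asyncio.gather(*(call(name, latency) for name, latency in DOWNSTREAM.items()))

def timed(handler):
    start = time.perf_counter()
    asyncio.run(handler())
    return time.perf_counter() - start

if __name__ == "__main__":
    print(f"sequential: {timed(handle_sequential):.2f} s")  # roughly 0.05 + 0.10 + 0.30
    print(f"concurrent: {timed(handle_concurrent):.2f} s")  # roughly the slowest call, 0.30
```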

This is why distributed tracing tools exist. The request isn’t a single event. It’s a tree of events, and the failure or slowness can be hiding anywhere in that tree.
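The “tree of events” idea can be shown with a toy tracer that records nested, timed spans. This is a minimal sketch, and it is not the API of OpenTelemetry or any other real tracing library. The `Tracer` class, the `render` helper, and the span names are hypothetical. Real tracers also propagate trace IDs across process and network boundaries, which this does not attempt.

```python
import time
from contextlib import contextmanager

class Tracer:
    """Toy tracer: records nested spans as a tree of dicts with durations in milliseconds."""
    def __init__(self):
        self.root = None
        self._stack = []  # spans currently open, innermost last

    @contextmanager
    def span(self, name):
        node = {"name": name, "children": [], "ms": None}
        if self._stack:
            self._stack[-1]["children"].append(node)  # nest under the currently open span
        else:
            self.root = node
        self._stack.append(node)
        start = time.perf_counter()
        try:
            yield node
        finally:
            node["ms"] = (time.perf_counter() - start) * 1000
            self._stack.pop()

def render(node, depth=0):
    """Return the span tree as indented text lines."""
    lines = [f"{'  ' * depth}{node['name']}: {node['ms']:.1f} ms"]
    for child in node["children"]:
        lines.extend(render(child, depth + 1))
    return lines

if __name__ == "__main__":
    tracer = Tracer()
    with tracer.span("GET /checkout"):
        with tracer.span("db.query"):
            time.sleep(0.01)
        with tracer.span("payments_api.call"):  # the slow branch stands out in the tree
            time.sleep(0.05)
    print("\n".join(render(tracer.root)))
```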

Your Browser Has More Work to Do Than You’d Expect

The HTML response arrives and your browser has barely started. It parses the HTML, builds the DOM, encounters references to CSS files, JavaScript files, images, and fonts, and fires off additional requests for each. Some of those resources hold up rendering: the browser won’t paint until the stylesheets it needs have loaded, and a classic script without an async or defer attribute pauses HTML parsing while it downloads and runs. Some trigger further requests of their own (a stylesheet that references a web font, for example). A modern web page loading from scratch can involve dozens to hundreds of HTTP requests before everything is in place.
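Finding those subresources is itself a parsing job. The sketch below uses Python’s `html.parser` to list the requests a page would trigger and flag the ones that hold up the first render. The blocking rules are a deliberate simplification. Stylesheets count as blocking unless `media="print"`. Scripts with a `src` block unless they are `async`, `defer`, or `type="module"`. Images never block. Inline scripts, preload hints, and `@import` chains are ignored. The `SubresourceScanner` class is a hypothetical name.

```python
from html.parser import HTMLParser

class SubresourceScanner(HTMLParser):
    """Collects (kind, url, blocks_first_render) for each subresource tag (simplified rules)."""
    def __init__(self):
        super().__init__()
        self.resources = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)  # valueless attributes like `defer` map to None but are still keys
        if tag == "link" and "stylesheet" in (a.get("rel") or "").lower().split():
            # Simplification: only media="print" is treated as non-blocking
            blocking = (a.get("media") or "all").strip().lower() != "print"
            self.resources.append(("css", a.get("href"), blocking))
        elif tag == "script" and a.get("src"):
            # Classic scripts pause the parser; async/defer/module scripts do not
            deferred = "async" in a or "defer" in a or a.get("type") == "module"
            self.resources.append(("js", a["src"], not deferred))
        elif tag == "img" and a.get("src"):
            self.resources.append(("img", a["src"], False))

if __name__ == "__main__":
    page = """<html><head>
    <link rel="stylesheet" href="/main.css">
    <link rel="stylesheet" href="/print.css" media="print">
    <script src="/app.js"></script>
    <script src="/analytics.js" async></script>
    </head><body><img src="/hero.jpg"></body></html>"""
    scanner = SubresourceScanner()
    scanner.feed(page)
    for kind, url, blocking in scanner.resources:
        print(f"{kind:4} {url:16} {'BLOCKING' if blocking else 'non-blocking'}")
```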

Browser engines parse markup, calculate styles, build layout trees, paint, and composite layers to put pixels on your screen. All of this parsing and rendering is CPU-bound work happening on your local machine, not on the server, so a slow device can make a fast server look slow.

The counterargument

Some people will say this level of detail doesn’t matter for most practitioners. Abstract it away, use good frameworks, trust your CDN. There’s real merit to that view. Abstraction is what makes software development tractable.

But abstractions leak under pressure. Latency bugs, mysterious timeouts, cascading failures during traffic spikes, intermittent TLS errors in certain regions: these are the problems that eat engineering teams’ time, and diagnosing them requires understanding the layers beneath the abstraction. Developers who can’t reason about DNS TTLs or TCP connection reuse can spend weeks on problems that take hours for someone who can.

The straight line is an illusion worth examining

Typing a URL and hitting Enter triggers a negotiation between a dozen or more distinct systems, many of them distributed, several of them caching, all of them capable of independent failure. The experience feels atomic. The reality is deeply layered.

That gap between experience and reality isn’t an argument for everyone to become a networking expert. It’s an argument for building enough of a mental model that when something breaks, you know where to look. The internet’s reliability is an engineering achievement, not a given. Treating it as magic is fine until it isn’t.