The Simple Version

A compiler takes code written in a human-readable language and translates it into instructions a processor can execute. But calling it a translator undersells what actually happens.

The Gap Between What You Write and What Runs

When you write x = a + b in C, you are not writing a machine instruction. You are writing an intention. The compiler’s job is to figure out the most efficient way to honor that intention, and it has enormous latitude in how it does so.

Processors don’t understand variables. They understand registers, memory addresses, and a fixed set of arithmetic operations specific to their architecture. The gap between your source code and those operations is vast, and bridging it requires several distinct passes, each one transforming your code into a progressively lower-level representation.

This is why the same C program compiled for a Mac and for a Linux server produces two completely different binary files. The source is identical. The target machines speak different dialects. The compiler handles the translation both ways.

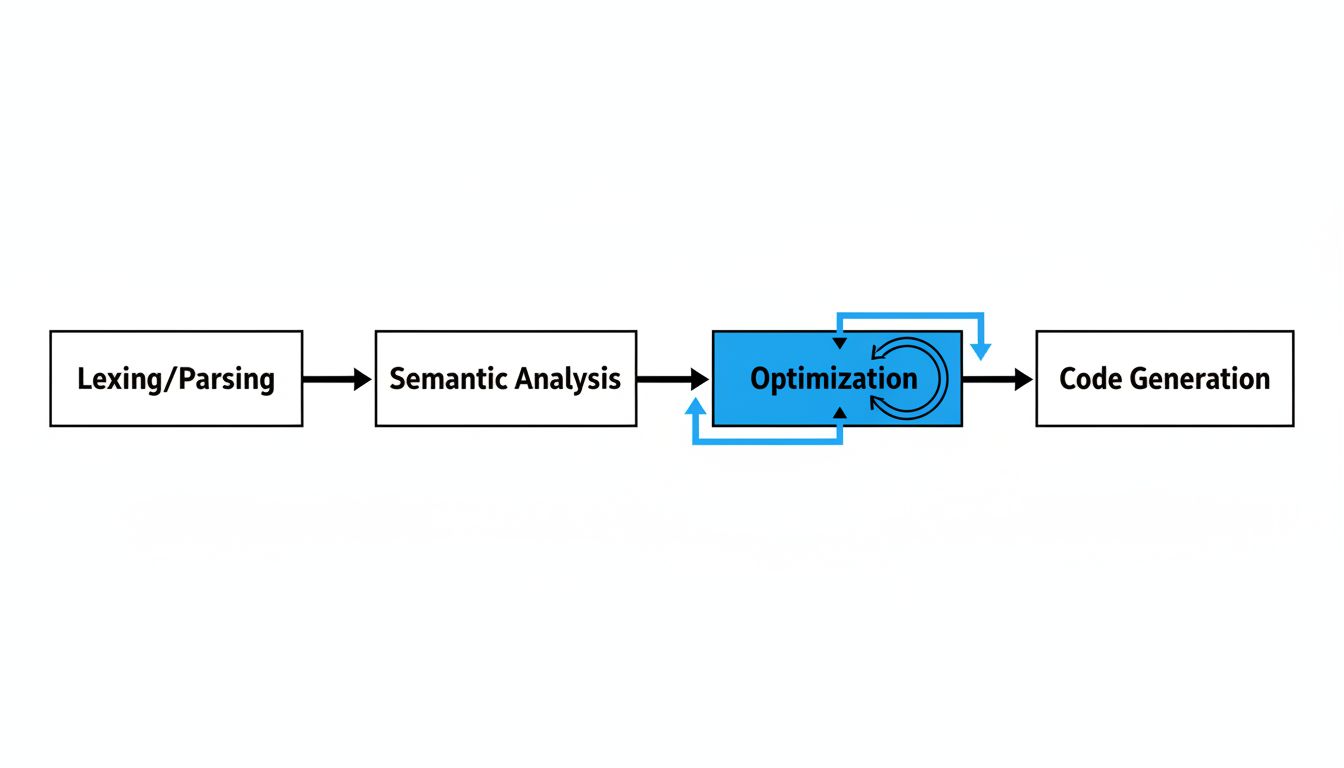

Four Stages, Each Doing Real Work

Lexing and parsing. The compiler first reads your code as raw text and breaks it into tokens: keywords, operators, identifiers, literals. Then it checks that those tokens follow grammatical rules. This is where you get syntax errors. The output is an abstract syntax tree, a data structure that captures the hierarchical relationships in your code (a function contains statements, statements contain expressions, expressions contain operators and operands).

Semantic analysis. Grammar can be valid and meaning can still be wrong. "hello" / 5 is syntactically fine in some languages and semantically incoherent. The compiler checks types, resolves variable scopes, verifies that the functions you’re calling actually exist with the signatures you’re expecting. This stage catches a category of errors that the grammar check misses entirely.

Optimization. This is where compilers earn their keep, and where the gap between what you wrote and what runs becomes dramatic. A modern optimizing compiler will inline small functions to eliminate call overhead, reorder instructions to keep the processor’s execution pipeline full, eliminate variables that exist in your source but whose values can be computed at compile time, and vectorize loops to run the same operation on multiple data elements simultaneously.

The canonical example is dead code elimination. If you write if (false) { doSomething(); }, the compiler simply removes that branch. It never appears in the binary. Compilers routinely do this for conditions that aren’t literally false but that analysis proves can never be true.

Code generation. The final stage produces actual machine instructions for the target architecture, allocates registers, handles calling conventions, and lays out the binary file in the format the operating system expects. On x86-64 Linux, that’s ELF format. On macOS, it’s Mach-O. Same logic, different packaging.

The Part That Surprises Even Experienced Programmers

Compilers are permitted to transform your code in any way that preserves observable behavior, and observable behavior is defined more narrowly than most people assume. The compiler doesn’t have to preserve the order of operations you wrote. It doesn’t have to keep variables you never read. It doesn’t have to generate a branch for every if statement if it can prove which path will be taken.

This is why your compiler rewrites your code and hides the evidence. The program that runs bears a meaningful resemblance to the program you wrote, but they are not the same thing.

GCC and Clang, the two dominant C/C++ compilers, both offer optimization levels controlled by flags like -O2 and -O3. At higher levels, the transformations become aggressive enough that debugging gets genuinely difficult. Variables disappear. Functions collapse. The call stack stops reflecting the source structure. This is not a bug. It’s the contract: observable behavior is preserved, internal structure is not.

Why This Matters If You’re Not a Compiler Engineer

Understanding compilation shapes how you reason about performance. When a programmer assumes that a function call costs something, a loop costs something, and a variable read costs something, they’re often wrong in specific and predictable ways. The compiler may have eliminated the function, unrolled the loop, and cached the variable in a register that never touches memory. Alternatively, it may have introduced costs you didn’t write, recomputing something repeatedly because it couldn’t prove two pointer values didn’t alias.

This is part of why deleting code makes software more reliable. Less code gives the compiler a clearer picture of your intent. Fewer variables, fewer branches, fewer abstractions means the optimizer has fewer constraints to reason through and more freedom to produce efficient output.

There’s also a security dimension. Compilers will eliminate what looks like unnecessary memory-clearing code (zeroing a buffer after use) if they can prove the cleared values are never read again. From a correctness standpoint, that’s a valid optimization. From a security standpoint, that buffer may have contained a password, and you wanted it gone. This class of bug has appeared in real cryptographic libraries, and it’s why security-sensitive code sometimes has to go out of its way to defeat the optimizer.

The Compiler as Collaborator

The framing of compiler-as-translator is technically accurate but misses the relationship. A translator renders your meaning faithfully in another language. A compiler negotiates with your code. It interprets your intent, applies decades of research into program optimization, and produces something that runs faster than most humans could write by hand, while remaining faithful to what your program was supposed to do.

The best programmers treat the compiler as a collaborator with known capabilities and known blind spots. They write code that makes intent clear enough for the optimizer to help. They know when an abstraction will get compiled away and when it won’t. They understand that the binary is not a recording of their code but a consequence of it.

That distinction is worth holding onto every time you write a line.