99.9% uptime sounds good. It means your service is down less than nine hours per year. Your status page stays green. Your SLA is met. Engineering celebrates.

Your users, meanwhile, experience something measurably worse, and the gap between what your metrics show and what people actually feel is where trust erodes quietly. Here is why.

1. Uptime Measures the Server, Not the User

When your monitoring pings an endpoint and gets a 200 response in 40 milliseconds, it marks the service as “up.” But a real user request doesn’t travel directly to your server and back. It traverses DNS resolvers, CDN edge nodes, load balancers, application servers, database queries, third-party API calls, and then the entire return trip, rendered by a browser on hardware you don’t control. Any one of those legs can degrade or fail without touching your uptime metric at all.
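To make the point about legs concrete, here is a minimal sketch of how per-leg availability compounds. The leg names and the 99.9% figures are illustrative assumptions, not measurements, and the calculation assumes each leg fails independently, which real systems rarely do.

```python
from math import prod

# Hypothetical per-leg availabilities (illustrative values only).
LEG_AVAILABILITY = {
    "dns": 0.999,
    "cdn_edge": 0.999,
    "load_balancer": 0.999,
    "app_server": 0.999,
    "database": 0.999,
    "third_party_api": 0.999,
}


def end_to_end_availability(legs: dict[str, float]) -> float:
    """Probability that every leg succeeds, assuming independent failures."""
    return prod(legs.values())


total = end_to_end_availability(LEG_AVAILABILITY)
print(f"End-to-end availability: {total:.2%}")  # about 99.40% for these inputs
```

Under these assumptions, six legs that each hit 99.9% deliver roughly 99.4% to the user, even though any single-endpoint check would still report 99.9%.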

Google’s guidance on Core Web Vitals draws the same line between lab data and field data: real-user performance can differ dramatically from synthetic monitoring, because synthetic tests run from controlled data centers while your actual users are on mobile connections in rural areas, on old laptops, behind corporate firewalls. Your monitoring measures something close to the best-case path. Your users take the median path, and some take the worst-case one.

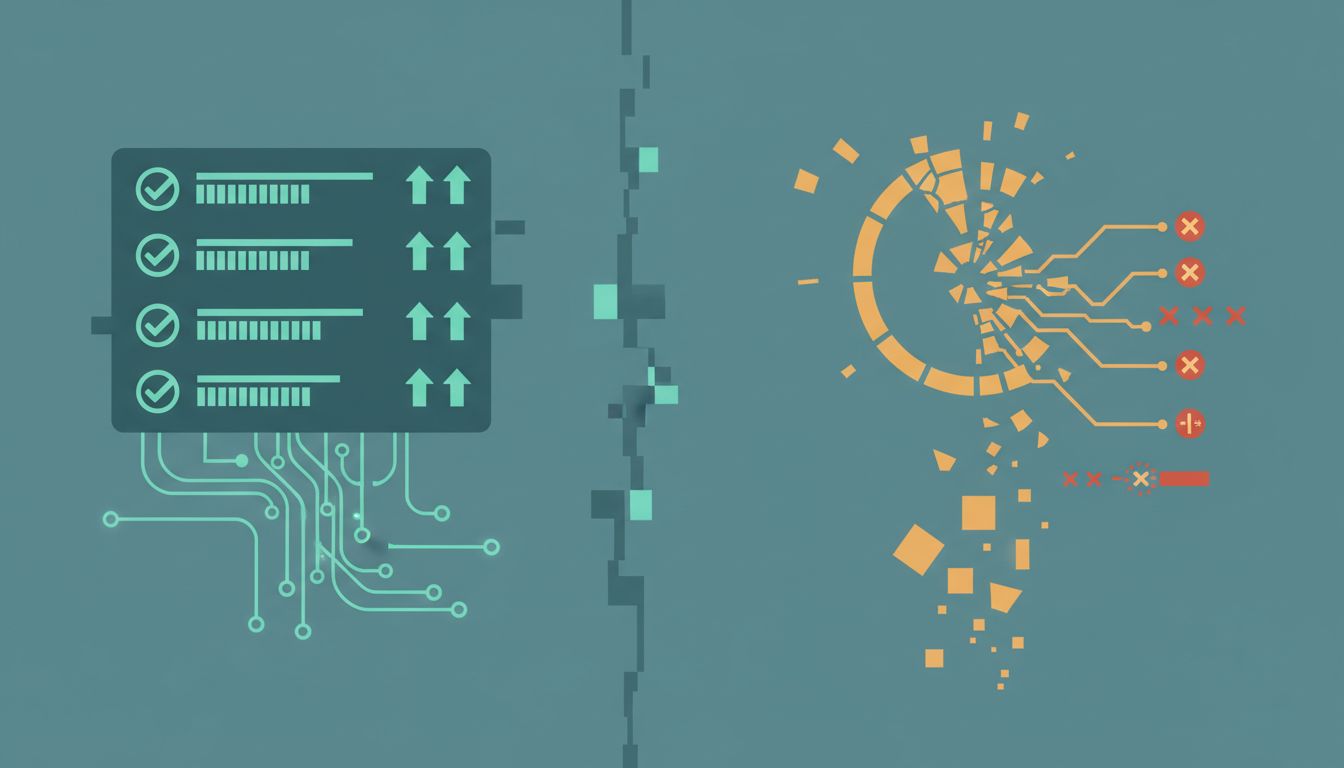

2. Partial Failures Are Invisible to Binary Uptime

Uptime is binary: up or down. Real degradation is a spectrum. A database under load might respond in 8 seconds instead of 80 milliseconds. Your monitoring still registers “up.” A payment processor might be returning errors for 15% of requests. If your health check doesn’t exercise the payment flow, the status page stays green while a meaningful fraction of users can’t complete checkout.
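The contrast between a binary check and a per-flow view can be sketched in a few lines. The flow names, the sample data, and both helper functions are hypothetical; a real system would read these outcomes from request logs or traces.

```python
from collections import defaultdict


def is_up(health_check_status: int) -> bool:
    """Binary view: the service counts as 'up' if the health endpoint returns 200."""
    return health_check_status == 200


def error_rate_by_flow(requests: list[tuple[str, bool]]) -> dict[str, float]:
    """Spectrum view: fraction of failed requests for each user flow."""
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for flow, succeeded in requests:
        totals[flow] += 1
        if not succeeded:
            failures[flow] += 1
    return {flow: failures[flow] / totals[flow] for flow in totals}


# Hypothetical sample: every browse request succeeds, 15 of 100 checkouts fail.
sample = (
    [("browse", True)] * 100
    + [("checkout", True)] * 85
    + [("checkout", False)] * 15
)

print(is_up(200))                  # True: the status page stays green
print(error_rate_by_flow(sample))  # checkout shows a 15% failure rate
```

The health check and the checkout flow are answering different questions, so both numbers can be true at the same time.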

Amazon has written publicly about the complexity of measuring service health at scale, and the core problem is that any sufficiently complex distributed system is almost always partially broken in some way. Services don’t fail completely. They limp. They time out on some paths. They serve stale data from a fallback cache. These states are genuinely hard to detect with simple availability checks, but they’re what users actually experience.

3. The Aggregation Problem Hides Spikes

Uptime is typically reported as a monthly or annual percentage, and that aggregation is where a lot of pain disappears into the statistics. A service that goes down for 43 minutes on the first of every month accumulates 516 minutes of downtime, which still rounds to 99.9% uptime for the year. But if those 43 minutes hit peak traffic, you’ve degraded the experience for your largest audience, repeatedly, on a schedule.
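The arithmetic behind those numbers is easy to check. This is a small sketch with hypothetical function names; it uses a 365-day year and ignores leap years.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_budget_minutes(target_percent: float) -> float:
    """Minutes of downtime per year allowed by an uptime target."""
    return MINUTES_PER_YEAR * (1 - target_percent / 100)


def annual_uptime_percent(downtime_minutes: float) -> float:
    """Uptime percentage for a year with the given total downtime."""
    return 100 * (1 - downtime_minutes / MINUTES_PER_YEAR)


budget = downtime_budget_minutes(99.9)          # about 525.6 minutes (8.76 hours)
scheduled_pain = annual_uptime_percent(43 * 12)  # about 99.902%

print(f"99.9% allows {budget:.1f} minutes of downtime per year")
print(f"43 minutes every month reports as {scheduled_pain:.3f}% uptime")
```

Twelve 43-minute outages fit inside the annual budget, which is why the headline number does not move.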

The same math applies to latency. A service with a median response time of 100ms might have a 99th-percentile response time of 4 seconds. The median looks great in aggregate reporting. The slowest 1% of requests, each taking 4 seconds or longer, represents real users waiting, abandoning, and churning. At a million requests per day, that’s 10,000 bad experiences daily that never show up in your headline number.
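A rough sketch of the median versus tail comparison follows, using a nearest-rank percentile. The sample is hypothetical: 2% of requests are slow so that the nearest-rank p99 lands inside the tail, and the `percentile` helper is written for illustration rather than taken from any monitoring library.

```python
def percentile(values: list[float], p: int) -> float:
    """Nearest-rank percentile for an integer p between 1 and 100."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = (p * len(ordered) + 99) // 100  # ceil(p * n / 100) using integers
    return ordered[rank - 1]


# Hypothetical sample: 980 requests at 100 ms and 20 slow requests at 4,000 ms.
latencies_ms = [100.0] * 980 + [4000.0] * 20

p50 = percentile(latencies_ms, 50)  # 100.0
p99 = percentile(latencies_ms, 99)  # 4000.0

# Scale from the text: 1% of a million daily requests.
slow_requests_per_day = 1_000_000 // 100  # 10000

print(f"p50={p50:.0f} ms, p99={p99:.0f} ms, slow per day={slow_requests_per_day}")
```

A dashboard that shows only `p50` would report 100 ms for this sample and hide the 4-second tail completely.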

4. Dependency Failures Become Your Problem

Your service might be perfectly available, but if a third-party authentication provider is slow, users see a login page that hangs. If your CDN has an issue, your static assets load in 6 seconds. If an analytics script from a vendor you integrated two years ago starts timing out, it can block page rendering entirely in some browser configurations.

You don’t own these failure modes, but users attribute them to you. They don’t know or care that the slowness came from a font provider or an A/B testing library. They know your product felt broken. As services grow more interconnected, this problem compounds. The open source dependencies running under your product introduce the same dynamic: someone else’s issue becomes your user’s problem and your churn number.

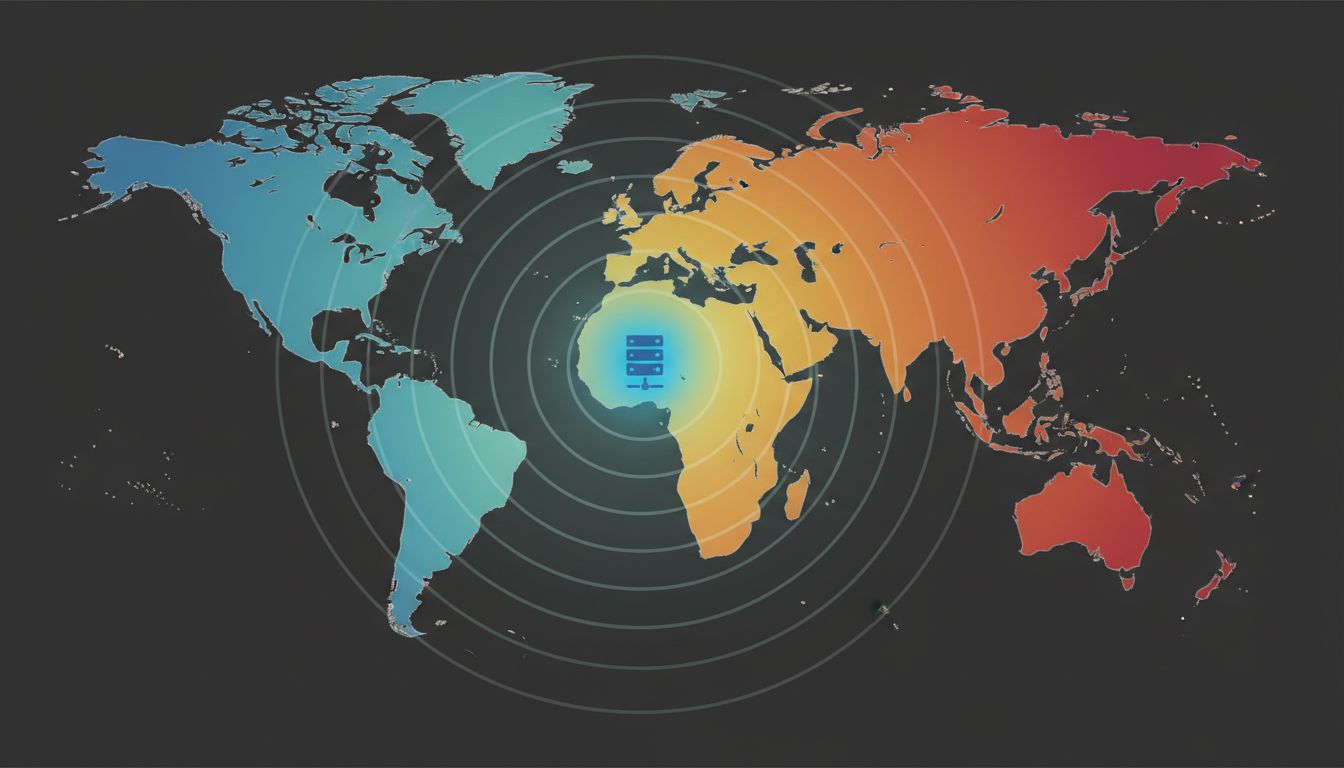

5. Geography and Network Topology Create Uneven Experiences

Most teams measure availability and latency from wherever their engineering team is located or wherever their primary data center sits. If you’re a US-based company and your servers are in Virginia, your latency to European users might be 3-4x what you measure internally. Your 99.9% uptime might be 99.9% uptime at 80ms for users on the East Coast, and 99.9% uptime at 400ms for users in Southeast Asia.
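One way to see the uneven experience is to break latency out by region instead of reporting a single global figure. The region names and latency samples below are made up for illustration; real values would come from RUM data or multi-region probes.

```python
from collections import defaultdict
from statistics import median


def median_latency_by_region(samples: list[tuple[str, float]]) -> dict[str, float]:
    """Group latency samples by region and return the median for each."""
    by_region: dict[str, list[float]] = defaultdict(list)
    for region, latency_ms in samples:
        by_region[region].append(latency_ms)
    return {region: median(values) for region, values in by_region.items()}


# Hypothetical samples: most traffic is near the data center, some is far away.
samples = (
    [("us-east", ms) for ms in (70, 75, 78, 80, 82, 85, 90)]
    + [("southeast-asia", ms) for ms in (380, 400, 420)]
)

per_region = median_latency_by_region(samples)
global_median = median(ms for _, ms in samples)

print(per_region)     # us-east: 80, southeast-asia: 400
print(global_median)  # 83.5, which looks like the us-east number
```

Because most samples come from the nearby region, the global median of 83.5 ms says almost nothing about the users seeing 400 ms.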

These aren’t edge cases to dismiss. They represent real user segments with real revenue implications. And the 99.9% number is the same for all of them, which is exactly the problem with using it as your primary reliability metric.

6. The Metric You Report Shapes What You Build

This isn’t just a measurement problem. It’s an incentive problem. If your engineering team is evaluated on uptime percentage, that’s what they optimize for. That means fast, simple health checks that pass easily. It means database queries that aren’t tested in your monitoring. It means dependency failures that don’t count against the SLA.

Teams that switch to user-centric metrics (successful checkout rate, 90th-percentile time-to-interactive for real users, error rates on critical user journeys) tend to find a different set of problems than teams watching server availability. The latency numbers engineers typically cite are often disconnected from what real network conditions look like, which compounds this. The measurement choices upstream determine what gets fixed downstream.

What Actually Helps

Synthetic monitoring from multiple geographic regions catches more than single-location pings. Real User Monitoring (RUM) captures actual experience across actual devices and networks. Error rate tracking on specific user actions, not just HTTP status codes, reveals the partial failures that availability checks miss. Percentile-based latency metrics (p95, p99) expose the tail experience that medians hide.

None of this means your 99.9% uptime number is lying. It means it’s answering a narrower question than you might think. The question it answers is: “Was your server reachable from your monitoring location?” The question your users are implicitly asking is different: “Can I actually do the thing I came here to do, in a reasonable amount of time, right now?” Closing the gap between those two questions is where reliability engineering gets genuinely interesting.