The performance review always goes the same way. Engineers profile the application, find a few slow database queries, rewrite some loops, and ship an update claiming a 40% speed improvement. Users notice nothing. The app still feels sluggish. Everyone is confused.

The reason is simple: the bottleneck was never in the code.

For the vast majority of consumer and business applications, perceived slowness is a network and rendering problem, not a computation problem. Modern CPUs execute billions of instructions per second. Your business logic, even when poorly written, typically consumes milliseconds of that capacity. What consumes hundreds of milliseconds, sometimes over a second, is the journey a request takes before and after your code touches it.

Latency lives in the gap between servers and users

A typical web request from a user in São Paulo hitting a server in Virginia travels roughly 10,000 kilometers each way. Light in fiber covers about 200,000 kilometers per second, so the round trip costs at least 100 milliseconds, and indirect routing and switching typically push it to around 130, before a single line of your application code executes. Add DNS resolution, plus a TCP handshake and a TLS handshake that each cost at least one more round trip, and you are frequently looking at 300 to 400 milliseconds of overhead on a cold request, regardless of how optimized your server-side logic is.
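The arithmetic is worth doing once by hand. The sketch below is a back-of-the-envelope model, not a measurement: the fiber speed of 200,000 km/s is an assumed round figure, the function names are made up for illustration, and the round-trip counts assume a full handshake with no connection reuse or session resumption.

```python
FIBER_KM_PER_S = 200_000  # assumption: light in glass moves at roughly 2/3 of c


def min_round_trip_ms(one_way_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * one_way_km * 1000 / FIBER_KM_PER_S


def cold_request_ms(rtt_ms: float, dns_ms: float = 0.0, tls_round_trips: int = 1) -> float:
    """Network time before the first response byte on a brand-new connection.

    Counts one round trip for the TCP handshake, tls_round_trips for TLS
    (1 for a full TLS 1.3 handshake, 2 for TLS 1.2), and one for the HTTP
    request and response. Server processing time is deliberately left out.
    """
    round_trips = 1 + tls_round_trips + 1
    return dns_ms + round_trips * rtt_ms


floor_ms = min_round_trip_ms(10_000)   # 100.0 ms: the physical floor
cold_ms = cold_request_ms(rtt_ms=130)  # 390.0 ms with a 130 ms round trip
```

Three round trips at 130 milliseconds each already lands at 390 milliseconds, and none of it is time your application could have saved.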

Google’s work on Core Web Vitals found that Largest Contentful Paint (LCP), the point at which the main content becomes visible and users perceive the page as loaded, is heavily influenced by time to first byte and resource load order, and far less by server processing time alone. Many sites that score poorly on these metrics have perfectly fast backend code. They just serve assets from a single origin, don’t prioritize critical resources, and make the browser do sequential work that could be parallel.
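To see why load order matters more than raw speed, it helps to model a page as a dependency chain. The following is a toy model with invented asset names and timings, assuming each resource can only start once everything it waits for has finished; real browsers are considerably more complicated.

```python
def finish_times(resources: dict[str, tuple[float, list[str]]]) -> dict[str, float]:
    """Return when each resource finishes loading, in ms.

    resources maps a name to (load_ms, names it must wait for). A resource
    starts only once everything it waits for has finished.
    """
    done: dict[str, float] = {}

    def finish(name: str) -> float:
        if name not in done:
            load_ms, waits_for = resources[name]
            start = max((finish(dep) for dep in waits_for), default=0.0)
            done[name] = start + load_ms
        return done[name]

    for name in resources:
        finish(name)
    return done


# Hypothetical page: the hero image is only discovered after the stylesheet,
# which is only discovered after the HTML arrives.
chained = {
    "html": (200, []),
    "css": (150, ["html"]),
    "hero.jpg": (300, ["css"]),
}
# Same assets and same download times, but the image is hinted early (for
# example with a preload) so it downloads in parallel with the stylesheet.
hinted = {
    "html": (200, []),
    "css": (150, ["html"]),
    "hero.jpg": (300, ["html"]),
}

chained_hero_ms = finish_times(chained)["hero.jpg"]  # 650.0
hinted_hero_ms = finish_times(hinted)["hero.jpg"]    # 500.0
```

Nothing got faster between the two versions. The image simply stopped waiting for work it never depended on, and the largest element appeared 150 milliseconds sooner.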

This is the uncomfortable truth: a well-optimized CDN configuration will do more for perceived performance than rewriting your ORM queries.

Third-party scripts are the hidden tax

Open the network tab on an average marketing-led product and count the requests. Analytics scripts, A/B testing frameworks, chat widgets, ad trackers, heatmap tools. It is common to find 20 to 40 third-party scripts loading on pages where engineers have spent weeks optimizing first-party code.

These scripts are not just additive. Many of them are render-blocking: the browser cannot display content until they have downloaded and executed. A single slow script from a third-party vendor sitting on an overloaded CDN can hold the entire page hostage. The engineering team has no visibility into this because their monitoring watches the application server, not the browser.

The business side adds these scripts because they are low-friction. No deployment required, just paste a tag. But each one is a performance tax that compounds, and the bill is paid by users on slower connections, a group that skews heavily toward mobile and toward markets outside wealthy urban centers.
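Putting a number on that tax is not hard once the request log is exported from the browser. The sketch below assumes a simplified record shape (`url`, `type`, `duration_ms`) that is invented for illustration and would need mapping from whatever your tooling actually exports; the hostnames are placeholders.

```python
from collections import defaultdict
from urllib.parse import urlparse


def third_party_cost(requests: list[dict], first_party_host: str) -> list[tuple[str, int, float]]:
    """Summarize third-party script requests per host.

    Returns (host, script_count, total_ms) tuples, slowest host first.
    """
    totals: dict[str, list] = defaultdict(lambda: [0, 0.0])
    for req in requests:
        host = urlparse(req["url"]).hostname or ""
        is_first_party = host == first_party_host or host.endswith("." + first_party_host)
        if req["type"] != "script" or is_first_party:
            continue
        totals[host][0] += 1
        totals[host][1] += req["duration_ms"]
    rows = [(host, count, ms) for host, (count, ms) in totals.items()]
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Hypothetical request log for a page served from shop.example.
sample = [
    {"url": "https://shop.example/app.js", "type": "script", "duration_ms": 80},
    {"url": "https://static.shop.example/vendor.js", "type": "script", "duration_ms": 60},
    {"url": "https://cdn.analytics.example/tag.js", "type": "script", "duration_ms": 220},
    {"url": "https://cdn.analytics.example/events.js", "type": "script", "duration_ms": 140},
    {"url": "https://widgets.chat.example/loader.js", "type": "script", "duration_ms": 900},
    {"url": "https://shop.example/hero.jpg", "type": "image", "duration_ms": 300},
]

report = third_party_cost(sample, "shop.example")
# [("widgets.chat.example", 1, 900.0), ("cdn.analytics.example", 2, 360.0)]
```

A table like this, sorted by cost and attached to the name of whoever requested each tag, tends to make the conversation about removal much shorter.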

Perception is not a performance metric, but it should be

There is a measurable difference between actual load time and perceived load time, and product teams almost universally optimize for the former while users respond to the latter.

Amazon and other large platforms have reported that delays as small as 100 milliseconds measurably affect conversion rates. But the mechanism is not purely about raw speed. It is about whether the interface feels responsive. A page that shows a skeleton loader immediately and populates content over 800 milliseconds feels faster than a page that shows nothing for 400 milliseconds and then renders completely. The second page is objectively faster. The first one wins.

This is a front-end architecture decision, not a backend optimization problem. Engineers who are measured on server response times have no incentive to solve it.

The counterargument

Some applications genuinely do have code-level performance problems. Data-intensive pipelines, machine learning inference, and complex financial calculations are domains where algorithmic efficiency matters enormously. If your application is doing heavy computation on the critical path, profile the code.

But this describes a small fraction of software. The median SaaS product, mobile app, or e-commerce site is not bottlenecked by CPU cycles. It is bottlenecked by round trips, payload sizes, and render-blocking resources. The argument is not that code quality is irrelevant. It is that most engineering teams are solving for the wrong constraint.

Focusing exclusively on server-side optimization when users are complaining about slowness is the technical equivalent of renovating the kitchen when the complaint is about the commute. It produces real improvements that solve the wrong problem.

Where the work actually needs to happen

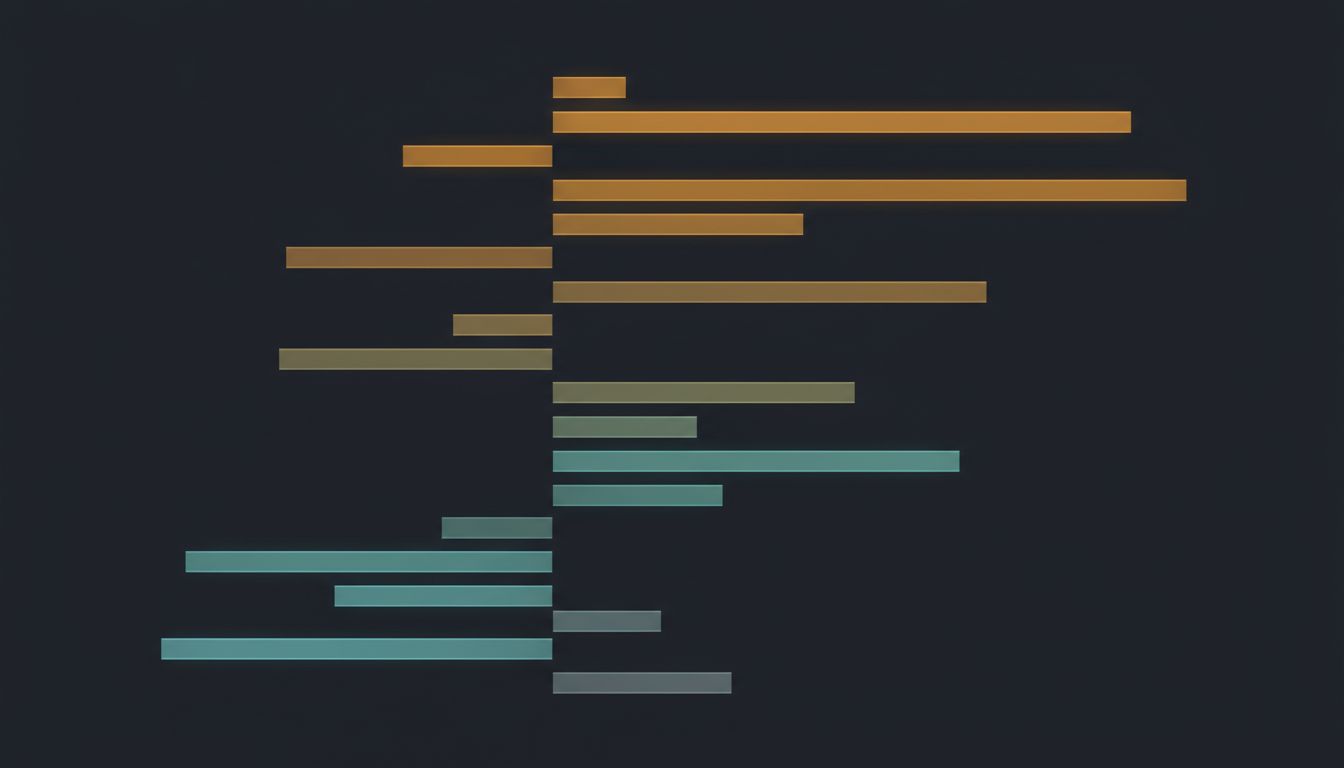

Fix the constraint that is actually binding. For most applications, that means investing in CDN strategy and edge caching, auditing and removing third-party scripts that cannot justify their performance cost, adopting progressive rendering patterns so users see something immediately, and measuring performance from the user’s browser rather than from the server.
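Measuring from the browser changes which number you look at. As a rough sketch, suppose browsers beacon their page load times back to you and you compare the 75th percentile, the threshold Google uses when assessing Core Web Vitals, against what the server reports for the same requests. All figures below are invented for illustration, and the nearest-rank percentile is one of several reasonable definitions.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


# Hypothetical numbers, all in milliseconds: server response times, and page
# load times reported by browsers for the same requests.
server_ms = [40, 45, 50, 55, 60, 65, 70, 80]
browser_ms = [900, 1100, 1300, 1800, 2400, 3100, 4200, 6500]

server_p75 = percentile(server_ms, 75)    # 65
browser_p75 = percentile(browser_ms, 75)  # 3100
```

A dashboard built on the first list says everything is fine. The second list is what a quarter of your users are actually waiting through.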

The fastest code is the code you never run, and the fastest request is the one that never crosses an ocean. Get the assets closer to the user, get the critical content rendering before everything else loads, and stop treating the network as someone else’s problem.

Your users are not sitting inside your data center. Your code is. That asymmetry is the whole problem.