A green checkmark on your CI pipeline doesn’t mean your software works. It means your tests passed. Those are not the same thing, and conflating them is costing teams real money and real users.

The modern deployment pipeline is an engineering marvel wrapped around a fundamental misconception. We’ve built sophisticated machinery (canary releases, blue-green deployments, automated rollbacks) and treated the output of that machinery as proof of correctness. It isn’t. It’s proof of consistency: the code does what it did before, in the conditions we anticipated. Production doesn’t care about your anticipations.

Your tests are a model of your users. Your users are not.

Every test suite encodes assumptions: request rates, data shapes, concurrency patterns, the specific sequence of actions a user takes before hitting a particular endpoint. Those assumptions simplify reality, and simplifications fail at the edges.

The problem isn’t that developers write bad tests. The problem is that tests, by definition, can only cover what you thought to test. Production surfaces what you didn’t think of. The bugs that only appear when a million people use your software at once aren’t bugs your test suite can find, because your test suite doesn’t have a million people. A single integration test for a database query tells you nothing about what happens when five hundred connections arrive simultaneously from a fleet of underpowered Lambda functions.
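To make the contrast concrete, here is a small simulation, not a benchmark. It models a connection pool as a semaphore and compares one caller with five hundred simultaneous ones. The pool size, query duration, and timeout are hypothetical values chosen only to make the effect visible.

```python
import asyncio

# All three numbers are assumptions for illustration, not measurements.
POOL_SIZE = 20          # connections the database will accept at once
QUERY_SECONDS = 0.05    # how long each query holds a connection
ACQUIRE_TIMEOUT = 0.12  # how long a caller waits before giving up


async def run_query(pool):
    """Return True if a connection was obtained before the timeout."""
    try:
        await asyncio.wait_for(pool.acquire(), timeout=ACQUIRE_TIMEOUT)
    except asyncio.TimeoutError:
        return False
    try:
        await asyncio.sleep(QUERY_SECONDS)  # stand-in for the real query
        return True
    finally:
        pool.release()


async def simulate(callers):
    """Run `callers` simultaneous queries; return (succeeded, timed_out)."""
    pool = asyncio.Semaphore(POOL_SIZE)
    results = await asyncio.gather(*(run_query(pool) for _ in range(callers)))
    return results.count(True), results.count(False)
```

Under these assumed numbers, `asyncio.run(simulate(1))` succeeds, which is all a single integration test observes. `asyncio.run(simulate(500))` should leave many callers timed out while waiting for a connection, even though the query itself never changed.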

The gap between your test environment and production isn’t a tooling problem. It’s an epistemological one. You don’t know what you don’t know, and your tests only capture what you do.

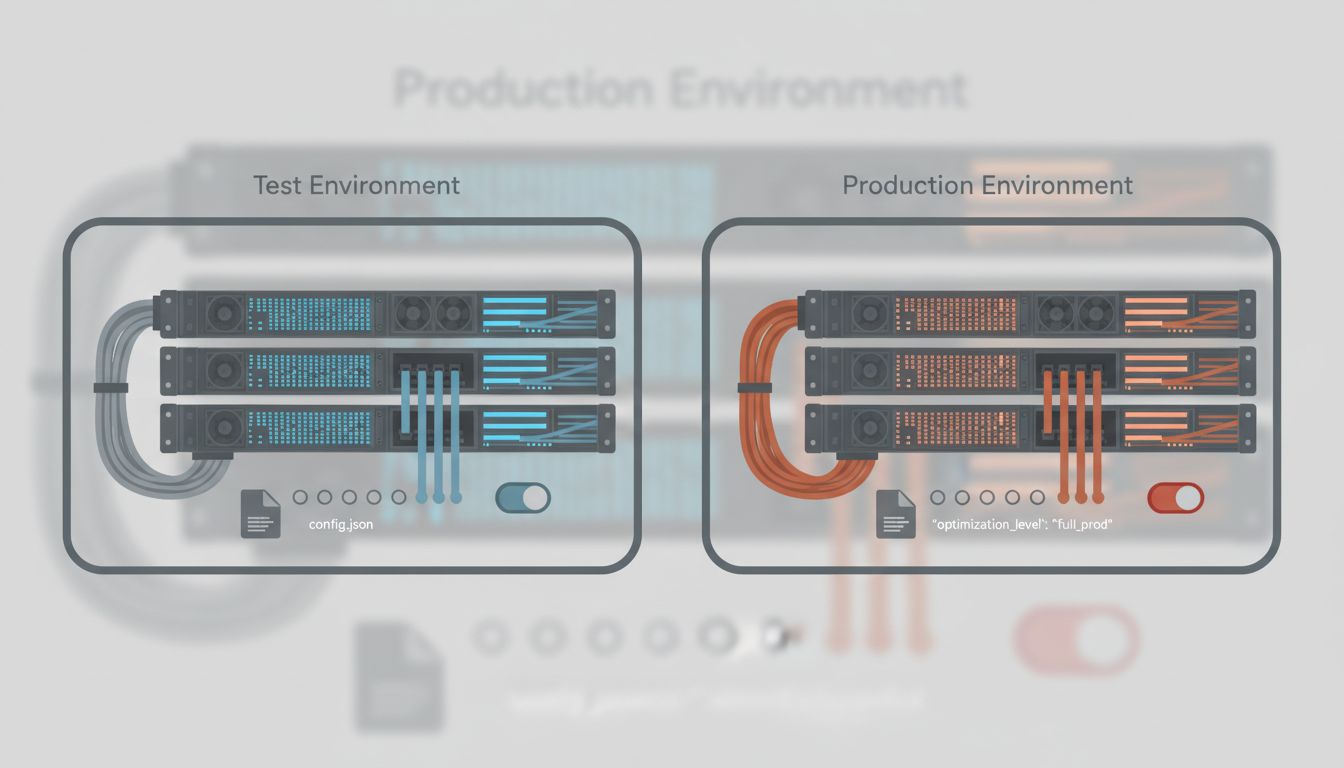

Configuration is code, but it rarely gets tested like code.

A significant share of production incidents trace back not to application logic but to configuration: environment variables that differ between staging and production, feature flags left in ambiguous states, infrastructure settings that nobody touched in two years and everyone forgot existed.

The deployment succeeded because the application code was correct. The application code was deployed into an environment that wasn’t. These are separate systems with separate failure modes, and most pipelines only validate one of them rigorously.

Infrastructure-as-code tooling has improved this story considerably, but the discipline of treating config with the same rigor as application logic remains uneven. Teams run thousands of unit tests on a function that formats a date string and zero tests on the environment variable that determines which payment processor receives real transactions.
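One way to close that gap is to give configuration its own checks. The sketch below validates a mapping of environment variables, including a cross-field rule. The variable names (`APP_ENV`, `PAYMENT_PROCESSOR`), the allowed values, and the rules themselves are hypothetical, invented here for illustration.

```python
# Hypothetical names and values, for illustration only.
ALLOWED_ENVS = {"staging", "production"}
ALLOWED_PROCESSORS = {"sandbox", "live"}


def validate_config(env):
    """Return a list of human-readable problems found in the mapping."""
    problems = []

    app_env = env.get("APP_ENV")
    if app_env not in ALLOWED_ENVS:
        problems.append(
            f"APP_ENV must be one of {sorted(ALLOWED_ENVS)}, got {app_env!r}"
        )

    processor = env.get("PAYMENT_PROCESSOR")
    if processor not in ALLOWED_PROCESSORS:
        problems.append(
            f"PAYMENT_PROCESSOR must be one of {sorted(ALLOWED_PROCESSORS)}, "
            f"got {processor!r}"
        )

    # Cross-field rules: the environment and the processor must agree.
    if app_env == "production" and processor == "sandbox":
        problems.append("production must not use the sandbox processor")
    if app_env == "staging" and processor == "live":
        problems.append("staging must not send transactions to the live processor")

    return problems
```

A pipeline step could call `validate_config(os.environ)` against the target environment and fail the deployment if the returned list is not empty. The same function can be unit tested with plain dictionaries, so the config rules get the same treatment as the date formatter.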

Observability gaps mean you’re flying blind after the green light.

Here’s a scenario that plays out constantly. Deployment completes, metrics look normal, the on-call engineer signs off. Then, forty minutes later, a customer reports something broken. The team looks at dashboards and sees nothing wrong, because the thing that’s wrong isn’t what the dashboards measure.

Most monitoring is retrospective and narrow. You instrument the things you’ve worried about before. The new failure mode you just introduced is, by definition, not one of those things. Error rates stay flat because the errors are being swallowed silently. Latency looks fine because the slow path only triggers for users with a specific account age and a specific locale combination. The p99 doesn’t move because the affected cohort is small.

Good observability requires making your system legible in ways you haven’t anticipated needing. That means structured logs, distributed traces, and the ability to ask arbitrary questions of your production data after something goes wrong. Most teams have monitoring. Far fewer have genuine observability, and the difference matters enormously when you’re trying to understand a failure you didn’t predict.
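As a rough sketch of the structured-logging part, the code below uses Python’s standard `logging` module to emit one JSON object per event, with context fields attached, and records a failure instead of swallowing it. The logger name `checkout`, the `render_price` function, the field names, and the `xx-XX` locale are all hypothetical stand-ins.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record):
        payload = {"level": record.levelname, "event": record.getMessage()}
        # Fields passed via extra={"context": {...}} land on the record.
        payload.update(getattr(record, "context", {}))
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload, sort_keys=True)


def build_logger(stream=sys.stdout):
    logger = logging.getLogger("checkout")  # hypothetical service name
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def render_price(logger, locale, account_age_days):
    """Hypothetical request handler that logs its outcome with context."""
    context = {"locale": locale, "account_age_days": account_age_days}
    try:
        if locale == "xx-XX":  # stand-in for a path nobody anticipated
            raise ValueError("no price format for locale")
        logger.info("price_rendered", extra={"context": context})
        return True
    except ValueError:
        # Record the failure with its context instead of swallowing it.
        logger.exception("price_render_failed", extra={"context": context})
        return False
```

Because every event carries `locale` and `account_age_days`, a question like "which cohort is failing?" can be asked after the fact, without having predicted that those two fields would matter together.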

The social dynamics of deployment amplify all of this.

Deployments carry organizational pressure. There’s a ticket to close, a sprint to complete, a product manager waiting on a feature, a customer escalation that this release supposedly fixes. That pressure compresses the careful thinking that catches the edge cases.

When a deployment is also the resolution to an incident, the pressure is even more acute. Teams rush a fix into production to stop the bleeding, and sometimes the fix introduces a new failure while patching the old one. The pipeline turns green. The incident is marked resolved. The new problem surfaces at 2 a.m.

This isn’t a process failure in isolation. It’s a process failure enabled by the false confidence that a passing pipeline provides. The green checkmark signals safety. It doesn’t provide it.

The counterargument

Someone will say: this is what canary deployments and progressive rollouts are for. Ship to one percent of traffic, watch for anomalies, expand if things look clean. This is genuinely good practice, and it does catch a meaningful category of production failures before they affect everyone.

But it doesn’t dissolve the underlying problem. Canary deployments are limited by the same observability gaps described above. If you don’t know what to measure, watching one percent of traffic doesn’t tell you much. And they don’t address configuration failures, which often apply uniformly from the first request. A misconfigured environment variable hits your canary cohort and your full production fleet at exactly the same rate.
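The configuration point can be shown with a toy canary gate. This is a simplified sketch of a relative comparison, assuming a hypothetical rule that the canary passes when its error rate is no more than 1.5 times the baseline rate. Real canary analysis tools vary, and the numbers below are invented.

```python
def canary_looks_healthy(baseline_errors, baseline_requests,
                         canary_errors, canary_requests, max_ratio=1.5):
    """Pass the canary if its error rate is within max_ratio of baseline."""
    if baseline_requests <= 0 or canary_requests <= 0:
        raise ValueError("request counts must be positive")
    baseline_rate = baseline_errors / baseline_requests
    canary_rate = canary_errors / canary_requests
    return canary_rate <= baseline_rate * max_ratio


# A code regression that only the canary runs: the gate catches it.
code_regression = canary_looks_healthy(10, 10_000, 5, 100)

# A shared misconfiguration that breaks both cohorts equally: the gate passes.
shared_misconfig = canary_looks_healthy(500, 10_000, 5, 100)
```

In the second call both cohorts fail five percent of requests, so the comparison sees no difference between them and reports the canary as healthy.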

Progressively shipping broken software to progressively more users is an improvement over shipping it to all users at once. It’s not a substitute for understanding why production keeps catching things your pipeline misses.

The deployment pipeline is not the problem. The problem is the story we tell ourselves about what it guarantees. Passing tests are evidence that your code is internally consistent. They are not evidence that your code is correct, complete, or safe to run in an environment you don’t fully control.

Fix the gap by investing in genuine observability, treating configuration as a first-class testable artifact, and building a culture that stays skeptical of the green checkmark. The checkmark means you can proceed. It doesn’t mean you’re done.