The Art of Convincing Software It’s Somewhere Else

Somewhere in an AWS data center, a virtual machine believes it is the sole occupant of a server with 16 CPU cores, 64 gigabytes of RAM, and a dedicated network interface. It is wrong on every count. That hardware is shared among dozens of other virtual machines, each holding the same confident, incorrect belief. The entire architecture of modern cloud computing rests on this productive deception.

Virtualization is, at its core, an interposition problem. You want software to behave as though it controls hardware it doesn’t actually control. The hypervisor, the thin layer of software sitting between the guest operating system and the physical machine, intercepts every attempt the guest makes to touch real hardware and responds with a convincing simulation. In normal operation the guest OS never notices the difference, and nothing in its ordinary interaction with the hardware gives it a reason to look.

This is not a new trick. IBM’s CP-40, a research control program for a modified System/360 Model 40 mainframe, was running virtual machines in 1967, years before the personal computer existed. What changed is that the trick became cheap enough to run at planetary scale.

Why Hardware Had to Be Tricked, Not Rewritten

The elegance of virtualization is that it doesn’t require modifying the software being virtualized. Windows doesn’t need to know it’s running inside a hypervisor. Linux doesn’t need a special mode. They issue the same privileged instructions they always have, and the hypervisor quietly intercepts those instructions before they reach actual silicon.

The technical challenge this created for x86 was real. Intel’s architecture, which had evolved organically since 1978, contained a class of instructions that were sensitive, meaning they behaved differently depending on whether they were executed in privileged or unprivileged mode, but that did not trap when run in unprivileged mode. POPF is the standard example: executed in user mode, it silently ignores an attempt to change the interrupt flag instead of faulting. A proper virtual machine monitor needs to intercept all sensitive instructions. On x86, that was initially impossible without patching the guest.

VMware’s engineers solved this in the late 1990s with binary translation: the hypervisor scanned guest code before execution and rewrote problematic instructions on the fly. It worked, but it imposed overhead. The cleaner solution arrived in 2005 and 2006 when Intel and AMD shipped VT-x and AMD-V, hardware extensions explicitly designed to support virtualization. Sensitive instructions now trapped cleanly into the hypervisor. The performance gap between virtual and bare-metal narrowed dramatically, and the cloud became economically viable.
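The trap-and-emulate pattern described above can be sketched as a toy model. This is an illustration under stated assumptions, not how any real hypervisor is written: the instruction names (`cli`, `sti`, `load_cr3`, `add`), the `VirtualCPU` fields, and every function name here are hypothetical stand-ins chosen for the example.

```python
from dataclasses import dataclass


class PrivilegedTrap(Exception):
    """Raised when deprivileged guest code attempts a sensitive instruction."""

    def __init__(self, op, arg):
        super().__init__(op)
        self.op = op
        self.arg = arg


@dataclass
class VirtualCPU:
    # Per-guest copy of privileged state. The real machine state is never touched.
    interrupts_enabled: bool = True
    page_table_base: int = 0
    acc: int = 0          # ordinary, unprivileged register
    trap_count: int = 0   # how often the hypervisor had to step in


# Hypothetical set of sensitive instructions for this toy machine.
SENSITIVE_OPS = {"cli", "sti", "load_cr3"}


def execute_on_hardware(vcpu, op, arg):
    """Stand-in for the physical CPU running guest code in a deprivileged mode."""
    if op in SENSITIVE_OPS:
        # With hardware support, every sensitive instruction traps.
        raise PrivilegedTrap(op, arg)
    if op == "add":
        vcpu.acc += arg   # harmless instructions run directly, with no hypervisor involvement
    else:
        raise ValueError(f"unknown instruction: {op}")


def emulate(vcpu, trap):
    """Hypervisor side: apply the instruction's effect to the guest's virtual state."""
    vcpu.trap_count += 1
    if trap.op == "cli":
        vcpu.interrupts_enabled = False
    elif trap.op == "sti":
        vcpu.interrupts_enabled = True
    elif trap.op == "load_cr3":
        vcpu.page_table_base = trap.arg


def run_guest(program):
    """Run a list of (op, arg) pairs, emulating whatever traps."""
    vcpu = VirtualCPU()
    for op, arg in program:
        try:
            execute_on_hardware(vcpu, op, arg)
        except PrivilegedTrap as trap:
            emulate(vcpu, trap)
    return vcpu
```

Running `run_guest([("add", 2), ("cli", None), ("load_cr3", 0x1000), ("add", 3)])` leaves the guest believing it disabled interrupts and loaded a page table, while only its virtual state changed. The pre-VT-x problem corresponds to a sensitive instruction that is missing from the trapping set and silently does the wrong thing.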

The Memory Problem Nobody Talks About Enough

CPU virtualization gets most of the attention, but memory management is where virtualization gets genuinely complicated. Every guest OS thinks it owns a contiguous block of physical memory starting at address zero. Multiple guests on the same machine all believe this simultaneously. Someone has to maintain the fiction.

The hypervisor does this by maintaining a second layer of address translation. The guest thinks it’s translating virtual addresses to physical addresses. The hypervisor takes what the guest calls “physical” addresses and translates them to actual host physical addresses. For years this was done in software with shadow page tables: the hypervisor kept its own copy of each guest page table, mapping guest virtual addresses straight to host physical ones, and had to intercept every guest page-table update to keep that copy in sync. It was expensive.

Intel and AMD solved it with hardware, again. Extended Page Tables (EPT) on Intel and Rapid Virtualization Indexing (RVI) on AMD offload this second translation layer to the CPU’s memory management unit. The hardware walks two page tables instead of one. The performance impact shrank from severe to nearly negligible for most workloads.
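The two-stage lookup can be sketched in a few lines. This is a simplified model, assuming single-level page tables represented as dictionaries and a 4 KiB page size; real hardware walks multi-level tables at both stages, and the function names here are made up for the example.

```python
PAGE_SIZE = 4096  # assumed page size for the sketch


def translate(addr, page_table):
    """One translation stage: map a page number to a frame number, keep the offset."""
    page, offset = divmod(addr, PAGE_SIZE)
    if page not in page_table:
        raise LookupError(f"page fault at {addr:#x}")
    return page_table[page] * PAGE_SIZE + offset


def guest_to_host(guest_virtual, guest_page_table, nested_page_table):
    """Two-stage translation, as EPT/RVI-style hardware performs it.

    Stage 1 uses the table the guest OS controls (guest virtual to guest "physical").
    Stage 2 uses the table the hypervisor controls (guest "physical" to host physical).
    """
    guest_physical = translate(guest_virtual, guest_page_table)
    return translate(guest_physical, nested_page_table)
```

Two guests can use identical guest page tables, each convinced it owns physical page 7, and still land on different host frames because each has its own second-stage table.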

The consequence worth understanding is that memory, not CPU, is usually the real constraint on how many virtual machines a physical host can run. CPU time is interruptible and schedulable. Memory is not. If you pack too many VMs onto a host, they genuinely compete for physical RAM in ways that are hard to hide from the guests. The hypervisor can try tricks like memory ballooning (asking guest VMs to voluntarily return unused pages) and transparent page sharing (deduplicating identical memory pages across guests), but there are limits. Cloud providers know these limits intimately, and they price accordingly.
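Transparent page sharing is the easier of the two tricks to illustrate. The sketch below deduplicates pages by content hash. It is a minimal model under assumptions: the `(guest_name, page_number)` keys and the `share_pages` function are hypothetical, and a real implementation would also compare candidate pages byte for byte and mark shared pages copy-on-write so that one guest’s write cannot leak into another.

```python
import hashlib


def share_pages(guest_pages):
    """Deduplicate identical pages across guests.

    guest_pages maps (guest_name, page_number) -> bytes content.
    Returns (mapping, store): mapping points each guest page at a content
    digest, and store holds exactly one copy of each distinct page.
    """
    store = {}
    mapping = {}
    for key, content in guest_pages.items():
        digest = hashlib.sha256(content).digest()
        store.setdefault(digest, content)  # keep only the first copy seen
        mapping[key] = digest
    return mapping, store
```

If two guests each hold a zero-filled page and one holds a page of distinct data, `store` ends up with two entries backing three guest pages. The saving depends entirely on how much identical content the guests happen to hold.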

What Containers Changed, and What They Didn’t

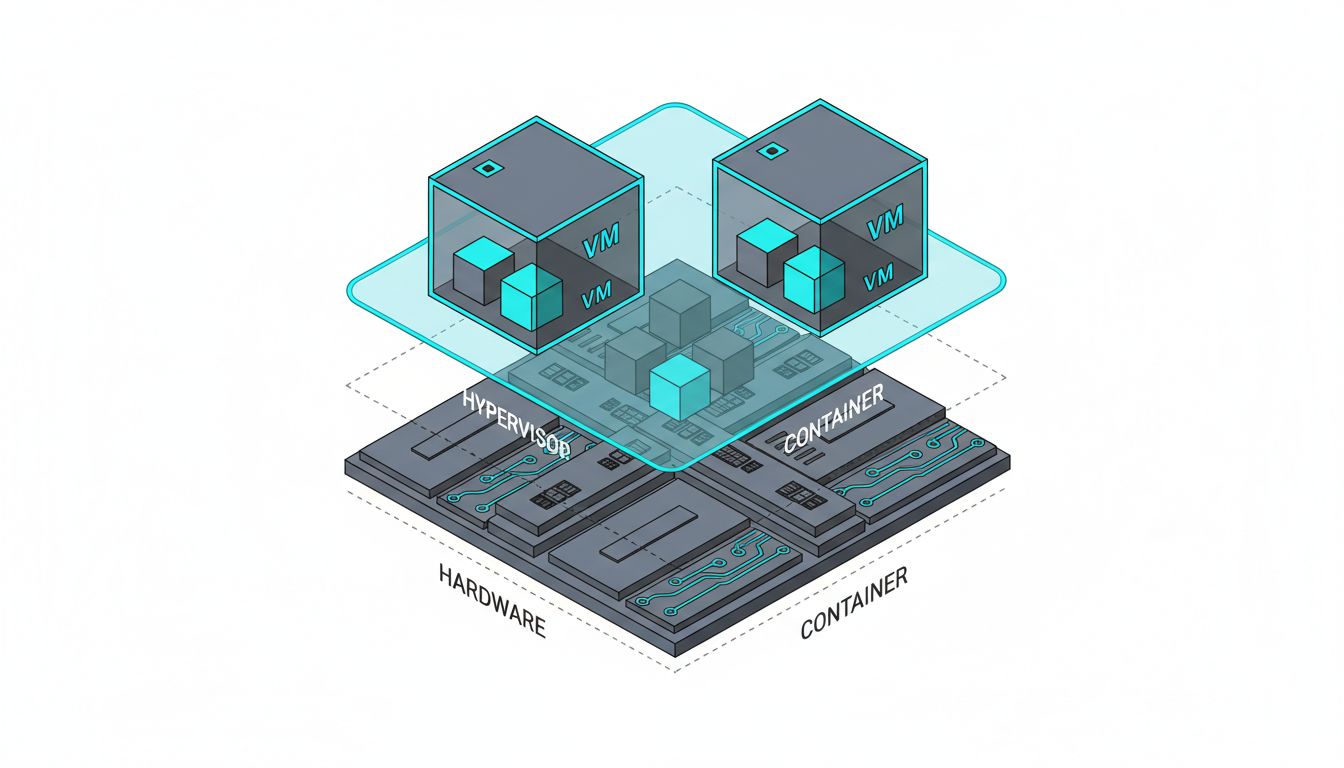

The rise of containers, specifically Docker after 2013 and Kubernetes thereafter, prompted a long argument about whether virtual machines were obsolete. The argument missed the point. Containers and VMs solve related but distinct problems.

A container shares the host kernel. It gets isolated namespaces for processes, networking, and filesystems, but it is not running its own operating system. This makes containers fast to start (milliseconds versus seconds), small in memory footprint, and efficient. It also means that if you need to run Linux containers, you need a Linux kernel. You cannot run a Windows container natively on a Linux host, and the container’s isolation guarantees are weaker than a VM’s because the kernel attack surface is shared.

Virtual machines never went away because containers need to run somewhere, and that somewhere is often a VM. AWS’s Firecracker, the microVM technology the company open-sourced in 2018, was built specifically to run Lambda functions and Fargate containers. By the project’s own figures, Firecracker can boot a minimal Linux microVM to user-space code in as little as 125 milliseconds, with less than 5 MiB of memory overhead per microVM. It is a VM optimized for running containers. The two technologies ended up layered rather than competing.

The Lie That Built the Internet’s Infrastructure

Virtualization’s success rests on an insight that sounds almost philosophical: if software cannot detect that it’s being deceived, the deception is functionally indistinguishable from reality. This is true until it isn’t, and the edge cases are where things get interesting.

Timing attacks can, in principle, reveal virtualization. A guest can measure the latency of certain operations and notice that they don’t match the timings of real hardware. Cloud providers have various mitigations, none of them perfect. The x86 CPUID instruction will also, in most configurations, report a hypervisor-present bit and return a hypervisor vendor string when the right leaf is queried, so a determined program can detect that it’s virtualized. But these are edge cases that most software never explores. The deception holds because almost nobody is trying to break it.
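A guest rarely needs to issue CPUID itself to see this. On x86 Linux, the kernel exposes the CPUID hypervisor-present bit as a `hypervisor` entry on the `flags` line of `/proc/cpuinfo`. The sketch below checks for it. The function name is an assumption for illustration, and the check is only meaningful on x86 Linux guests whose hypervisor chooses to set the bit.

```python
def reports_hypervisor(cpuinfo_text):
    """Return True if the first 'flags' line in /proc/cpuinfo-style text lists 'hypervisor'."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            # Flags are whitespace-separated tokens; match the whole token only.
            return "hypervisor" in value.split()
    return False
```

On a Linux machine this would be called as `reports_hypervisor(open("/proc/cpuinfo").read())`. A `False` result does not prove the machine is physical, since a hypervisor can be configured to hide the bit.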

The deeper point is that this is how a relatively small number of physical machines serves a very large fraction of the world’s computing workloads. The utilization of a bare-metal server running a single application is often low, sometimes embarrassingly so. Virtualization turns that wasted headroom into actual capacity. Fast programs win by wasting less work, and hypervisors apply the same logic to infrastructure: the most efficient data center is the one that wastes the least of the hardware it already has.

The server that never existed has become the server that runs everything. The fiction was never about fooling people. It was about making the hardware go further than it would have otherwise, and on that measure, the trick has worked beyond any reasonable expectation.