The simple version

The most reliable computer systems in the world are kept reliable not by preventing failures, but by engineering them to fail constantly in small, controlled ways before those failures can become large, uncontrolled ones.

Why “reliability” is the wrong goal

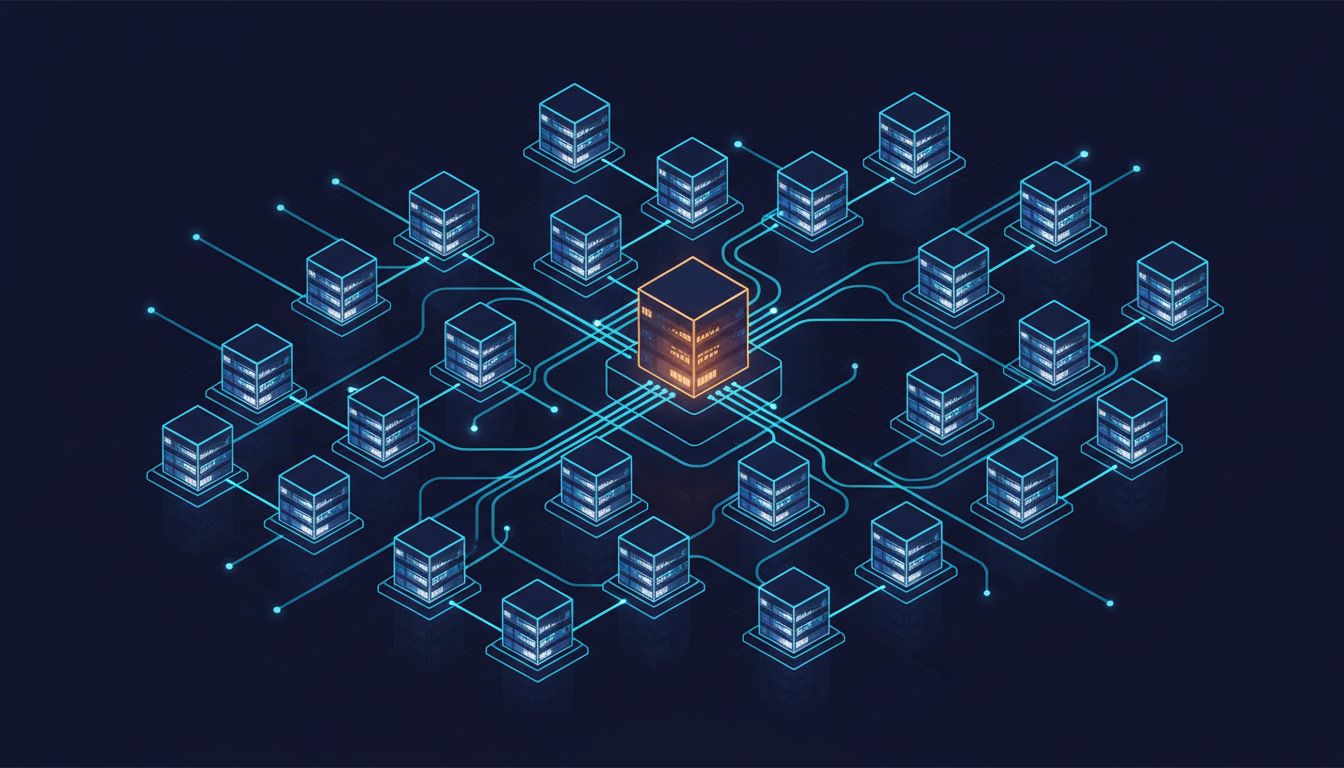

If you asked most people how a tech company keeps its servers running, they’d describe something like a fortress: redundant hardware, careful monitoring, engineers on call to prevent anything from going wrong. That mental model is wrong, and the gap between it and reality explains why some companies can take a disk failure in stride while others lose millions of dollars to an outage.

The key insight, developed seriously over the past two decades of cloud infrastructure, is that in any system large enough to matter, failure is not an edge case. It is the normal condition. Netflix, at any given moment, is running on tens of thousands of servers. The question is never whether some of them will fail today. The question is whether the failure of those servers will affect the people trying to watch a movie.

The engineers who build these systems have largely stopped trying to make individual components more reliable. Instead, they build systems that expect components to fail and continue working anyway.

What “chaos engineering” actually means

In 2010, Netflix began deliberately killing servers in its own production environment, meaning the actual infrastructure serving real customers, not a test environment. The tool they built for this, Chaos Monkey, would randomly terminate virtual machine instances during business hours.

The logic was counterintuitive but sound: if your system can’t survive a randomly terminated server on a Tuesday afternoon with engineers at their desks, it certainly can’t survive one at 2 a.m. on a holiday weekend. By forcing failures constantly in conditions where people could respond, Netflix built teams and architecture that could handle failures in conditions where no one was paying attention.

This practice, now called chaos engineering, has spread broadly. Amazon, Google, Microsoft, and many smaller companies run some variation of intentional failure injection. The discipline even has a formal definition: it’s the practice of experimenting on a system to build confidence in its ability to withstand turbulent conditions.

The point is not to break things for sport. The point is that a system that has never been broken in testing is a system whose breaking points are unknown. Unknown breaking points in infrastructure serving millions of users is a liability, not a neutral fact.

The architecture that makes this possible

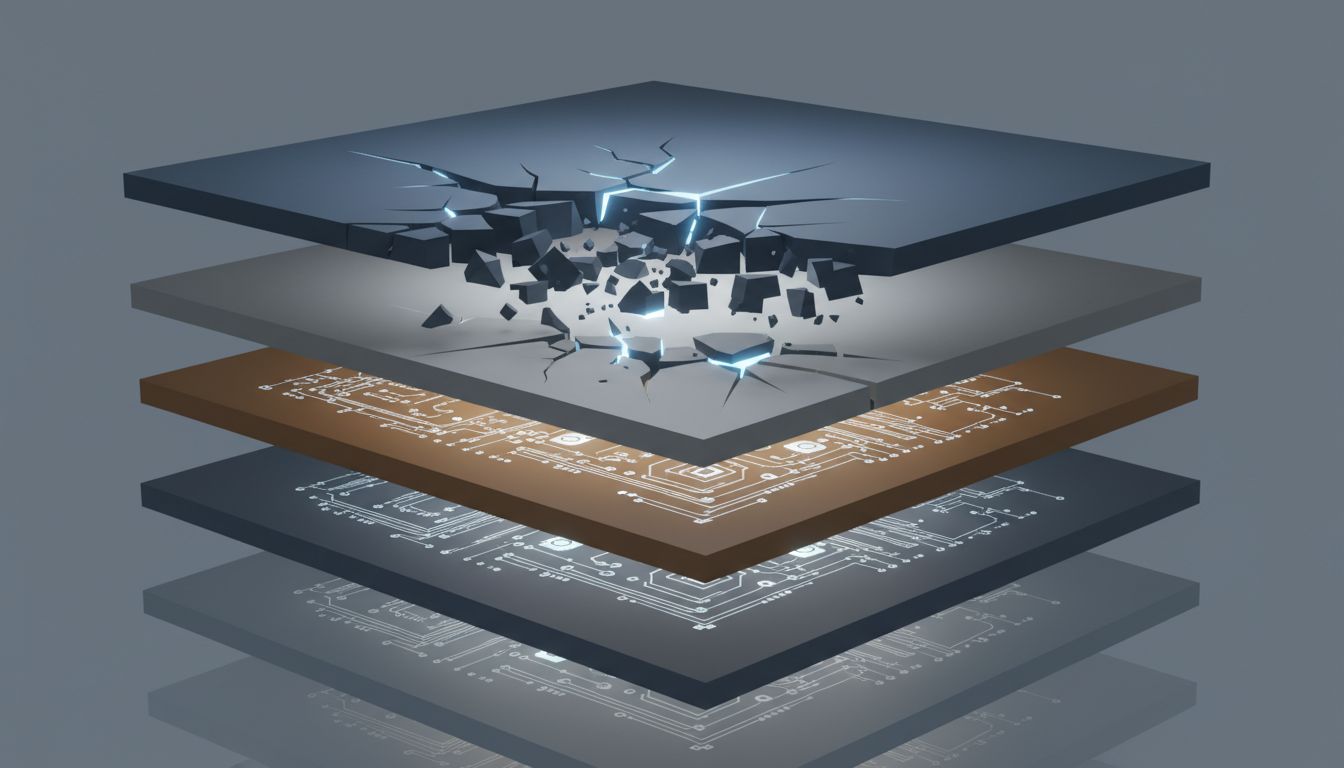

Deliberate failure only works if the underlying architecture is built for it. Two concepts matter most here.

The first is redundancy without single points of failure. A single point of failure is any component whose failure takes down the whole system. The goal is to eliminate them entirely. This means running multiple copies of every critical service, distributing them across different physical machines, different data centers, and ideally different geographic regions. When Netflix’s engineers kill a server, traffic automatically routes to other servers. The user sees nothing.

The second concept is graceful degradation. Not every failure can be fully hidden. When Netflix’s recommendation engine has a problem, instead of crashing the app entirely, the system falls back to a generic list of popular content. The user’s experience gets worse, but it doesn’t stop. This is a deliberate design choice made in advance: what does this service do when part of it is broken? The answer is almost never “display an error message and stop working.”

These two ideas together, redundancy and graceful degradation, are what allow failure injection to be a useful practice rather than a destructive one. You can only learn from breaking something if the breakage is survivable.

The cost calculation that makes it worth it

Building systems this way is expensive. Redundancy means paying for more servers than you’d need if everything worked perfectly. Chaos engineering requires engineering time to design experiments, analyze results, and fix the weaknesses they expose. Graceful degradation requires thinking carefully about every failure mode before it happens.

Companies invest in this because the alternative is more expensive. Amazon has estimated, in various public disclosures, that each hour of downtime costs them figures in the tens of millions of dollars. For companies at that scale, engineering hours spent on reliability have a clear return. But the math also works at smaller scales than most people assume. A company with a few million in annual recurring revenue can lose a meaningful portion of it, plus customer trust, in a single extended outage.

The deeper reason this approach wins, though, is organizational. Teams that run chaos experiments regularly develop intuitions about failure modes that teams who avoid failure never build. When an unexpected outage happens, the team that has practiced responding to failures responds faster and more effectively. The practice is as much about training engineers as it is about finding bugs.

The version you can apply without Netflix’s budget

The underlying principles don’t require the infrastructure of a major cloud company.

Start by identifying your single points of failure. Most systems have obvious ones: a single database, a single API key, a single server running a critical job. Documenting these is the first step toward eliminating them.

Then ask what happens when each component fails. Not “if” but “when.” Write the answer down. If the answer is “the whole system stops,” that’s the design problem to solve before it solves itself in a crisis.

Formal chaos experiments at small scale can be as simple as turning off a non-critical service during a planned window and watching what happens. The goal isn’t to reproduce Netflix’s tooling. It’s to know your system’s behavior under stress before your users discover it.

The reliable system, counterintuitively, is the one that has failed the most times in controlled conditions. Every failure survived in a test is a failure prevented in production. As distributed systems lie to you about what just happened, the only honest way to know how your infrastructure behaves under pressure is to apply that pressure yourself.