The most important design decision in the history of local networking wasn’t made by a committee. It was forced by physics.

In the early 1970s, Robert Metcalfe was working at Xerox PARC when he encountered a problem that should have been fatal to the concept of shared-medium networking: put two machines on the same wire, let them both try to send data at the same time, and the signals don’t politely wait their turn. They overlap, corrupt each other, and produce electrical noise that neither sender nor receiver can make sense of. The wire becomes, briefly, useless. Metcalfe’s solution to this problem became Ethernet, and the logic he used to solve it quietly shaped every network you’ve touched since.

The Setup: One Wire, Too Many Talkers

The original Ethernet standard, formalized in 1980 by Xerox, Intel, and Digital Equipment Corporation, was built around a single coaxial cable. Every device on the network shared the same physical medium. This was elegant from a cost perspective and nightmarish from a coordination perspective. There was no central scheduler, no traffic cop, no authority deciding whose turn it was to transmit.

When two devices transmitted simultaneously, what happened was physically straightforward and operationally catastrophic. Each device injected a voltage signal onto the wire. Those signals traveled in both directions at roughly two-thirds the speed of light, met somewhere on the cable, and superimposed. The resulting voltage level was wrong, the timing was wrong, and the receiving devices couldn’t decode either message. Both transmissions were destroyed.

This is a collision. And in early Ethernet deployments using thick coaxial cable (later standardized as 10BASE5: 10 megabits per second, baseband signaling, segments of up to 500 meters), collisions weren’t edge cases. They were routine.

What Happened: CSMA/CD and the Art of Graceful Failure

Metcalfe’s protocol, Carrier Sense Multiple Access with Collision Detection, addressed this with a sequence of behaviors that seems almost socially intuitive once you understand it.

First, carrier sense: before transmitting, a device listens to the wire. If it detects voltage activity indicating another transmission in progress, it waits. This alone eliminates most collisions.

But not all of them. Two devices can both listen, both hear silence, both start transmitting within a few microseconds of each other, and collide anyway. The gap between “I heard nothing” and “my signal has reached the far end of the cable” is where collisions live. On a 500-meter coaxial segment, a signal traveling at two-thirds the speed of light takes about 2.5 microseconds to go end-to-end. During that propagation delay, a second device at the far end has no way to know a transmission is already in flight.
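The arithmetic behind that window is short enough to check by hand. The sketch below is only an illustration: the function name is made up for this example, and the velocity factor is the "roughly two-thirds" figure used above, not a measured value for any particular cable.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
VELOCITY_FACTOR = 2 / 3   # assumption: the article's "roughly two-thirds" figure
BITS_PER_MICROSECOND = 10  # 10 megabits per second


def propagation_delay_us(length_m, velocity_factor=VELOCITY_FACTOR):
    """One-way signal travel time along the cable, in microseconds."""
    signal_speed = SPEED_OF_LIGHT_M_PER_S * velocity_factor
    return length_m / signal_speed * 1e6


one_way_us = propagation_delay_us(500)
# Bits the sender has already put on the wire before the far end hears anything.
bits_in_flight = one_way_us * BITS_PER_MICROSECOND

print(f"one-way delay: {one_way_us:.2f} microseconds")
print(f"bits in flight: {bits_in_flight:.0f}")
```

Under these assumptions, a sender has committed roughly 25 bits to the wire before a device at the far end can sense the carrier at all.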

So CSMA/CD added collision detection. While transmitting, a device monitors the wire. If it detects a voltage level that couldn’t be its own signal alone, it knows a collision occurred. It immediately broadcasts a jam signal to ensure every device on the network recognizes the collision (rather than receiving a corrupted frame and quietly discarding it). Then it stops transmitting.

Now comes the part that made Ethernet work at scale: exponential backoff. After detecting a collision, the devices involved each wait a random amount of time before attempting to retransmit. The randomness is the point. If both devices waited the same fixed interval, they’d collide again immediately. Instead, each device picks a random whole number of slots, either 0 or 1 after the first collision, multiplies it by the slot time (51.2 microseconds on 10-megabit Ethernet, the time it takes to send 512 bits), and waits that long. If they collide again, the range doubles: 0 to 3. Then 0 to 7. Then 0 to 15, and so on, until the range reaches its ceiling of 0 to 1,023 slot times after the tenth collision. After sixteen consecutive failures, the device gives up and reports an error.
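The backoff rule fits in a few lines. What follows is a minimal sketch of the behavior described above, not code from any real network adapter; the function names and the `rng` parameter are inventions for this example.

```python
import random

SLOT_TIME_US = 51.2   # slot time on 10-megabit Ethernet
BACKOFF_LIMIT = 10    # the range stops doubling after the tenth collision
MAX_ATTEMPTS = 16     # after sixteen failures the device gives up


def max_slots(collisions):
    """Largest slot count a device may pick after `collisions` collisions."""
    return 2 ** min(collisions, BACKOFF_LIMIT) - 1


def backoff_delay_us(collisions, rng=None):
    """Random wait, in microseconds, before the next retransmission attempt."""
    if collisions < 1:
        raise ValueError("backoff only applies after at least one collision")
    if collisions >= MAX_ATTEMPTS:
        raise RuntimeError("too many collisions: give up and report an error")
    rng = rng or random.Random()
    slots = rng.randint(0, max_slots(collisions))  # inclusive on both ends
    return slots * SLOT_TIME_US


for n in (1, 2, 3, 4, 10, 15):
    print(f"after collision {n}: wait 0 to {max_slots(n)} slots")
```

The `min` call is the "truncated" part of the name: past the tenth collision the window stays at 1,023 slots, so a badly congested network does not push waits out indefinitely.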

This is called truncated binary exponential backoff, and it is a beautiful piece of engineering. The randomness gives two colliding devices a chance to choose different wait times: even odds after the first collision, and better odds each time the range doubles. The exponential growth of the range also means that as network traffic increases and collisions become more frequent, devices back off further, reducing load on the medium and giving the network room to recover. It is a self-regulating system built from arithmetic.
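It helps to see how quickly the odds of a repeat collision fall. The sketch below assumes the simplest case: exactly two devices, both with the same collision count, each picking a slot uniformly at random. The function name is made up for this example.

```python
from fractions import Fraction

BACKOFF_LIMIT = 10  # the window stops growing after the tenth collision


def same_slot_probability(collisions):
    """Chance that two devices pick the same slot after `collisions` collisions."""
    window = 2 ** min(collisions, BACKOFF_LIMIT)  # number of slots to choose from
    # Whatever slot the first device picks, the second matches it 1 time in `window`.
    return Fraction(1, window)


for n in (1, 2, 3, 10):
    print(f"after collision {n}: {same_slot_probability(n)}")
```

After one collision the two devices still have a one-in-two chance of colliding again; after three it is one in eight, and at the ceiling it is one in 1,024. With more than two contending devices the numbers are worse, which is why the doubling matters.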

Why It Matters: The Physics You Can’t Engineer Away

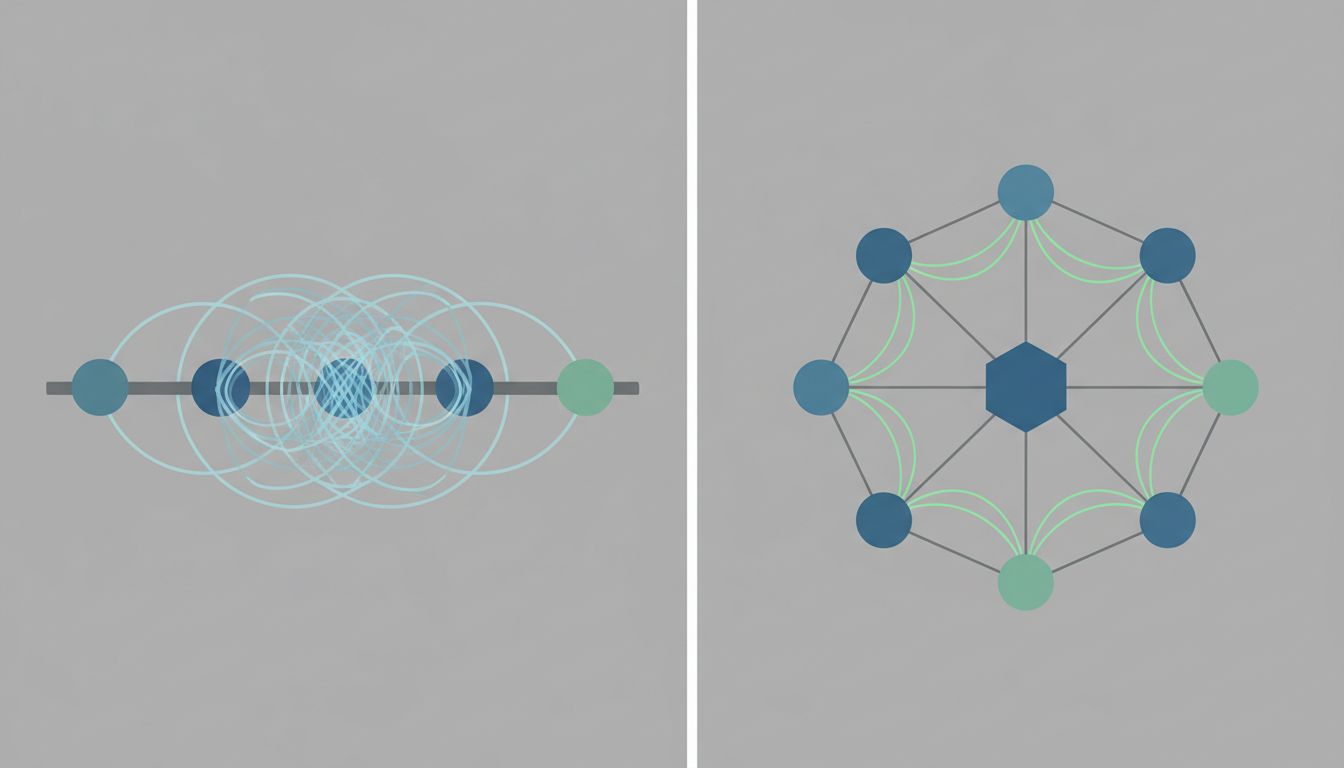

The collision domain concept, the set of devices that can interfere with each other’s transmissions, became one of networking’s foundational abstractions. Network engineers in the 1980s and 1990s spent enormous effort managing collision domain size. Too many devices on a single segment and collision rates climbed, throughput fell, and latency became unpredictable. Bridges and later switches were valuable precisely because they broke networks into smaller collision domains.

The full-duplex switch, which became standard through the 1990s, effectively eliminated collisions by giving each device its own dedicated link, with separate paths for sending and receiving. Modern switched Ethernet doesn’t need CSMA/CD because there is no shared medium to contend over. The protocol still exists in the spec for half-duplex links, but on a full-duplex link it is switched off, so in practice it almost never fires.

This is the kind of infrastructure evolution that rarely gets its due: a problem so thoroughly solved that the solution itself disappears from view. Most engineers working today have never seen a collision counter increment in a production environment. The problem feels theoretical. It wasn’t.

What We Can Learn: Degradation as Design

The deeper lesson from Ethernet’s collision handling isn’t about networking. It’s about how robust systems behave when they fail.

Most failure modes in distributed systems today involve the same fundamental tension: multiple actors, shared resources, no central authority. The problems that only appear when a million people use your software at once are often collision problems in disguise: two processes reaching for the same database row, two services writing to the same cache key, two threads mutating the same object.

What made CSMA/CD elegant was that it didn’t try to prevent all collisions. Prevention would have required coordination overhead that would have cost more than the collisions themselves. Instead, the protocol accepted that collisions would happen, detected them quickly, recovered gracefully, and used randomness to prevent the failure mode from compounding.
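The same accept, detect, recover pattern shows up in software as optimistic updates with randomized retry. The sketch below is a toy under stated assumptions: `VersionedStore`, `VersionConflict`, and `update_with_backoff` are hypothetical names invented here, and the in-memory store stands in for whatever shared resource is being contended over.

```python
import random
import time


class VersionConflict(Exception):
    """Raised when someone else wrote first: the software analogue of a collision."""


class VersionedStore:
    """Toy in-memory store (hypothetical) that rejects writes based on stale reads."""

    def __init__(self):
        self._data = {}  # key -> (version, value)

    def read(self, key):
        return self._data.get(key, (0, None))

    def write(self, key, expected_version, value):
        version, _ = self._data.get(key, (0, None))
        if version != expected_version:
            raise VersionConflict(key)  # detect the collision instead of preventing it
        self._data[key] = (version + 1, value)


def update_with_backoff(store, key, transform, max_attempts=16,
                        base_delay_s=0.001, sleep=time.sleep, rng=None):
    """Apply `transform` to the stored value, retrying with randomized backoff."""
    rng = rng or random.Random()
    for attempt in range(1, max_attempts + 1):
        version, value = store.read(key)
        try:
            store.write(key, version, transform(value))
            return attempt  # number of tries it took
        except VersionConflict:
            if attempt == max_attempts:
                raise
            # The wait window doubles with each failure, capped at 1,023 slots.
            slots = rng.randint(0, 2 ** min(attempt, 10) - 1)
            sleep(slots * base_delay_s)
```

No lock is taken and no coordinator is consulted. The writer simply tries, notices when it lost the race, and waits a random interval before trying again, so that competing writers drift apart rather than colliding in lockstep.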

This is the correct framing. A system that handles failure gracefully is more valuable than one that claims to eliminate failure entirely, because the claim is almost always false. The question isn’t whether your system collides. It’s whether it knows when it has, and what it does next.

Metcalfe’s wire taught us that. Everything since has been footnotes.