You’ve been there. Production is throwing errors, you can’t reproduce it locally, so you add some logging to get a better look. You deploy. The bug stops happening. You remove the logging. It comes back.

This experience has a name: a Heisenbug, borrowed from Heisenberg’s uncertainty principle in quantum mechanics, where the act of measuring a particle’s position disturbs its momentum. In software, the act of observing a bug changes the conditions that cause it. The name is clever, but more importantly, it points to something real and fixable once you understand what’s actually happening.

Why Observation Changes Behavior

Logging isn’t free. When you add a console.log or write to a file, you’re inserting work into your program’s execution. That work takes time, and time is often exactly what a flaky bug depends on.

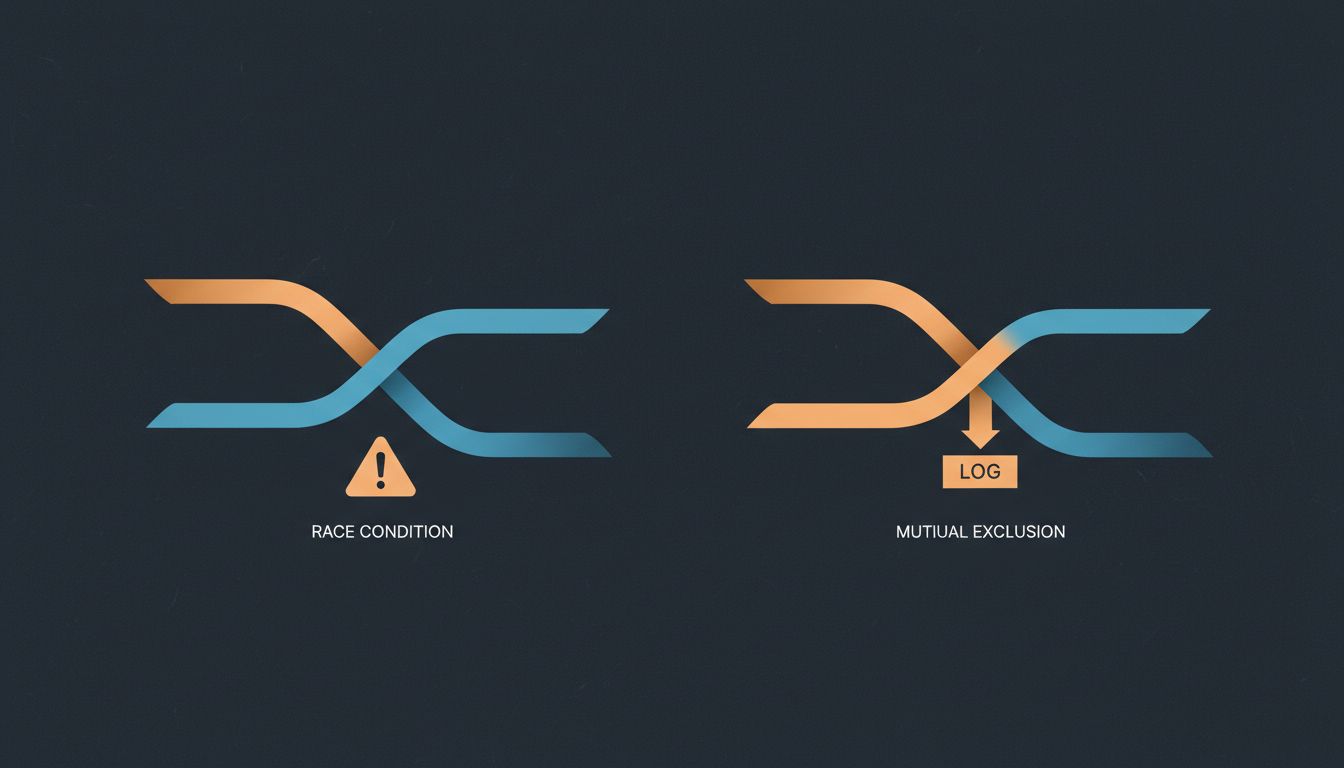

The most common cause is timing. Two threads, two async operations, or two networked services are racing to access a shared resource. Without logging, one consistently beats the other in just the wrong order. Add logging, and you slow down the winner just enough that the race resolves differently. The bug disappears, not because you fixed anything, but because you accidentally changed who wins the race.

This is why Heisenbugs are so tightly associated with concurrency. If you’ve ever tried to debug a threading issue by adding print statements and watched the problem evaporate, you’ve met this phenomenon firsthand. The underlying race condition is still there. You just can’t see it anymore because your observation is masking it.

Timing isn’t the only mechanism. Memory layout is another. In C and C++, adding a variable or a logging call can shift how objects are arranged in memory, which can hide (or occasionally reveal) buffer overflows and undefined behavior. In garbage-collected languages, the extra allocations from string formatting can trigger garbage collection at different moments, changing execution order. Even CPU cache behavior can shift when your code size changes, altering which data is in cache when a critical section runs.

The Deeper Problem: You Can’t Trust Your Evidence

Heisenbugs matter beyond the frustration they cause. They expose a fundamental reliability problem in reactive debugging, which is how most developers actually debug most of the time. You observe symptoms, form a hypothesis, insert observation tools, and check whether your hypothesis was right. That workflow assumes your tools are neutral observers. Heisenbugs prove they’re not.

This is worth sitting with for a moment. When a bug only appears in production and not in your dev environment with its different hardware, different timing, and different load, you’re already working with degraded evidence. Add logging and you degrade it further. You can convince yourself the bug is fixed when you’ve only suppressed it.

This has real costs. A team that thinks they’ve fixed an intermittent bug and hasn’t will eventually encounter it again, usually at a worse time. The work of debugging it the second time often includes untangling why the first fix appeared to work. That kind of confusion is expensive in ways that are hard to put a number on.

What Non-Invasive Debugging Actually Looks Like

The practical response to Heisenbugs is to reach for debugging techniques that don’t meaningfully alter execution.

Hardware watchpoints are the first tool worth knowing. A debugger watchpoint on a memory address tells the CPU itself to pause execution when that address is read or written. The CPU handles this at the hardware level, making it far less invasive than software breakpoints or logging calls. If you’re debugging C, C++, or Rust and you suspect memory corruption, this is where to start.

Tracing instead of logging. Tools like Linux’s perf, strace, dtrace, or eBPF let you observe system calls and kernel events with dramatically lower overhead than userspace logging. They’re not zero-overhead, but they’re much closer to it. For a bug that disappears with a 5ms logging delay but survives a 50-microsecond trace overhead, you can actually make progress.

Reproducible environment construction. Many Heisenbugs that seem timing-dependent are actually environment-dependent. They occur on a 16-core production server but not your 8-core laptop. They occur under load but not in isolation. Before you add a single log line, try to reproduce the conditions: the CPU count, the memory pressure, the number of concurrent connections. Docker and VMs make this more tractable than it used to be.

Logging at the boundary, not inside the race. If you must add logging, add it outside the critical section rather than inside it. Log what happened after the fact rather than trying to trace execution mid-flight. This doesn’t eliminate the observer effect but reduces it substantially.

When the Bug Requires Logging to Find

Sometimes you genuinely cannot avoid instrumenting your code to get enough information to diagnose a problem. Here’s how to do it more safely.

First, use asynchronous logging. Synchronous logging (where your code blocks until the log write completes) adds the most latency and disrupts timing the most. Async logging writes to a buffer and flushes in a background thread. Libraries like log4j2 in Java, spdlog in C++, or structured logging systems built around ring buffers (as used in many high-frequency trading systems) keep overhead in the low-microsecond range.

Second, log at the right granularity. You don’t need to log every iteration of a hot loop. Log transitions and state changes. If a bug involves a resource being acquired and released, logging the acquire and release with timestamps tells you more than logging every operation in between, and it’s far less invasive.

Third, leave your logging in. This sounds obvious but many teams strip logging before they ship, which means every production bug requires a new debugging cycle. Structured, always-on logging at sensible verbosity levels, with the ability to dial up detail per-component without redeployment, is more useful than heroic logging added in a crisis. This is one reason observability has become its own engineering discipline.

The Variant That Fixes Itself

There’s a related cousin worth knowing about: the bug that disappears when the engineer goes home. Load drops, memory pressure eases, a background job finishes, and the race condition resolves cleanly for hours. The bug is real but self-concealing under the right conditions.

If you’ve read about bugs that fix themselves, you’ll recognize that these are often the same underlying class of problem. The system’s behavior depends on conditions that aren’t stable, and stable conditions happen to be the conditions under which you’re watching.

The common thread is that these bugs require you to debug the environment, not just the code. What was the system load? What other processes were running? What was the network latency to the database? These questions are harder than reading a stack trace, but they’re the right questions.

Making Your Systems More Observable Without Breaking Them

The long-term answer to Heisenbugs isn’t better logging. It’s building systems where you can observe state without altering it. A few principles that pay off consistently:

Prefer immutable state where you can. If shared state is read-only, it can’t race. Functional approaches and immutable data structures don’t eliminate all concurrency bugs, but they eliminate a large class of them.

Expose internal metrics through read-only channels. Prometheus-style metrics, health endpoints, and counters that get sampled externally are largely non-invasive compared to logging mid-execution. They let you see the shape of what happened over time rather than tracing individual operations.

Write deterministic tests for timing-sensitive code. If you can control the clock in your tests (dependency injection for time is underused), you can force the race conditions that are hard to reproduce naturally. The bug becomes reproducible on demand rather than appearing only under observation.

Review concurrency with the understanding that fixing one symptom can expose another. Synchronizing a previously unsynchronized operation can introduce new timing dependencies elsewhere. Heisenbugs are often a sign of architectural debt in the concurrency model, not just a single bad line of code.

What This Means

A Heisenbug isn’t a special, exotic creature. It’s what happens when your debugging tools change the thing you’re trying to measure, usually by altering timing. Understanding the mechanism gives you a different way to approach the problem: instead of reaching immediately for more logging, ask what the bug depends on. Is it time? Memory layout? Load? Once you know what the bug is sensitive to, you can choose tools that don’t disturb that sensitivity, and you can start building systems that are easier to observe honestly in the first place.